ShodhKosh: Journal of Visual and Performing Arts
ISSN (Online): 2582-7472

AI and Machine Learning in Audio-Visual Media Production: Intelligent Content Creation, Editing and Audience Analytics

Anurag Agarwal 1, Dr. Ritu Agarwal 2, Amit Saxena 3, Ram Krishna Singh 4, Varun Chaudhary 5, Shilpi Singhal 6

1 Assistant Professor, Department of MBA, IMS Engineering College, Ghaziabad, Uttar Pradesh, India

2 Associate Professor, Department of Electronics and Communication Engineering, ABES Engineering College, Ghaziabad, Uttar Pradesh, India

3 Assistant Professor, Department of Computer Science and Engineering, Moradabad Institute of Technology (MIT), Moradabad, Uttar Pradesh, India

4 Assistant Professor, Department of Computer Science, IMS Engineering College, Ghaziabad, Uttar Pradesh, India

5 Assistant Professor, Department of MCA, IMS Engineering College, Ghaziabad, Uttar Pradesh, India

6 Assistant Professor, Department of MCA, IMS Engineering College, Ghaziabad, Uttar Pradesh, India

ABSTRACT

Artificial Intelligence (AI) and Machine Learning (ML) are increasingly redefining the paradigms of audio-visual media production by enabling intelligent content creation, automated editing, and data-driven audience analytics. This paper explores the transformative role of AI-driven technologies across the entire media production pipeline, including pre-production planning, generative content synthesis, post-production automation, and personalized content delivery. Advanced techniques such as deep learning, generative adversarial networks, and diffusion models facilitate high-quality video generation, sound enhancement, and real-time editing, thereby significantly reducing production time and cost. Furthermore, AI-powered analytics provide actionable insights into audience behavior, sentiment, and engagement patterns, enabling targeted storytelling and optimized content distribution strategies. While these advancements enhance creativity and efficiency, they also introduce critical challenges related to ethical concerns, intellectual property, bias, and content authenticity. This study presents a comprehensive academic perspective on the integration of AI and ML in audio-visual media, highlighting both technological innovations and socio-cultural implications. The findings emphasize the necessity for balanced adoption, combining human creativity with machine intelligence to achieve sustainable and responsible media production ecosystems.

Received 10 January 2026

Accepted 03 March 2026

Published 27 April 2026

Corresponding Author: Anurag Agarwal, anuragagarwal336@gmail.com

DOI 10.29121/shodhkosh.v7.i5s.2026.7804

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License. With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Artificial Intelligence, Machine Learning, Audio-Visual Media, Content Creation, Video Editing, Audience Analytics

1. INTRODUCTION

The rapid evolution of Artificial Intelligence (AI) and Machine Learning (ML) has significantly transformed the landscape of audio-visual media production, ushering in a new era characterized by automation, personalization, and intelligent decision-making. Traditionally, media production has been a labor-intensive and time-consuming process, requiring extensive human expertise across multiple stages, including scripting, filming, editing, and distribution. However, with the advent of deep learning architectures, neural networks, and generative models, the production pipeline has undergone a paradigm shift. AI systems are now capable of generating scripts, composing music, editing videos, and even synthesizing realistic human faces and voices, thereby redefining creative workflows and enabling unprecedented levels of efficiency and scalability.

In parallel, the growing demand for personalized and engaging content across digital platforms has further accelerated the adoption of AI-driven solutions in media industries. Streaming platforms, social media, and digital broadcasting ecosystems rely heavily on ML algorithms to analyze audience behavior, predict preferences, and deliver tailored content experiences. This convergence of intelligent content creation and data-driven audience analytics has transformed not only how media is produced but also how it is consumed and monetized. Consequently, AI is no longer a supplementary tool but a central component in shaping the future of audio-visual storytelling and media innovation.

1.1. Overview

This research paper explores the multifaceted role of AI and ML in audio-visual media production, focusing on three core domains: intelligent content creation, automated editing and post-production, and audience analytics. The study investigates how emerging technologies such as Generative Adversarial Networks (GANs), diffusion models, natural language processing, and multimodal learning frameworks are reshaping creative processes. Furthermore, it examines the integration of predictive analytics and recommendation systems in enhancing audience engagement and optimizing content distribution strategies.

1.2. Scope and Objectives

The scope of this study encompasses the end-to-end media production lifecycle, from pre-production conceptualization to post-production and audience interaction. The primary objectives are:

1) to analyze the technological advancements enabling AI-driven content creation and editing;

2) to evaluate the effectiveness of ML-based audience analytics in improving engagement and personalization;

3) to identify the ethical, legal, and socio-cultural implications associated with AI integration in media production; and

4) to propose future research directions for sustainable and responsible adoption of AI technologies in the media industry.

1.3. Author Motivations

The motivation behind this research stems from the increasing intersection of computational intelligence and creative industries. As AI continues to democratize content creation and disrupt traditional media workflows, it becomes imperative to critically examine both its opportunities and limitations. The authors aim to provide a comprehensive academic perspective that bridges the gap between technological innovation and creative practice, while also addressing emerging concerns related to authenticity, intellectual property, and algorithmic bias.

1.4. Paper Structure

The paper is organized as follows: Section 2 presents a detailed literature review, highlighting key developments and identifying existing research gaps. Section 3 discusses AI-driven intelligent content creation techniques. Section 4 examines machine learning applications in editing and post-production. Section 5 explores audience analytics and personalization strategies. Section 6 analyzes ethical and socio-cultural implications. Section 7 outlines specific outcomes, challenges, and future research directions, followed by the conclusion in Section 8.

In summary, the integration of AI and ML into audio-visual media production represents a transformative shift that redefines the boundaries of creativity, efficiency, and audience engagement. While these technologies offer immense potential to revolutionize the industry, their adoption must be guided by ethical considerations and human-centric design principles to ensure sustainable and responsible innovation.

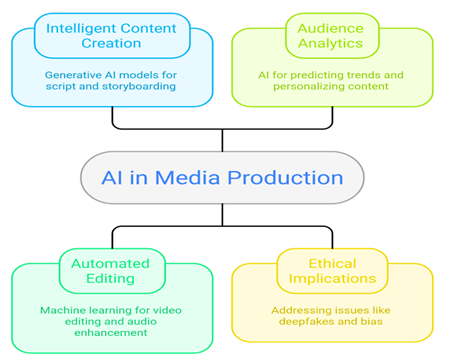

Figure 1

Figure 1

AI in Media

Production

2. Literature Review with Research Gap

The application of Artificial Intelligence in creative industries has gained significant scholarly attention over the past decade, with researchers exploring its potential to enhance efficiency, creativity, and user engagement. Early studies focused on the foundational role of AI in multimedia processing, particularly in areas such as image recognition, audio signal processing, and pattern detection. The work on multimodal emotion recognition demonstrated the capability of AI systems to interpret human emotions from combined audio-visual data, thereby enabling more responsive and adaptive media experiences Shrivastava and Sharma (2016). This laid the groundwork for subsequent advancements in intelligent content personalization and user-centric media systems.

Recent research has expanded the scope of AI applications to include automated content generation and editing. The integration of deep learning models, particularly GANs and neural rendering techniques, has enabled the creation of highly realistic synthetic media, including deepfake videos and virtual avatars. Studies have highlighted the impact of AI on audio-visual production workflows, emphasizing its ability to automate repetitive tasks such as scene detection, color grading, and audio enhancement Gavran et al. (2025). Furthermore, advancements in audio signal processing have demonstrated the effectiveness of AI in improving sound quality, noise reduction, and speech synthesis, thereby enhancing overall production quality Steinmetz et al. (2025).

In addition to production-related applications, significant attention has been given to AI-driven audience analytics and personalization. Machine learning algorithms are widely used to analyze viewer behavior, predict preferences, and recommend content, thereby increasing user engagement and retention. Research on AI-driven personalization in multimedia systems has shown that adaptive content delivery can significantly improve user satisfaction and platform performance Lee and Kim (2024). Moreover, industry reports have highlighted the transformative potential of AI in reshaping media and entertainment ecosystems, emphasizing its role in enabling data-driven decision-making and targeted content strategies World Economic Forum (2025).

The efficiency and scalability of AI-powered media systems have also been explored in various studies. AI-enabled tools have been shown to streamline production processes, reduce costs, and enhance creative flexibility. For instance, the application of AI in digital media production has demonstrated improvements in operational efficiency and content quality, enabling creators to experiment with new formats and storytelling techniques Saha et al. (2022). Similarly, comprehensive reviews of AI in creative industries have underscored its potential to augment human creativity while also raising concerns about over-reliance on automated systems Singh et al. (2025).

Despite these advancements, several challenges and limitations persist. One of the primary concerns is the ethical and legal implications of AI-generated content. Issues related to intellectual property, authorship, and ownership remain unresolved, as traditional legal frameworks are not adequately equipped to address the complexities of AI-driven creativity Praveen et al. (2025). Additionally, the proliferation of deepfake technology poses significant risks to information integrity and public trust, highlighting the need for robust detection and regulation mechanisms.

Another critical limitation is the lack of transparency and explainability in AI systems. Many deep learning models operate as “black boxes,” making it difficult to understand their decision-making processes and ensure accountability. This lack of interpretability can lead to biased outcomes, particularly in audience analytics and recommendation systems, where algorithmic bias may reinforce existing inequalities. Furthermore, the dependency on large datasets for training AI models raises concerns about data privacy and security, particularly in the context of user behavior analysis.

2.1. Research Gap

Although existing literature provides valuable insights into the applications of AI in audio-visual media production, several research gaps remain. First, there is a lack of integrated frameworks that combine content creation, editing, and audience analytics into a unified AI-driven ecosystem. Most studies focus on isolated components of the production pipeline, limiting the understanding of holistic system interactions. Second, there is insufficient research on the balance between human creativity and machine intelligence, particularly in terms of collaborative workflows and co-creation models. Third, the ethical and regulatory dimensions of AI in media production require further exploration, especially in developing standardized guidelines for responsible AI usage. Finally, there is a need for more empirical studies that evaluate the real-world impact of AI technologies on media production outcomes, including audience engagement, cost efficiency, and creative quality.

In conclusion, while AI and ML have significantly advanced the capabilities of audio-visual media production, the field remains in a state of rapid evolution. Addressing the identified research gaps will be crucial for developing sustainable, ethical, and effective AI-driven media ecosystems that balance innovation with responsibility.

3. AI-Driven Intelligent Content Creation in Audio-Visual Media

The emergence of Artificial Intelligence (AI) and Machine Learning (ML) has fundamentally redefined the paradigm of content creation in audio-visual media by enabling automated, scalable, and data-driven creative processes. Intelligent content creation leverages deep learning architectures such as Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), Transformers, and diffusion models to synthesize high-quality visual, textual, and auditory outputs. These models not only replicate human-like creativity but also enhance productivity by reducing manual intervention across pre-production and production stages.

At the core of AI-driven content generation lie probabilistic modeling and optimization theory. For instance, GANs operate through a minimax game between a generator $G$ and a discriminator $D$, defined as:

$$\min_{G} \max_{D} V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_{z}(z)}[\log(1 - D(G(z)))]$$

where $x$ represents real data samples and $z$ denotes latent variables. This formulation trains the generator to produce realistic images or videos that the discriminator cannot reliably distinguish from real data. In audio-visual media, GANs are widely used for deepfake generation, virtual character synthesis, and scene reconstruction.
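As a minimal illustrative sketch (not taken from the study), the GAN value function, i.e. the mean of log D(x) over real samples plus the mean of log(1 - D(G(z))) over generated samples, can be estimated from discriminator scores. The function name `gan_value` and the score lists are hypothetical and chosen only for illustration.

```python
import math

def gan_value(d_real, d_fake):
    """Monte Carlo estimate of V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))].

    d_real: discriminator outputs D(x) on real samples, each in (0, 1)
    d_fake: discriminator outputs D(G(z)) on generated samples, each in (0, 1)
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# Hypothetical discriminator scores, for illustration only.
# A discriminator that separates real from fake well yields a high value.
confident = gan_value([0.9, 0.8, 0.95], [0.1, 0.2, 0.05])
# A fully fooled discriminator outputs 0.5 everywhere, giving 2 * log(0.5).
fooled = gan_value([0.5, 0.5, 0.5], [0.5, 0.5, 0.5])

print(confident, fooled)
```

The discriminator tries to push this value up, while the generator tries to push it down toward the "fooled" case.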

Diffusion models, a more recent advancement, have demonstrated superior performance in high-fidelity content generation. These models define a forward Markov chain that gradually corrupts structured data with Gaussian noise:

$$q(x_t \mid x_{t-1}) = \mathcal{N}\left(x_t; \sqrt{1 - \beta_t}\, x_{t-1}, \beta_t I\right)$$

where $\beta_t$ controls the noise variance. The reverse process learns to reconstruct data from noise:

$$p_{\theta}(x_{t-1} \mid x_t) = \mathcal{N}\left(x_{t-1}; \mu_{\theta}(x_t, t), \Sigma_{\theta}(x_t, t)\right)$$

This approach has been successfully applied in video synthesis, image generation, and frame interpolation tasks, enabling photorealistic outputs in cinematic production.
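The forward (noising) half of a diffusion model can be sketched in a few lines, assuming the usual Gaussian step in which each value is scaled by sqrt(1 - beta) and perturbed by noise of variance beta. The linear noise schedule, the helper names, and the toy three-value "frame" are assumptions made for illustration; the learned reverse process is not shown.

```python
import math
import random

def forward_step(x_prev, beta, rng):
    """One forward step: x_t ~ N(sqrt(1 - beta) * x_prev, beta * I)."""
    scale = math.sqrt(1.0 - beta)
    sigma = math.sqrt(beta)
    return [scale * v + sigma * rng.gauss(0.0, 1.0) for v in x_prev]

def forward_chain(x0, betas, seed=0):
    """Apply the forward step once per entry in the noise schedule."""
    rng = random.Random(seed)
    x = list(x0)
    for beta in betas:
        x = forward_step(x, beta, rng)
    return x

def signal_fraction(betas):
    """Amplitude of the original signal that survives: sqrt(prod(1 - beta_t))."""
    return math.sqrt(math.prod(1.0 - b for b in betas))

# Assumed linear schedule from 1e-4 to 0.02 over 1000 steps.
betas = [0.0001 + (0.02 - 0.0001) * t / 999 for t in range(1000)]
x_T = forward_chain([1.0, -1.0, 0.5], betas)  # toy "frame" of three values

print(x_T, signal_fraction(betas))
```

After the full schedule almost none of the original signal remains, which is why generation can start from pure noise and run the learned reverse chain.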

Natural Language Processing (NLP) models, particularly Transformer architectures, play a crucial role in automated script generation and storytelling. The attention mechanism, defined as:

$$\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V$$

enables contextual understanding and coherent narrative generation. These models are capable of generating scripts, dialogues, and subtitles, thereby streamlining pre-production workflows.
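For illustration, scaled dot-product attention (dot products of queries and keys, divided by the square root of the key dimension, passed through a softmax and used to weight the values) can be written in plain Python. The tiny matrices below are hypothetical and serve only to show the mechanics.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention for row-major lists of vectors."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Weighted sum of the value vectors.
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out

# Hypothetical toy inputs: one query, two key/value pairs.
Q = [[1.0, 0.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]
result = attention(Q, K, V)

print(result)
```

Because the query aligns with the first key, the output leans toward the first value vector.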

Multimodal learning further enhances intelligent content creation by integrating visual, textual, and auditory data. A typical multimodal fusion function can be expressed as:

$$F = f(X_v, X_a, X_t)$$

where $X_v$, $X_a$, and $X_t$ represent visual, audio, and textual features, respectively. This enables synchronized content generation, such as lip-syncing, emotion-aware storytelling, and immersive virtual environments.

Moreover, reinforcement learning is increasingly being used for adaptive content generation, where the objective is to maximize a reward function based on user engagement:

$$J(\pi) = \mathbb{E}_{\pi}\left[\sum_{t} r_t\right]$$

where $\pi$ is the policy and $r_t$ is the reward at time $t$. This allows AI systems to generate content tailored to audience preferences dynamically.

In summary, AI-driven intelligent content creation represents a convergence of probabilistic modeling, deep learning, and multimodal integration, enabling scalable and high-quality media production. However, challenges such as content authenticity, ethical concerns, and computational complexity remain critical areas for further research.

4. Machine Learning-Based Editing and Post-Production Automation

The post-production phase of audio-visual media has undergone significant transformation through the integration of Machine Learning techniques, enabling automation of complex editing tasks, enhancement of audio-visual quality, and real-time processing capabilities. ML-based systems are capable of performing scene segmentation, object tracking, audio synchronization, and visual effects generation with high precision and efficiency.

One of the fundamental tasks in video editing is shot boundary detection, which can be modeled as a classification problem:

$$\hat{y} = f(x; \theta)$$

where $x$ represents frame features and $\theta$ denotes model parameters. Convolutional Neural Networks (CNNs) are commonly used to extract spatial features, while Recurrent Neural Networks (RNNs) capture temporal dependencies.

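The loss function for such models is typically defined as cross-entropy:

$$L = -\sum_{i} y_i \log \hat{y}_i$$

where $y_i$ is the ground-truth label and $\hat{y}_i$ the predicted probability for class $i$. Minimizing this loss drives the model toward accurate classification of scene transitions.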
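A minimal sketch of the cross-entropy loss for shot boundary classification follows, assuming two classes (no boundary, boundary) and one-hot labels. The loss is averaged over frames here, which is a common convention rather than something the text specifies; the labels and predicted probabilities are hypothetical.

```python
import math

def cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean categorical cross-entropy: -(1/N) * sum_n sum_c y_nc * log(p_nc)."""
    total = 0.0
    for target, probs in zip(y_true, y_prob):
        # eps guards against log(0) for probabilities that are exactly zero.
        total -= sum(t * math.log(max(p, eps)) for t, p in zip(target, probs))
    return total / len(y_true)

# Hypothetical one-hot labels per frame: [no boundary, boundary].
labels = [[1, 0], [0, 1], [1, 0]]
good = [[0.9, 0.1], [0.2, 0.8], [0.95, 0.05]]  # confident, mostly correct
poor = [[0.4, 0.6], [0.7, 0.3], [0.5, 0.5]]    # uncertain or wrong

loss_good = cross_entropy(labels, good)
loss_poor = cross_entropy(labels, poor)

print(loss_good, loss_poor)
```

The better-calibrated predictions produce the lower loss, which is the signal gradient-based training uses to improve the classifier.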

Audio enhancement and speech processing rely on signal processing and deep learning techniques. The Short-Time Fourier Transform (STFT) is widely used to analyze audio signals:

$$X(m, \omega) = \sum_{n=-\infty}^{\infty} x[n]\, w[n - m]\, e^{-j\omega n}$$

where $x[n]$ is the audio signal and $w[n]$ is a window function. Deep learning models are then applied to denoise and enhance the signal.
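The STFT can be sketched directly from its definition: slice the signal into overlapping frames, multiply each frame by a window, and take a discrete Fourier transform of each. The Hann window, frame length, hop size, and the 100 Hz test tone below are assumptions chosen for illustration; production systems would use an FFT library instead of this direct sum.

```python
import cmath
import math

def hann(n):
    """Periodic Hann window of length n."""
    return [0.5 - 0.5 * math.cos(2.0 * math.pi * i / n) for i in range(n)]

def stft(signal, frame_len=64, hop=32):
    """Return one spectrum (non-negative frequency bins) per windowed frame."""
    window = hann(frame_len)
    frames = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        seg = [signal[start + i] * window[i] for i in range(frame_len)]
        spectrum = [
            sum(seg[i] * cmath.exp(-2j * math.pi * k * i / frame_len)
                for i in range(frame_len))
            for k in range(frame_len // 2 + 1)
        ]
        frames.append(spectrum)
    return frames

# Hypothetical test tone: 100 Hz sine sampled at 640 Hz, so each bin spans 10 Hz.
sr = 640
tone = [math.sin(2.0 * math.pi * 100.0 * n / sr) for n in range(256)]
spec = stft(tone)
peak_bin = max(range(len(spec[0])), key=lambda k: abs(spec[0][k]))

print(len(spec), peak_bin)
```

The strongest bin of the first frame corresponds to the tone frequency (bin index times 10 Hz), which is the time-frequency representation that denoising models typically operate on.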

Table 1 Comparison of AI Techniques in Post-Production Tasks

| Technique | Application | Model Type | Performance Benefit |
|---|---|---|---|
| CNN | Scene Detection | Deep Learning | High accuracy in frame classification |
| RNN/LSTM | Temporal Editing | Sequential Model | Improved sequence prediction |
| GAN | Visual Effects | Generative Model | Realistic CGI generation |
| Autoencoders | Noise Reduction | Unsupervised Learning | Enhanced audio clarity |
| Transformers | Subtitle Generation | NLP Model | Context-aware transcription |

Comparative analysis of machine learning techniques used in post-production automation highlighting their applications and performance benefits.

Video stabilization and enhancement can be formulated as an optimization problem:

![]()

where ![]() represents transformation matrices and ![]() is observed data. This ensures smooth and stable video output.

Table 2 Performance Metrics of AI-Based Editing Systems

| Metric | Definition | Importance |
|---|---|---|
| PSNR | Peak Signal-to-Noise Ratio | Measures video quality |
| SSIM | Structural Similarity Index | Evaluates perceptual similarity |
| WER | Word Error Rate | Assesses speech recognition |
| Latency | Processing Delay | Determines real-time capability |

Key evaluation metrics used to assess the performance of AI-driven editing and post-production systems.
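Two of the metrics in Table 2 are simple enough to sketch directly. The following illustrative code, which assumes 8-bit pixel values for PSNR and whitespace-tokenized transcripts for WER, computes PSNR from the mean squared error and WER from the word-level edit distance. The sample pixel values and sentences are hypothetical.

```python
import math

def psnr(reference, test, max_value=255.0):
    """Peak Signal-to-Noise Ratio in dB between two equal-length pixel lists."""
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf  # identical signals
    return 10.0 * math.log10(max_value ** 2 / mse)

def wer(reference, hypothesis):
    """Word Error Rate: word-level edit distance divided by reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    # dp[i][j] = edit distance between ref[:i] and hyp[:j]
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i
    for j in range(len(hyp) + 1):
        dp[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(dp[i - 1][j] + 1,         # deletion
                           dp[i][j - 1] + 1,         # insertion
                           dp[i - 1][j - 1] + cost)  # substitution or match
    return dp[len(ref)][len(hyp)] / len(ref)

# Hypothetical examples: every pixel off by 10, and a transcript with two errors.
quality_db = psnr([100, 100, 100, 100], [110, 110, 110, 110])
error_rate = wer("the cat sat on the mat", "the cat sit on mat")

print(quality_db, error_rate)
```

Higher PSNR indicates a closer match to the reference frame, while lower WER indicates a more accurate transcript.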

Speech recognition systems use probabilistic models such as Hidden Markov Models (HMMs) combined with deep learning:

$$\hat{W} = \arg\max_{W} P(W \mid O) = \arg\max_{W} P(O \mid W)\, P(W)$$

where $W$ is the word sequence and $O$ is the observed signal.

Table 3 AI Applications in Audio-Visual Enhancement

| Application | Technique | Outcome |
|---|---|---|
| Noise Reduction | Autoencoder | Cleaner audio signals |
| Color Grading | CNN | Enhanced visual aesthetics |
| Lip Syncing | GAN | Realistic synchronization |
| Frame Interpolation | Optical Flow | Smooth video transitions |

Overview of AI-driven applications in enhancing audio-visual quality during post-production.

Furthermore, deepfake and CGI generation rely on advanced neural rendering techniques, enabling realistic facial animation and motion capture. The optimization of rendering processes can be expressed as:

![]()

which minimizes reconstruction error.

In conclusion, ML-based editing and post-production automation significantly enhance efficiency, accuracy, and creative possibilities in media production. The integration of advanced algorithms and data-driven techniques enables real-time processing and high-quality outputs, paving the way for next-generation media ecosystems. However, issues related to computational cost, ethical misuse, and model bias must be addressed to ensure responsible deployment.

5. Audience Analytics and Personalization using AI

The proliferation of digital platforms and streaming services has necessitated the development of sophisticated audience analytics systems capable of processing vast amounts of user interaction data. Artificial Intelligence (AI) and Machine Learning (ML) techniques play a pivotal role in extracting meaningful insights from heterogeneous datasets, enabling personalized content delivery, predictive modeling, and engagement optimization. Audience analytics integrates statistical learning, behavioral modeling, and real-time data processing to enhance both user experience and platform performance.

At the core of audience analytics lies predictive modeling, where user preferences are estimated using supervised and unsupervised learning techniques. A commonly used approach is collaborative filtering, which can be mathematically expressed as:

$$\hat{r}_{ui} = \mu + b_u + b_i + p_u^{T} q_i$$

where $\hat{r}_{ui}$ is the predicted rating of user $u$ for item $i$, $\mu$ is the global mean rating, $b_u$ and $b_i$ are user and item biases, and $p_u$ and $q_i$ are latent feature vectors. This model forms the foundation of recommendation systems used by platforms such as Netflix and YouTube.
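The biased latent-factor prediction (global mean plus user bias plus item bias plus the dot product of the user and item latent vectors) can be sketched as follows. The stochastic gradient update, the learning rate, and the regularization strength are standard choices assumed here for illustration; the text does not specify how the parameters are fitted, and all numeric values are hypothetical.

```python
def predict_rating(mu, b_u, b_i, p_u, q_i):
    """r_hat = mu + b_u + b_i + dot(p_u, q_i)."""
    return mu + b_u + b_i + sum(p * q for p, q in zip(p_u, q_i))

def sgd_step(rating, mu, b_u, b_i, p_u, q_i, lr=0.01, reg=0.02):
    """One regularized gradient step on the squared error of a single rating."""
    err = rating - predict_rating(mu, b_u, b_i, p_u, q_i)
    new_b_u = b_u + lr * (err - reg * b_u)
    new_b_i = b_i + lr * (err - reg * b_i)
    new_p = [p + lr * (err * q - reg * p) for p, q in zip(p_u, q_i)]
    new_q = [q + lr * (err * p - reg * q) for p, q in zip(p_u, q_i)]
    return new_b_u, new_b_i, new_p, new_q

# Hypothetical parameters for one user and one title.
mu, b_u, b_i = 3.5, 0.2, -0.1
p_u, q_i = [0.5, 1.0], [1.0, 0.2]

before = predict_rating(mu, b_u, b_i, p_u, q_i)
b_u2, b_i2, p_u2, q_i2 = sgd_step(5.0, mu, b_u, b_i, p_u, q_i)
after = predict_rating(mu, b_u2, b_i2, p_u2, q_i2)

print(before, after)
```

After one update on an observed rating of 5.0, the prediction moves closer to the observed value.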

Deep learning-based recommendation systems extend this framework using neural collaborative filtering:

$$\hat{y}_{ui} = \sigma\left(h^{T}(p_u \odot q_i)\right)$$

where $\sigma$ is the activation function, $\odot$ represents element-wise multiplication, and $h$ is a learned weight vector. These models capture nonlinear interactions between users and content, improving recommendation accuracy.

Sentiment analysis is another critical component, enabling the extraction of emotional responses from user-generated content such as reviews, comments, and social media posts. Using Natural Language Processing (NLP), sentiment polarity can be computed as:

$$S = \sum_{i} w_i x_i$$

where $w_i$ represents the weight of a word and $x_i$ denotes its occurrence. Transformer-based models further enhance sentiment detection through contextual embeddings.
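A lexicon-based polarity score, computed as the sum over words of each word's weight times its number of occurrences, can be sketched as below. The lexicon, its weights, and the sample review are hypothetical; real systems would use a curated lexicon or a trained model.

```python
def sentiment_score(text, lexicon):
    """Sum of (word weight * occurrence count) over words found in the lexicon."""
    counts = {}
    for token in text.lower().split():
        token = token.strip(".,!?;:\"'")  # drop surrounding punctuation
        counts[token] = counts.get(token, 0) + 1
    return sum(lexicon[w] * n for w, n in counts.items() if w in lexicon)

# Hypothetical polarity weights, for illustration only.
LEXICON = {"great": 1.0, "love": 1.5, "boring": -1.0, "terrible": -2.0}

review = "Great visuals, great score, but a boring plot."
score = sentiment_score(review, LEXICON)

print(score)
```

A positive total suggests favorable sentiment and a negative total unfavorable sentiment, although this bag-of-words approach ignores negation and context, which is where contextual models help.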

Table 4 Audience Behavior Metrics and AI Interpretation

| Metric | Description | AI Technique | Insight Generated |
|---|---|---|---|
| Watch Time | Duration of content consumption | Regression Models | Viewer engagement level |
| Click-through Rate | Ratio of clicks to impressions | Classification Models | Content attractiveness |
| Retention Rate | Percentage of viewers completing content | Survival Analysis | Content quality |
| Bounce Rate | Early exit from content | Clustering | Content mismatch |

Key audience behavior metrics and corresponding AI techniques used for interpreting user engagement patterns.

Clustering techniques such as K-means are used to segment audiences:

$$J = \sum_{k=1}^{K} \sum_{x \in C_k} \lVert x - \mu_k \rVert^{2}$$

where $C_k$ represents clusters and $\mu_k$ their centroids. This enables targeted marketing and personalized content recommendations.
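K-means alternates between assigning each point to its nearest centroid and moving each centroid to the mean of its assigned points, which reduces the sum of squared distances between points and their centroids. The sketch below uses hypothetical two-dimensional viewer features (for example, weekly watch hours and average session length) and fixed initial centroids so that the result is deterministic.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(points, centroids, iterations=10):
    """Plain Lloyd iterations; returns final centroids and the last assignment."""
    clusters = []
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda k: sq_dist(p, centroids[k]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        centroids = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster)) if cluster else centroids[k]
            for k, cluster in enumerate(clusters)
        ]
    return centroids, clusters

# Hypothetical viewer features: light viewers and heavy viewers.
viewers = [(1.0, 2.0), (1.5, 1.8), (1.2, 2.2), (8.0, 9.0), (8.5, 9.5), (9.0, 8.8)]
final_centroids, final_clusters = kmeans(viewers, [viewers[0], viewers[3]])

print(final_centroids)
```

Each resulting cluster can then be treated as an audience segment with its own recommendation or marketing strategy.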

Table 5 Comparison of Recommendation Algorithms

| Algorithm | Type | Strength | Limitation |
|---|---|---|---|
| Collaborative Filtering | Memory-based | Simple implementation | Cold-start problem |
| Matrix Factorization | Model-based | High accuracy | Requires large datasets |
| Deep Learning Models | Neural Networks | Captures nonlinear patterns | Computationally intensive |
| Hybrid Models | Combined Approach | Improved robustness | Complex architecture |

Comparative evaluation of different recommendation algorithms used in AI-driven audience analytics.

Real-time personalization is achieved through reinforcement learning, where the system adapts content delivery based on user feedback:

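$$Q(s, a) \leftarrow Q(s, a) + \alpha \left[ r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right]$$

where $Q(s, a)$ represents the action-value function, $\alpha$ is the learning rate, $\gamma$ is the discount factor, and $r$ is the reward received after taking action $a$ in state $s$. This allows dynamic optimization of user engagement.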
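A tabular Q-learning update illustrates how an action-value estimate is nudged toward the observed reward plus the discounted value of the best next action. The state names, action names, reward, learning rate, and discount factor below are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def q_update(Q, state, action, reward, next_state, alpha=0.1, gamma=0.9):
    """Apply one Q-learning update in place and return the new Q(state, action)."""
    best_next = max(Q[next_state].values())
    td_target = reward + gamma * best_next
    Q[state][action] += alpha * (td_target - Q[state][action])
    return Q[state][action]

# Hypothetical action-value table for a recommendation agent.
Q = {
    "browsing": {"recommend_drama": 0.0, "recommend_comedy": 0.0},
    "watching": {"recommend_drama": 1.0, "recommend_comedy": 0.5},
}

# The user clicked the recommended comedy (reward 1.0) and started watching.
new_value = q_update(Q, "browsing", "recommend_comedy", reward=1.0, next_state="watching")

print(new_value)
```

Here the updated estimate is 0.1 * (1.0 + 0.9 * 1.0 - 0.0) = 0.19, so the agent now slightly prefers recommending comedy to a browsing user.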

Table 6 AI Techniques for Audience Analytics

| Technique | Application | Output |
|---|---|---|
| NLP | Sentiment Analysis | Emotional insights |
| CNN | Image/Video Analysis | Content preference |
| RNN/LSTM | Sequential Behavior | Viewing patterns |
| Reinforcement Learning | Dynamic Recommendation | Personalized content |

Overview of AI techniques applied in audience analytics and their respective outputs.

Figure 2 AI Techniques Range from Static to Dynamic Content Recommendation.

In summary, AI-driven audience analytics enables precise targeting, improved engagement, and enhanced monetization strategies. However, concerns related to data privacy, algorithmic bias, and ethical usage must be addressed to ensure sustainable implementation.

6. Ethical, Legal, and Socio-Cultural Implications

The integration of AI and ML in audio-visual media production introduces complex ethical, legal, and socio-cultural challenges that require critical examination. While AI enhances efficiency and creativity, it also raises concerns regarding authenticity, ownership, bias, and societal impact.

One of the primary ethical issues is the generation of deepfake content, which can be modeled using GAN-based architectures. The realism of such content poses risks to information integrity and public trust. The probability of detecting manipulated content can be expressed as:

$$P(D \mid X)$$

where $D$ represents detection and $X$ is the observed media. Improving detection accuracy remains a key research challenge.

Table 7 Ethical Challenges in AI-Driven Media

| Issue | Description | Impact |
|---|---|---|
| Deepfakes | Synthetic media manipulation | Misinformation |
| Bias | Algorithmic discrimination | Social inequality |
| Privacy | Data misuse | Loss of trust |
| Transparency | Black-box models | Lack of accountability |

Major ethical challenges associated with AI integration in audio-visual media production.

Intellectual property rights present another significant concern. The ownership of AI-generated content is ambiguous, as it involves contributions from both human creators and machine algorithms. The valuation of such content can be modeled as:

$$V = f(H, A, D)$$

where $H$ represents human input, $A$ AI contribution, and $D$ dataset influence.

Table 8 Legal Issues in AI Media Production

| Aspect | Challenge | Requirement |
|---|---|---|
| Copyright | Ownership ambiguity | Legal frameworks |
| Licensing | Dataset usage | Transparency |
| Liability | Content misuse | Accountability mechanisms |

Legal challenges and requirements for regulating AI-generated media content.

Bias in AI systems arises due to skewed training data, leading to unfair outcomes. Bias can be quantified using statistical parity:

$$P(\hat{Y} = 1 \mid A = 0) = P(\hat{Y} = 1 \mid A = 1)$$

where $A$ is a protected attribute. Ensuring fairness requires balanced datasets and algorithmic adjustments.
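Statistical parity requires that the rate of positive predictions be the same across groups defined by a protected attribute. A minimal sketch of measuring the gap between two groups follows; the predictions and group labels are hypothetical, with a prediction of 1 standing for, say, "content is recommended to this user".

```python
def positive_rate(predictions, groups, group):
    """Share of positive predictions among members of one group."""
    selected = [p for p, g in zip(predictions, groups) if g == group]
    return sum(selected) / len(selected)

def statistical_parity_difference(predictions, groups, group_a, group_b):
    """Difference in positive-prediction rates; 0 means parity holds."""
    return (positive_rate(predictions, groups, group_a)
            - positive_rate(predictions, groups, group_b))

# Hypothetical binary predictions and protected-attribute values.
preds = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

spd = statistical_parity_difference(preds, groups, "a", "b")

print(spd)
```

Group "a" receives positive predictions 75% of the time and group "b" 25% of the time, so the difference of 0.5 indicates a substantial disparity that rebalancing or algorithmic adjustment would aim to reduce.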

Table 9 Socio-Cultural Impacts of AI in Media

| Impact Area | Positive Effect | Negative Effect |
|---|---|---|
| Creativity | Enhanced innovation | Reduced human originality |
| Employment | New job roles | Job displacement |
| Culture | Global content reach | Cultural homogenization |

Socio-cultural implications of AI adoption in audio-visual media production.

Figure 3 AI Impact on Media

Explainability is another critical issue, as many AI models operate as black boxes. The interpretability of models can be improved using techniques such as SHAP values:

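$$\phi_i = \sum_{S \subseteq N \setminus \{i\}} \frac{|S|!\,(|N| - |S| - 1)!}{|N|!} \left[ f(S \cup \{i\}) - f(S) \right]$$

which quantify the contribution of each feature $i$, where $N$ is the set of all features and $f(S)$ is the model output when only the features in $S$ are used.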
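For a small number of features, Shapley values can be computed exactly by averaging each feature's marginal contribution over all subsets of the other features. The sketch below does this by brute force; the `engagement` function and its feature names are a hypothetical toy model, not an actual recommender, and libraries that approximate SHAP values for large models work differently.

```python
from itertools import combinations
from math import factorial

def shapley_values(features, value_fn):
    """Exact Shapley value of each feature for a set function value_fn."""
    n = len(features)
    phi = {}
    for i in features:
        others = [f for f in features if f != i]
        total = 0.0
        for size in range(n):
            # Weight |S|! (n - |S| - 1)! / n! for subsets S of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                total += weight * (value_fn(s | {i}) - value_fn(s))
        phi[i] = total
    return phi

def engagement(subset):
    """Hypothetical engagement score given which features are 'present'."""
    score = 0.0
    if "thumbnail" in subset:
        score += 2.0
    if "title" in subset:
        score += 1.0
    if "thumbnail" in subset and "title" in subset:
        score += 1.0  # interaction effect, split evenly by the Shapley rule
    return score

phi = shapley_values(["thumbnail", "title", "length"], engagement)

print(phi)
```

The contributions sum to the difference between the full model output and the empty baseline, and the unused "length" feature receives zero, which is what makes the attribution easy to interpret.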

Table 10 Mitigation Strategies for Ethical AI

| Strategy | Description | Benefit |
|---|---|---|
| Explainable AI | Transparent models | Increased trust |
| Data Governance | Ethical data usage | Privacy protection |
| Regulation | Policy frameworks | Legal clarity |
| Human Oversight | Hybrid systems | Balanced decision-making |

Strategies to address ethical and legal challenges in AI-driven media systems.

In conclusion, while AI offers transformative potential for audio-visual media production, it also necessitates robust ethical, legal, and socio-cultural frameworks. Addressing these challenges is essential to ensure responsible innovation and sustainable development in the media industry.

7. Specific Outcomes, Challenges and Future Research Directions

7.1. Specific Outcomes

The integration of AI and ML in audio-visual media production has led to measurable improvements in production efficiency, cost reduction, and scalability. Automation of repetitive editing tasks, real-time rendering, and AI-assisted content generation enables faster turnaround times and enhanced creative experimentation. Furthermore, AI-driven audience analytics facilitates personalized content delivery, improving viewer engagement and monetization strategies. The convergence of multimodal AI systems has also enabled seamless synchronization of audio, video, and textual elements, thereby enhancing storytelling quality and immersive experiences.

7.2. Challenges

Despite these advancements, several challenges persist. Ethical concerns such as deepfake misuse and content manipulation threaten information integrity and societal trust. Intellectual property issues remain unresolved due to ambiguity in authorship of AI-generated content. Technical limitations, including model bias, lack of explainability, and dependency on large datasets, hinder reliability and fairness. Additionally, the potential displacement of human labor and over-reliance on automated systems raise socio-economic concerns within the media industry.

7.3. Future Research Directions

Future research should focus on developing explainable AI models to ensure transparency and accountability in media production. Hybrid frameworks that combine human creativity with AI assistance can enhance originality while maintaining ethical standards. Advancements in real-time AI processing, edge computing, and multimodal learning are expected to further advance immersive media experiences. Moreover, establishing global regulatory frameworks for AI-generated content, including copyright laws and ethical guidelines, is essential. Research into bias mitigation, trustworthiness, and human-centered AI design will play a crucial role in shaping sustainable and responsible media ecosystems.
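The hybrid human-AI frameworks mentioned above can be illustrated with a simple routing rule: edits the model is confident about are applied automatically, and the rest are queued for a human editor. This is a minimal sketch only; the `Suggestion` type, the `route` function, the action names, and the 0.9 threshold are hypothetical choices made for illustration and do not describe any specific production tool.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    clip_id: str
    action: str
    confidence: float  # model's self-reported score in [0, 1] (assumed)


def route(suggestions, threshold=0.9):
    """Split suggestions into auto-applied edits and edits held for human review."""
    auto, review = [], []
    for s in suggestions:
        (auto if s.confidence >= threshold else review).append(s)
    return auto, review


# Hypothetical batch of AI-proposed edits.
batch = [
    Suggestion("c1", "trim_silence", 0.97),
    Suggestion("c2", "replace_background", 0.62),
    Suggestion("c3", "color_match", 0.91),
]
auto, review = route(batch)
print("auto-applied:", [s.clip_id for s in auto])
print("needs review:", [s.clip_id for s in review])
```

Raising the threshold sends more decisions to the human editor, which trades speed for oversight; the appropriate value would depend on the task and on how well the model's confidence scores are calibrated.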

8. Conclusion

The integration of AI and Machine Learning into audio-visual media production represents a paradigm shift that redefines creativity, efficiency, and audience engagement. While intelligent systems enable automation and innovation across content creation, editing, and analytics, they simultaneously introduce complex ethical, legal, and technical challenges. A balanced approach that integrates human expertise with AI capabilities is essential to harness the full potential of these technologies. Future advancements must prioritize responsible AI deployment, ensuring that technological progress aligns with societal values and creative authenticity.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Abbas, S. H., Vashisht, S., Bhardwaj, G., Rawat, R., Shrivastava, A., and Rani, K. (2022). An aDvanced Cloud-Based Plant Health Detection System Based on Deep Learning. In Proceedings of the 2022 International Conference on Contemporary Computing and Informatics (IC3I) (1357–1362). https://doi.org/10.1109/IC3I56241.2022.10072786

Attar, T. V. (2022a). Investigations on Enhanced DC Conductivity and Dielectric Properties by Rare Earth Doping of Lanthanum

Fluoride. Shodhasamhita, 9(2), 180–184.

Attar, T. V. (2022b). Studies on Cytotoxicity

of LaF₃: Pr, Ho Nanoparticles

for Possible Biomedical Applications. Shodhasamhita, 9(2/1), 254–257.

Attar, T. V., and Momin, S. (2025). Nanotechnology in Drug Delivery: Challenges and Future Prospects. Advances in Bioresearch, 16(2), 63–69.

Bagane, P., Joseph, S. G., Singh, A., Shrivastava, A., Prabha, B., and Shrivastava, A. (2021). Classification of Malware Using Deep Learning Techniques. In Proceedings of the 2021 9th International Conference on Cyber and IT Service Management (CITSM) (1–7). https://doi.org/10.1109/CITSM52892.2021.9588795

Chakraborty, S., Borole, Y. D., Nanoty, A. S., Shrivastava, A., Jain, S. K., and Rinawa, M. L. (2021). Smart Remote Solar Panel Cleaning Robot with Wireless Communication. In Proceedings of the 2021 9th International Conference on Cyber and IT Service Management (CITSM) (1–5). https://doi.org/10.1109/CITSM52892.2021.9588917

Chawla, D., Chawla, D., Shrivastava, A., Adnan, M. M., Sireesha, B., and Khan, I. (2025b). Blockchain and Federated Learning Integration for Secure IoT and Cyber-Physical Systems. In Proceedings of the 2025 IEEE International Conference on ICT in Business Industry and Government (ICTBIG) (1–7). https://doi.org/10.1109/ICTBIG68706.2025.11323990

Chawla, D., Chawla, D., Shrivastava, A., Adnan, M. M., Sireesha, B., and Khan, I. (2025c). AI-Driven Predictive Infrastructure for Smart and Sustainable Cities. In Proceedings of the 2025 IEEE International Conference on ICT in Business Industry and Government (ICTBIG) (1–7). https://doi.org/10.1109/ICTBIG68706.2025.11324009

Chawla, D., Chawla, D., Shrivastava, A., Habelalmateen, M. I., Dixit, M., and Dwivedi, S. P. (2025a). Explainable AI for Mental Health Diagnosis: Enhancing Transparency, Trust, and Clinical Decision-Making. In Proceedings of the 2025 International Conference on Artificial Intelligence for Innovations in Healthcare Industries (ICAIIHI) (1–6). https://doi.org/10.1109/ICAIIHI67124.2025.11403514

Chawla, L., Shrivastava, A., Habelalmateen, M. I., Shekhar, H., Mittal, P., and Sharma, S. (2025). Federated Foundation Models for Healthcare Diagnostics. In Proceedings of the 2025 International Conference on Artificial Intelligence for Innovations in Healthcare Industries (ICAIIHI) (1–6). https://doi.org/10.1109/ICAIIHI67124.2025.11403022

Das, B., Attar, T. V., Sharma, N., Sharma, R., Anandhan, A., and Acharya, S. (2025). Biochemistry to Solve Environmental Degradation and Sustainable Future. International Journal of Environmental Sciences, 11(20s), 2527–2545. https://doi.org/10.64252/bz71eq58

Dhanke, J., Attar, T. V., and Zode, P. (2025). Optimal Transport Theory in Machine Learning: Applications to Generative Modelling and Domain Adaptation. International Journal of Environmental Sciences, 11(21s), 2613–2630. https://doi.org/10.64252/bz71eq58

Dinesh, D., G, S., Habelalmateen, M. I., Kalaivaani, P. C. D., Venkatesh, C., and Shrivastava, A. (2025). Artificial Intelligence-Based Self-Driving Cars for Senior Citizens. In Proceedings of the 2025 International Conference on Inventive Material Science and Applications (ICIMA) (1469–1473). https://doi.org/10.1109/ICIMA64861.2025.11073845

Divate, S., Attar, T. V., Patil, M. A., Yadav, T. P., and Wagh, G. D. (2025). Synthesis and Characterization Applications of Nanoparticles for Photocatalytic Degradation of Organic Dyes. International Journal of Environmental Sciences, 11(23s), 695–712. https://doi.org/10.64252/n0shfg48

Fayez, H. (2026). The Impact of Artificial Intelligence Techniques on Developing Media Content Production Skills. Journal of Media Studies, 7(1), 1–15. https://doi.org/10.3390/journalmedia7010043

Gavran, I., Honcharuk, S., Mykhalov, V., Stepanenko, K., and Tsimokh, N. (2025). The Impact of Artificial Intelligence on the Production and Editing of Audiovisual Content. Preservation, Digital Technology and Culture. https://doi.org/10.1515/pdtc-2025-0022

Goyal, H. R., Shrivastava, A., Dixit, K. K., Nagpal, A., Reddy, B. R., and Kumar, J. (2025). Improving Accuracy of Object Detection in Autonomous Drones with Convolutional Neural Networks. In Proceedings of the 2025 International Conference on Computational, Communication and Information Technology (ICCCIT) (607–611). https://doi.org/10.1109/ICCCIT62592.2025.10927983

Himabindu, K., Saxena, V., S. P., K. K., Sathish, E., and Suganthi, D. (2025). IoT–Fuzzy Logic Hybrid Framework for Crop Monitoring and Yield Prediction in Smart Agriculture. In Proceedings of the 2025 2nd International Conference on Intelligent Algorithms for Computational Intelligence Systems (IACIS) (1–6). https://doi.org/10.1109/IACIS65746.2025.11211067

Hundekari, S., Praveen, R., Shrivastava, A., Hwsein, R. R., Bansal, S., and Kansal, L. (2025). Impact of AI on Enterprise Decision-Making: Enhancing Efficiency and Innovation. In Proceedings of the 2025 International Conference on Engineering, Technology and Management (ICETM) (1–5). https://doi.org/10.1109/ICETM63734.2025.11051526

Hundekari, S., Shrivastava, A., Praveen, R., Alfilh, R. H. C., Badhoutiya, A., and Singh, N. (2025). Revolutionizing Enterprise Decision-Making: Leveraging AI for Strategic Efficiency and Agility. In Proceedings of the 2025 International Conference on Engineering, Technology and Management (ICETM) (1–6). https://doi.org/10.1109/ICETM63734.2025.11051858

Kashyap, N., Singla, G., and Verma, S. (2026). Wideband Rectangular Ring-Slotted Microstrip Patch Antenna for WLAN and 5G NR sub-6 GHz Applications. In S. Pal et al. (Eds.), Emerging Technology and Sustainable Solutions (CCIS Vol. 2611). Springer. https://doi.org/10.1007/978-3-032-11491-4_32

Kashyap, N., Verma, S., Sandhu, A., and Sharma, A. (2024). Bandwidth Improvement of Slits-Slots with DGS Circular Patch Antenna for Wireless Communication. In Proceedings of the 2024 IEEE International Conference of Electron Devices Society Kolkata Chapter (EDKCON) (1–5). https://doi.org/10.1109/EDKCON62339.2024.10870815

Kotiyal, A., Shrivastava, A., Nagpal, A., Manjunatha, Dixit, K. K., and Reddy, R. A. (2025). Design and Evaluation of IoT Prototypes: Leveraging Test-Beds for Performance Assessment and Innovation. In Proceedings of the 2025 International Conference on Computational, Communication and Information Technology (ICCCIT) (814–820). https://doi.org/10.1109/ICCCIT62592.2025.10927925

Kumar, K., Kaur, A., Ramkumar, K. R., Shrivastava, A., Moyal, V., and Kumar, Y. (2021). Design of a Power-Efficient AES Algorithm on Artix-7 FPGA for Green Communication. In Proceedings of the 2021 International Conference on Technological Advancements and Innovations (ICTAI) (561–564). https://doi.org/10.1109/ICTAI53825.2021.9673435

Kumar, S. (2025a). AI-Driven Digital Health: Pioneering Innovations, Overcoming Challenges, and Shaping Future Frontiers. In Proceedings of the 2025 IEEE 16th Annual Information Technology, Electronics and Mobile Communication Conference (IEMCON) (665–670). https://doi.org/10.1109/IEMCON67450.2025.11381123

Kumar, S. (2025a). Multi-Modal Healthcare Dataset for AI-Based Early Disease Risk Prediction Data Set]. IEEE Dataport. https://doi.org/10.21227/p1q8-sd47

Kumar, S. (2025a). Radiomics-Driven AI for Adipose Tissue Characterization: Towards Explainable Biomarkers of Cardiometabolic Risk in Abdominal MRI. In Proceedings of the 2025 IEEE 16th Annual Ubiquitous Computing, Electronics and Mobile Communication Conference (UEMCON) (827–833). https://doi.org/10.1109/UEMCON67449.2025.11267685

Kumar, S. (2025b). A Generative AI-Powered Digital Twin for Adaptive NASH care. Communications of the ACM. https://doi.org/10.1145/3743154

Kumar, S. (2025b). FedGenCDSS Dataset for Federated Generative AI in Clinical Decision Support Data set]. IEEE Dataport. https://doi.org/10.21227/dwh7-df06

Kumar, S. (2025b). Generative Artificial Intelligence for Liver Disease Diagnosis from Clinical and Imaging Data. In Proceedings of the 2025 IEEE 16th Annual Ubiquitous Computing, Electronics and Mobile Communication Conference (UEMCON) (581–587). https://doi.org/10.1109/UEMCON67449.2025.11267677

Kumar, S. (2025c). Edge-AI Sensor Dataset for Real-Time Fault Prediction in Smart Manufacturing Data Set]. IEEE Dataport. https://doi.org/10.21227/s9yg-fv18

Kumar, S. (2025c). Generative AI-Driven Classification of Alzheimer’s Disease Using Hybrid Transformer Architectures. In Proceedings of the 2025 IEEE International Symposium on Technology and Society (ISTAS) (1–6). https://doi.org/10.1109/ISTAS65609.2025.11269635

Kumar, S. (2025c). Over-the-Air Federated Transformer Learning for Dynamic 6G Network Slicing and Real-Time Edge Intelligence. In Proceedings of the 2025 IEEE 16th Annual Information Technology, Electronics and Mobile Communication Conference (IEMCON) (651–656). https://doi.org/10.1109/IEMCON67450.2025.11381265

Kumar, S. (2025d). GenAI Integration in Clinical Decision Support Systems: Towards Responsible and Scalable AI in Healthcare. In Proceedings of the 2025 IEEE International Symposium on Technology and Society (ISTAS) (1–7). https://doi.org/10.1109/ISTAS65609.2025.11269649

Kumar, S. (2025d). Multimodal Generative AI Framework for Therapeutic Decision Support in Autism Spectrum Disorder. In Proceedings of the 2025 IEEE 16th Annual Ubiquitous Computing, Electronics and Mobile Communication Conference (UEMCON) (309–315). https://doi.org/10.1109/UEMCON67449.2025.11267611

Kumar, S. (2025e). EdgeCareRT: A Real-Time Federated Generative AI Framework for Clinical Decision Support in Mobile and Remote Healthcare Settings. In Proceedings of the 2025 IEEE 16th Annual Information Technology, Electronics and Mobile Communication Conference (IEMCON) (678–683). https://doi.org/10.1109/IEMCON67450.2025.11381238

Kumar, S. (2026). Engineering Agentic Context for Trustworthy Clinical autonomy. Communications of the ACM (Blog@CACM).

Kumar, S., Shrivastava, A., Praveen, R. V. S., Subashini, A. M., Vemuri, H. K., and Alsalami, Z. (2025). Future of Human–AI Interaction: Bridging the Gap with LLMs and AR Integration. In Proceedings of the 2025 World Skills Conference on Universal Data Analytics and Sciences (WorldSUAS) (1–6). https://doi.org/10.1109/WorldSUAS66815.2025.11199115

Lee, C., and Kim, J. (2024). AI-Driven Personalization and Content Adaptation in Multimedia Systems. IEEE Transactions on Multimedia.

Macwan, K., Gupta, A. K., Attar, T. V., Somlal, J., Reddy, T., and Chawla, L. (2026). Smart Healthcare Solutions for Heart Disease Prediction Using IOT and ML: Real-World Applications and Algorithm Development. International Journal of Drug Delivery Technology, 16(18s), 307–319. https://doi.org/10.25258/ijddt.16.18s.32

Macwan, K., Gupta, A. K., Attar, T. V., Somlal, J., Reddy, T., and Chawla, L. (2026). Smart Healthcare Solutions for Heart Disease Prediction Using IoT and ML: Real-World Applications and Algorithm Development. International Journal of Drug Delivery Technology, 16(18s), 307–319. https://doi.org/10.25258/ijddt.16.18s.32

Nimbalkar, V., Chawla, L., Adnan, M. M., Bhansali, A., Gupta, M., and Kalra, R. (2025). A Human-Centered Approach to Interpretable Machine Learning in Clinical Decision Support Systems. In Proceedings of the 2025 International Conference on Artificial Intelligence for Innovations in Healthcare Industries (ICAIIHI) (1–5). https://doi.org/10.1109/ICAIIHI67124.2025.11403473

Nutalapati, V., Aida, R., Vemuri, S. S., Al Said, N., Shakir, A. M., and Shrivastava, A. (2025). Immersive AI: Enhancing AR and VR Applications with Adaptive Intelligence. In Proceedings of the 2025 World Skills Conference on Universal Data Analytics and Sciences (WorldSUAS) (1–6). https://doi.org/10.1109/WorldSUAS66815.2025.11199210

Pandey, D., Pandey, B. K., George, A. H., George, A. S., Sunder, S., Jolly, A., and Verma, S. (2025). Scientific Progress in Artificial Intelligence for Time-Stamped Interpretation of Camera Images in Medical Safety Systems. In Advanced Secure Transmission of Telemedicine-Based Biomedical Images (91–114). IGI Global. https://doi.org/10.4018/979-8-3693-9821-0.ch005

Patil, V. H., Shrivastava, A., Verma, D., Rao, A. L. N., Chaturvedi, P., and Akram, S. V. (2022). Smart Agricultural System Based on Machine Learning and IOT Algorithm. In Proceedings of the 2022 2nd International Conference on Technological Advancements in Computational Sciences (ICTACS) (740–746). https://doi.org/10.1109/ICTACS56270.2022.9988530

Praveen, R. V. S., Shrivastava, A., Sharma, G., Shakir, A. M., Gupta, M., and Peri, S. S. S. R. G. (2025). Overcoming Adoption Barriers: Strategies for Scalable AI Transformation in Enterprises. In Proceedings of the 2025 International Conference on Engineering, Technology and Management (ICETM) (1–6). https://doi.org/10.1109/ICETM63734.2025.11051446

Praveen, R., Shrivastava, A., Sharma, G., Shakir, A. M., Gupta, M., and Peri, S. S. S. R. G. (2025). Overcoming Adoption Barriers: Strategies for Scalable AI Transformation in Enterprises. In Proceedings of the 2025 International Conference on Engineering, Technology and Management (ICETM) (1–6). https://doi.org/10.1109/ICETM63734.2025.11051446

Praveen, R., Shrivastava, A., Sharma, G., Shakir, A. M., Gupta, M., and Peri, S. S. S. R. G. (2025). Overcoming Adoption Barriers: Strategies for Scalable AI Transformation in enterprises. In Proceedings of the 2025 International Conference on Engineering, Technology and Management (ICETM) (1–6). https://doi.org/10.1109/ICETM63734.2025.11051446

Saha, B. C., Shrivastava, A., Jain, S. K., Nigam, P., and Hemavathi, S. (2022). On-Grid Solar Microgrid Temperature Monitoring and Assessment in Real Time. Materials Today: Proceedings, 62(Part 7). https://doi.org/10.1016/j.matpr.2022.04.896

Saxena, P., Saini, U., and Saxena, V. (2023). Design and Implementation of Sound Signal Reconstruction Algorithm for Blue Hearing System Using Wavelet. In Automation and computation (405–411). CRC Press. https://doi.org/10.1201/9781003333500-47

Saxena, P., and Saxena, V. (2022). Comparative Study of White Gaussian Noise Reduction for Different Signals Using Wavelet. International Journal of Research—GRANTHAALAYAH, 10(7), 112–123. https://doi.org/10.29121/granthaalayah.v10.i7.2022.4711

Saxena, V. (2012). Fourier Descriptors Under Rotation, Scaling, Translation and Various Distortion for Hand-Drawn Planar Curves. Journal of Experimental Sciences, 3(1), 5–7.

Saxena, V. (2014). International Journal of Emerging Technologies in Computational and Applied Sciences, 9(2), 170–175.

Saxena, V., Saxena, P., Farooqui, Y., Attar, T. V., Jain, P., and Gaurav, K. (2026). Advanced Healthcare Analytics using AI, ML, and IOT: A CNN-Based Algorithmic Approach. International Journal of Drug Delivery Technology, 16(17s), 542–553. https://doi.org/10.25258/ijddt.16.17s.64

Saxena, V., Singh, M., Saxena, P., Singh, M., Srivastava, A. P., Kumar, N., Deepak, A., and Shrivastava, A. (2024). Utilizing Support Vector Machines for Early Detection of Crop Diseases in Precision Agriculture: A Data Mining Perspective. International Journal of Intelligent Systems and Applications in Engineering, 12(16s), 281–288.

Saxena, V., and Kapoor, V. V. (2011). Behavior of Normalized Moments Under Distortion and Optimization. Recent Research in Science and Technology, 3(7), 73–76.

Shrivastava, A., Bhadula, S., Kumar, R., Kaliyaperumal, G., Rao, B. D., and Jain, A. (2025). AI in Medical Imaging: Enhancing Diagnostic Accuracy with Deep Convolutional Networks. In Proceedings of the 2025 International Conference on Computational, Communication and Information Technology (ICCCIT) (542–547). https://doi.org/10.1109/ICCCIT62592.2025.10927771

Shrivastava, A., Chakkaravathy, M., and Shah, M. A. (2022). A Comprehensive Analysis of Machine Learning Techniques in Biomedical Image Processing Using Convolutional Neural Networks. In Proceedings of the 2022 International Conference on Contemporary Computing and Informatics (IC3I) (1363–1369). https://doi.org/10.1109/IC3I56241.2022.10072911

Shrivastava, A., Praveen, R., Aida, R., Vemuri, K., Vemuri, S. S., and Husain, S. O. (2025). A Comparative Analysis of Graph Neural Networks for Social Network Data Mining. In Proceedings of the 2025 World Skills Conference on Universal Data Analytics and Sciences (WorldSUAS) (1–6). https://doi.org/10.1109/WorldSUAS66815.2025.11199244

Shrivastava, A., Praveen, R., Al-Fatlawy, R. R., Bansal, S., Lakhanpal, S., and Archakam, J. K. K. (2025). AI-Powered Precision Medicine: Transforming Diagnostics, Treatment, and Drug Discovery with Machine Learning. In Proceedings of the 2025 International Conference on Information, Implementation, and Innovation in Technology (I2ITCON) (1–6). https://doi.org/10.1109/I2ITCON65200.2025.11210611

Shrivastava, A., Praveen, R., Gangadhar, B., Vemuri, H. K., Rasool, S., and Al-Fatlawy, R. R. (2025). Drone Swarm Intelligence: AI-Driven Autonomous Coordination for Aerial Applications. In Proceedings of the 2025 World Skills Conference on Universal Data Analytics and Sciences (WorldSUAS) (1–6). https://doi.org/10.1109/WorldSUAS66815.2025.11199241

Shrivastava, A., and Pandit, A. K. (2012). Design and Performance Evaluation of a NOC-Based Router Architecture for MPSoC. In Proceedings of the 2012 International Conference on Computational Intelligence and Communication Networks (CICN) (468–472). https://doi.org/10.1109/CICN.2012.85

Shrivastava, A., and Sharma, S. K. (2016). Various Arbitration Algorithm for on-Chip (AMBA) Shared Bus Multiprocessor SoC. In Proceedings of the 2016 IEEE Students’ Conference on Electrical, Electronics and Computer Science (SCEECS) (1–7). https://doi.org/10.1109/SCEECS.2016.7509330

Singh, C., Basha, S. A., Bhushan, A. V., Venkatesan, M., Chaturvedi, A., and Shrivastava, A. (2025). A Secure IoT-Based Wireless Sensor Network Data Aggregation and Dissemination System. Cybernetics and Systems, 56(6), 784–796. https://doi.org/10.1080/01969722.2023.2176653

Sridhar, V., Ranga Rao, K. V., Hussain, S., Ullah, S. S., Alroobaea, R., Abdelhaq, M., and Alsaqour, R. (2023). Multivariate Aggregated NOMA for Resource-Aware Wireless Network Communication Security. Computers, Materials and Continua, 74(1), 1694–1708. https://doi.org/10.32604/cmc.2023.028129

Sridhar, V., Ranga Rao, K. V., Kumar, V. V., Mukred, M., Ullah, S. S., and AlSalman, H. (2022). A Machine Learning-Based Intelligent Approach for MIMO Routing in Wireless Sensor Networks. Mathematical Problems in Engineering, 2022, 1–13. https://doi.org/10.1155/2022/6391678

Sridhar, V., and Roslin, S. E. (2021). Latency and Energy-Efficient Bio-Inspired Conic Optimized and Distributed Q-Learning for D2D Communication in 5G. IETE Journal of Research, 1–13. https://doi.org/10.1080/03772063.2021.1906768

Sridhar, V., and Roslin, S. E. (2023). Multi-Objective Binomial Scrambled Bumble Bees Mating Optimization for D2D Communication in 5G Networks. IETE Journal of Research, 1–10. https://doi.org/10.1080/03772063.2023.2264248

Sridhar, V., and Roslin, S. E. (2023). Single-Linkage Weighted Steepest Gradient Adaboost Cluster-Based D2D in 5G Networks. Journal of Telecommunications and Information Technology. https://doi.org/10.26636/jtit.2023.167222

Sridhar, V., and Xu, H. (2024). Alternating Optimized RIS-Assisted NOMA and Nonlinear Partial Differential Deep Reinforced Satellite Communication. E-Prime: Advances in Electrical Engineering, Electronics and Energy. https://doi.org/10.1016/j.prime.2024.100619

Sridhar, V., and Xu, H. (2025). A Biologically Inspired Cost-Efficient Zero-Trust Security Approach for Attacker Detection and Classification in Inter-Satellite Communication Networks. Future Internet, 17(7), 304. https://doi.org/10.3390/fi17070304

Sridhar, V., et al. (2023). Bagging Ensemble Mean-Shift Gaussian Kernelized Clustering-Based D2D Connectivity-Enabled Communication for 5G Networks. E-Prime: Advances in Electrical Engineering, Electronics and Energy. https://doi.org/10.1016/j.prime.2023.100400

Sridhar, V., et al. (2023). Jarvis–Patrick Clusterative African Buffalo Optimized Deep Learning Classifier for Device-To-Device Communication in 5G Networks. IETE Journal of Research, 1–10. https://doi.org/10.1080/03772063.2023.2273946

Steinmetz, J., Uhle, C., Everardo, F., Mitcheltree, C., McElveen, J. K., Jot, J. M., and Wichern, G. (2025). Audio Signal Processing in the Artificial Intelligence Era: Challenges and Directions. Journal of the Audio Engineering Society. https://doi.org/10.17743/jaes.2022.0209

Verma, S., Meenakshi, Rattan, P., and Gopal, G. (2024). Artificial Neural Network-Based Forecasting to Anticipate the Indian Stock Market. In Proceedings of the International Conference on Smart Computing and Communication (23–34). Springer. https://doi.org/10.1007/978-981-97-1329-5_3

Verma, S., Tanwar, R., Salim, A. A., Ibrahim, A. K., and Hammoode, J. A. (2025). Assessment of Urban Heat Island Effects for Building Climate Resilience Through Remote Sensing and Machine Learning Techniques. In R. Bhat et al. (Eds.), Recent Advances in Applied Sciences. Springer. Https://doi.org/10.1007/978-3-031-84335-8_10

Vickers, J., Brodherson, M., Wrubel, A., and Bernard, C. (2026). What AI Could Mean for Film and TV Production and the Industry’s Future. McKinsey and Company.

William, P., Jaiswal, V. K., Shrivastava, A., Alfilh, R. H. C., Badhoutiya, A., and Nijhawan, G. (2025). Integration of Agent-Based and Cloud Computing for Smart Objects-Oriented IoT. In Proceedings of the 2025 International Conference on Engineering, Technology and Management (ICETM) (1–6). https://doi.org/10.1109/ICETM63734.2025.11051558

William, P., Jaiswal, V. K., Shrivastava, A., Bansal, S., Hussein, L., and Singla, A. (2025). Digital Identity Protection: Safeguarding Personal Data in the Metaverse Learning. In Proceedings of the 2025 International Conference on Engineering, Technology and Management (ICETM) (1–6). https://doi.org/10.1109/ICETM63734.2025.11051435

World Economic Forum. (2025). Artificial Intelligence in Media, Entertainment and Sport.

Yeruva, A. R., Choudhari, P., Shrivastava, A., Verma, D., Shaw, S., and Rana, A. (2022). COVID-19 Disease Detection Using Chest X-Ray Images By Means of CNN. In Proceedings of the 2022 2nd International Conference on Technological Advancements in Computational Sciences (ICTACS) (625–631). https://doi.org/10.1109/ICTACS56270.2022.9988148


This work is licensed under a: Creative Commons Attribution 4.0 International License


© ShodhKosh 2026. All Rights Reserved.