ShodhKosh: Journal of Visual and Performing ArtsISSN (Online): 2582-7472

|

|

Designing Emotion-Sensitive Interactive Art Using Biometric Sensors and Adaptive Computing Models

Dr. Ria Kohli 1![]() , Suhas Bhise 2

, Suhas Bhise 2![]() , Yan Zhao 3

, Yan Zhao 3![]()

![]() ,

Senthil Kumar A 4

,

Senthil Kumar A 4![]()

![]() ,

Muhammad Muhammad Suleiman 5

,

Muhammad Muhammad Suleiman 5![]()

![]() ,

Dr. Aneesh Wunnava 6

,

Dr. Aneesh Wunnava 6![]()

![]()

1 Research

Scholar, Department of Computer Science and Applications, Bhagwant University,

Ajmer, Rajasthan, India

2 Assistant

Professor, Department of E&TC Engineering, Vishwakarma Institute of

Technology, Pune, Maharashtra 411037, India

3 Faculty of Education Shinawatra University, Thailand

4 Professor, Computer Science and Engineering, School of Engineering,

Dayananda Sagar University, Bangalore, India

5 Lecturer, Department of Computer Science, Federal University of

Science and Technology, Kabo, Kano State, Nigeria

6 Associate Professor, Department of Electronics and Communication

Engineering, Institute of Technical Education and Research, Siksha 'O' Anusandhan (Deemed to be University), Bhubaneswar, Odisha,

India

|

|

ABSTRACT |

||

|

The dynamic

terminal to technology and human perception, creativity and human expression,

an emotion-sensitive interactive art is where creative art is

capable of responding to the emotional state of the involved

participants. The paper will give an elaborate layout of the evolution of

adaptive art systems through the multimodal biometric sensor and intelligent

computing model. Physiological stimuli to acquire real-time emotional stimuli

are electroencephalography (EEG), electrocardiography (ECG), galvanic skin

response (GSR) and eye-tracking measurements. Noise filtering, normalization

and feature extraction are some of the advanced signal preprocessing

algorithms that ensure high data representation. The machine learning and

deep learning models that have been applied to classify emotional states with

high precision are referred to as Emotional state SVM, Convolutional Neural

Networks (CNN), Long Short-Term Memory (LSTM), and Transformer-based models.

The suggested system includes a dynamic computing system that is guided by the reinforcement learning, creating the possibility of

active dialogue between the user emotions and artistic products. The system

responses are constantly upgraded by the feedback

control mechanisms, making them more engaging and individual. The proposed

approach can be proved effective as experimental assessments show an

increased rate of emotion recognition and responsiveness. |

|||

|

Received 10 January 2026 Accepted 03 March

2026 Published 11 April 2026 Corresponding Author Dr. Ria

Kohli, kohliria7337@gmail.com DOI 10.29121/shodhkosh.v7.i4s.2026.7491 Funding: This research

received no specific grant from any funding agency in the public, commercial,

or not-for-profit sectors. Copyright: © 2026 The

Author(s). This work is licensed under a Creative Commons

Attribution 4.0 International License. With the

license CC-BY, authors retain the copyright, allowing anyone to download,

reuse, re-print, modify, distribute, and/or copy their contribution. The work

must be properly attributed to its author.

|

|||

|

Keywords: Emotion Recognition, Interactive Art, Biometric

Sensors, Adaptive Computing, Deep Learning, Reinforcement Learning |

|||

1. INTRODUCTION

Art, technology and human centered computer intersections have led to interactive art systems which actively engage the audiences other than through mere observation. This is not the case with traditional art forms because the emphasis on interaction, responsiveness and real-time customization enables the viewers to be an inseparable part of the art process. The process of sensing technology evolution and artificial intelligence changes in the last years have enabled the development of a new branch of emotive interactive art when a piece of art becomes dynamic and reacts to physiological and psychological conditions of people. Such a transformation is part of a bigger movement of affective computing in which machines are created to interpret, identify and respond to human feelings in practical ways. Emotion sensitive art systems utilize biometric sensors to trace the responses in physiological responses that can be linked to emotions Chen et al. (2025). Such cues are brain activity (as assessed by electroencephalography (EEG), heart rate variability (as assessed by electrocardiography (ECG), skin conductance (as assessed by galvanic skin response (GSR)) and gaze patterns (as assessed by eye-tracking systems). The multimodal data is a rich and continuous presentation of user response and can provide deeper insights into their emotional engagement than a conventional interaction strategy such as gestures or voice signals Bhatia et al. (2022). However, much of these is rather cumbersome in transforming raw biometric data into valuable emotional explanations due to noise, inter-subject variance and the complexity of the human affect. Machine learning and deep learning based computational models have begun to be the subject of emotion recognition systems to be used to address these challenges. Support Vector Machines (SVM), Random Forests, and Convolutional Neural Networks (CNN) are widespread error-prone algorithms that are used in feature-based classification, and dynamic patterns of emotions are dynamic modeled based on Long Short-Term Memory (LSTM) networks and Transformers. Through these models, correctly, and scalably identifying emotion is made possible, the foundation of adaptive art responses DaCosta and Kinsell (2023).

Furthermore, such algorithms as dimensionality reduction and feature selection are employed to make the calculations as efficient as possible and minimize the occurrence of the necessary information of the emotional character. Besides emotion recognition, interactive art systems may also evolve in real time due to the integration of adaptive computing systems. The systems can learn the ideal artistic responses with the help of the reinforcement learning methods and the user feedback that is used to maximize the personalization and engagement. The feedback control systems retain variable visual, auditory or spatial components of the art work and create a closed circuit communication between the participant and the system. This kind of interaction between the work of art renders it as a dynamic system that evolves as it interacts Xu et al. (2020). The opportunities of emotion sensitive interactive art are pervasive and involve such domains as digital media, therapeutic, immersive installations, and research of human-computer interaction. In the artistic systems, emotional intelligence may allow the designers to create more interesting, compassionate, and significant experiences. The recent article provides the design framework of creating such systems that integrate biometric sensing, emotion recognition algorithms, and adaptive computer schemes to create the realm of interactive digital arts Kashyap et al. (2025).

2. Background and Related Work

2.1. Evolution of interactive and responsive art systems

The interactive and responsive art systems are a progressive change of the fixed form of art to the dynamic experience that includes participatory behavior. The interactive art originated in the mid 20 th century as mechanical and kinetic components of the artwork were introduced in response to the environmental stimulus, e.g., light, sound or movement. These systems were mainly based on analog systems and predefined responses and thus they were not very adaptable or complex. Since the emergence of digital computing in the late twentieth century, artists have started to incorporate software-based systems and thus allow real time interaction with sensors, projections and algorithmic control Hu et al. (2025). It is also the time when the art was shifting to immersive installations where participation of the audience became the key focus of the artistic narrative. The interactive art was further broadened by the emergence of multimedia technologies and virtual reality (VR), augmented reality (AR), and generative algorithms. These systems enabled a more advanced interaction with a user with visual, auditory and spatial dimensions Du et al. (2020). In more recent years, improvements in artificial intelligence and machine learning have made artworks to exhibit adaptive behavior, learning when they interact with the user and changing over time.

2.2. Overview of Biometric Sensing Technologies in Art

With the biometric sensing technologies, the interactive art system with emotion awareness has become a developmental part of the paper since the humans can capture the physiological signals with their affective states. The technologies capture some of the involuntary biological reactions which makes them more authentic to reflect the emotions of users compared to explicit inputs Yan et al. (2025). Examples of common biometric sensors in artistic uses are those in the domain of electroencephalography (EEG) to monitor brain activity, electrocardiography (ECG) to monitor the heart rate and variability, galvanic skin response (GSR) to detect changes in skin conductivity as a result of arousal, as well as eye-tracking devices used to analyze changes in gaze behavior and visual attention. When these sensors are incorporated into the artistic setting, one is able to acquire data in real-time and continuously, which is the foundation of the adaptive interaction Yan et al. (2025). The wearable technology has also enhanced the easy integration of the biometric devices into user experiences, thereby enhancing comfort and accessibility. Table 1 will be a summary of techniques, sensors, datasets, performance, limitations, contributions. Nevertheless, there are issues of providing reliability of the signal, minimizing the noise and inter-individual variability. Preprocessing methods have been used to overcome these problems: filtering, normalization, and artifact removal Yin et al. (2020).

Table 1

|

Table 1 Summary on Emotion-Sensitive Interactive Art Using Biometric Sensors and Adaptive Computing Models |

|||||

|

Technique/Model Used |

Biometric Modality |

Dataset/Environment |

Key Features |

Limitations |

Contribution |

|

SVM Cimtay and Ekmekcioglu

(2020) |

EEG |

DEAP Dataset |

Feature-based classification |

Limited temporal modeling |

Early EEG-based emotion recognition |

|

Random Forest |

GSR, ECG |

Controlled Lab Setup |

Multimodal physiological fusion |

Noise sensitivity |

Improved robustness with multimodal data |

|

CNN Wei et al. (2020) |

EEG |

SEED Dataset |

Spatial feature extraction |

High computation cost |

Deep learning for EEG emotion mapping |

|

LSTM |

ECG |

Wearable Sensors |

Temporal sequence modeling |

Requires large dataset |

Captures dynamic emotional patterns |

|

CNN + LSTM Fu et al. (2021) |

EEG, GSR |

DEAP Dataset |

Hybrid deep architecture |

Complex training |

Enhanced multimodal emotion detection |

|

Transformer Oh et al. (2021) |

EEG |

SEED-IV Dataset |

Self-attention mechanism |

High memory usage |

Improved long-range dependency learning |

|

SVM + PCA |

Eye-Tracking |

Visual Interaction Setup |

Dimensionality reduction |

Limited emotional depth |

Efficient gaze-based emotion inference |

|

Deep RL Patil and Rosemaro (2025) |

EEG, ECG |

Interactive Art System |

Adaptive response learning |

Reward design complexity |

Real-time adaptive art interaction |

|

Autoencoder + CNN |

GSR |

Custom Dataset |

Noise reduction + feature learning |

Data dependency |

Improved signal preprocessing |

|

Transformer + LSTM |

EEG, Eye-Tracking |

Multimodal Dataset |

Cross-modal attention |

High computational load |

State-of-the-art multimodal fusion |

|

Random Forest + RL |

ECG, GSR |

Smart Art Installations |

Adaptive feedback control |

Limited scalability |

Real-time system adaptation |

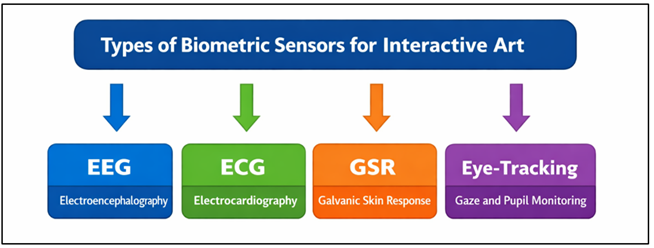

3. Biometric Sensor Integration

3.1. Types of sensors (EEG, ECG, GSR, eye-tracking)

Integration of biometric sensors is the basis of emotion sensitive interactive art systems because it allows recording physiological indicators that depict the internal states of users. Electroencephalography (EEG) is the electric activity of the brain and is extensively deployed in identifying cognitive and emotional activity, including attention, relaxation and stress. EEG signals are generally recorded with non-invasive electrodes on the scalp and its signals are very time-resolute yet they may need additional attention to interpretation. EEG, ECG, GSR, eye-tracking sensor integration can be seen in Figure 1. Electrocardiography (ECG) measures heart rhythm and gives the information on heart rate variability (HRV), highly linked to emotions arousal and stress level.

Figure 1

Figure 1 Biometric Sensor Types for Emotion-Sensitive

Interactive Art Systems

Galvanic Skin Response (GSR) or electrodermal activity is a measurement of the change in skin conductivity due to the activities of sweat glands and it is an accurate measure of emotional strength. The eye tracking system records the direction of gaze, duration of fixation and dilation of pupil and indicates the patterns of user attention and interaction in an artistic environment. All sensor modalities have their own distinct information and when they are used together, one can have the complete picture of user emotions. Multimodal sensing has become a favored method of detecting emotions due to factors of accuracy, latency, user comfort, and environmental constraints, on which the choice of the sensors is determined.

3.2. Data Acquisition Protocols and Calibration Methods

To make biometric signals reliable and consistent in interactive art systems effective data acquisition protocols are necessary. These guidelines are used to establish standard guidelines to the placement of sensors, the sampling rate, synchronization, and environmental regulation during data collection. An example is that EEG and ECG sensors should have the electrode placed at specific points in the skin to ensure that the signals are not altered, whereas GSR sensor needs to be placed on the parts of the body with the most concentration of sweat glands, like on the fingertips. Eye-tracking systems need proper calibration and alignment to be able to plot the gaze coordinates of visual stimuli.

3.3. Signal Preprocessing (Filtering, Normalization, Noise Reduction)

Signal preprocessing is a very important part of converting the raw biometric data to meaningful inputs of the emotion detection models. The signal is usually corrupted with noise, artifacts and inconsistencies that are generated by the environment, sensor movement or biological variations. Filtering methods are used to eliminate the undesired frequency content; e.g. band-pass filters are widely used to EEG and ECG signals in order to isolate desired frequency bands related to cognitive and emotional activity. Data is scaled using normalization techniques that will allow data to be properly compared when used by different users and sessions. It is especially significant in systems with multimodes with different units and magnitudes of signals. Different noise reduction methods including adaptive filtering, wavelet denoising and artifact-removal algorithms can be used to remove the noise as a result of muscle movements, eye blinking or external noise.

4. Emotion Detection and Classification Models

4.1. Supervised learning approaches

Learning Supervised learning techniques play the core role in detecting emotions, by matching the biometric data to the preset classes of emotions. The support vector machines (SVM) have been in fashion since they can run on high dimensional feature space and they form the most appropriate hyperplanes to optimize the distance between the classes. They also particularly apply well in small to medium-sized datasets and provide good generalization. The algorithm of the Random Forest is an ensemble learning algorithm, which utilizes the succession of decision trees to improve classification rates, as well as to experience fewer overfits. They are able to address non linear relationship and non-homogenous data and this renders them applicable to multimodal biometric inputs. Convolutional Neural Networks (CNN) also allow supervised learning of deep features, that is, structured features, e.g., spectrograms or spatial EEG maps. CNNs are hierarchical learners, which do not involve any manual feature engineering. They also train these models with labeled data i.e. physiological data is associated with an emotional state, e.g. happy, stressed or neutral. Even though they are very effective, the quality and variety of the labeled data are crucial to the supervised models. To achieve reliability and scalability of real-time emotion-sensitive art systems, proper training, validation and cross-validation strategies are needed.

4.2. Deep Learning Models (LSTM, Transformer-Based Architectures)

Deep learning models have also made great progress in emotion recognition by taking complex temporal and contextual patterns in biometric signals. Long Short-Term Memory (LSTM) networks are effective especially in sequential-based data as they have the capability of long-term dependence and capture temporal changes in emotional reactions. It is this that makes LSTM suitable to analyze time-series data like EEG, ECG, and GSR signals, where the emotional state of participants changes with time. LSTM networks help in reducing the vanishing gradient issue and allow the system to acquire dynamic patterns relating to the interactions of the user. The more recent architecture is transformer-based which uses self-attention to reason about sequence-wide dependencies. Transformers unlike recurrent models are able to process data in parallel, which enhances the computational efficiency and scalability. They can model complex associations among various modalities of multimodal emotion recognition systems.

4.3. Feature Selection and Dimensionality Reduction

Dimensionality reduction and feature selection are very important stages in optimizing emotion detecting models because of the added biometric data redundancy and efficiencies of the computing processes. Raw physiological signals tend to produce large numbers of features, a number of which can be irrelevant or be too correlated. The methods of feature selection are intended to select the most informative features that are useful in classifying emotions. Techniques like mutual information, correlation-based selection and recursive feature elimination are usually employed to keep the discriminative features and get rid of noise. Dimensionality reduction methods also reduce the high-dimensional data by creating lower-dimensional data, which do not substantially lose the information. Principal Component Analysis (PCA) is popularly applied to project data onto orthogonal components to maximize variance and Linear Discriminant Analysis (LDA) aims at maximizing the class separability. The methods improve model performance, training time and improve generalization. Such techniques are fundamental to the computational pipeline in emotion sensitive interactive art systems because efficient feature representation is necessary in real-time processing and proper interpretation of emotions.

5. Adaptive Computing Framework

5.1. Architecture of adaptive interactive systems

The design of adaptive interactive systems in emotion art incorporates senses, computation and response generation into a whole system that allows interaction in real-time. Multimodal biometric devices like EEG, ECG, GSR and eye-tracking devices constantly record physiological responses on users at the input layer. Such messages are sent to a preprocessor module which filters noise, normalizes and extracts features. The processed data is, then, inputted into an emotion recognition engine, made up of machine learning or deep learning models which identify the emotional state of the user. Figure 2 illustrates adaptive system inputs sensing, processing, feedback. A decision-making layer is also part of the system architecture which interprets the classified emotions and decides what artistic response is appropriate, e.g. a change in visual representation, soundscape or spatial arrangement.

Figure 2

Figure 2 Adaptive Interactive Art System Architecture

These responses are performed by a rendering engine which dynamically renders the artwork in real time. Middleware components make sure the hardware and software modules are synchronized and the latency is low with high responsiveness.

5.2. Reinforcement Learning for Dynamic Artistic Response

Reinforcement learning (RL) presents itself as an effective tool of facilitating the process of adaptive interactive art systems to learn optimal response due to an ongoing interaction between the system and the user. In this regard the system is supposed to be an agent that interacts with the environment that is represented by the emotions of the users and contextual inputs. Based on the identified emotional state based on the biometric signals, the agent will select actions (differing color schemes, animation patterns, or audio feedback). The actions are quantified through a reward function which quantifies user interaction, satisfaction or emotional orientation. The system eventually develops the ability to maximize cumulative rewards through improved response strategies. The popular techniques to carry out RL in adaptive art systems are Q-learning, Deep Q-Networks (DQN), and policy gradient. The methods allow the system to experiment with various artistic responses and use the ones that result in favorable reactions. Personalization is also supported in RL because the system is able to adjust to the specifications of a user and his or her behavioural patterns. With the use of reinforcement learning, emotion-sensitive art installations can be smarter and continually multiply to offer more rich and meaningful interactive experiences.

5.3. Feedback Control Mechanisms and System Adaptation

Stability, responsiveness and adaptability of emotive interactive art systems require feedback control mechanisms. These processes create a closed-loop system where the behavior of the system is continually affected by user responses. It starts with the perception of the emotional condition with the help of the biometric sensors and the creation of the artistic reaction. The subsequent physiological response of the user of this response is then observed and creates a feedback signal that causes additional system modifications. There are control strategies that can be used in regulating the system outputs, these are proportional-integral-derivative (PID) controllers, adaptive control models and fuzzy logic systems. Such techniques are used to avoid the sudden or uneven developments that may interfere with the user experience. Also, adaptive algorithms will dynamically update system parameters in response to feedback and allows personalization in real-time and enhanced emotional fit. The error correction and the system learning are also supported by the feedback mechanisms and allow the further refinement of the interaction strategies. Interactive art systems prevent either responsiveness or stability by incorporating the powerful feedback control, which forms immersive environments that develop according to user emotions.

6. Results and Discussion

Experimental assessment shows that the proposed system can be used to achieve high accuracy in emotion recognition and real-time responsiveness in multimodal biometric inputs. EEG, ECG, GSR and eye-tracking significantly increase the classification robustness over single sensor methods. Deep learning models, especially CNNLSTM models, are more successful in learning temporal dynamics of emotions as compared to conventional ones. Reinforcement learning facilitates dynamic artistic reactions, increasing the user feedback and personalization. On the whole the results confirm the effectiveness of this system in the establishment of immersive, emotion sensitive interactive art experience.

Table 2

|

Table 2 Performance Evaluation of Emotion Detection Models Using Biometric Data |

||||

|

Model |

Accuracy (%) |

Precision (%) |

Recall (%) |

F1-Score (%) |

|

SVM |

86.4 |

85.9 |

84.7 |

85.3 |

|

Random Forest |

88.7 |

87.5 |

86.9 |

87.2 |

|

CNN |

91.5 |

90.8 |

90.2 |

90.5 |

|

LSTM |

92.8 |

92.1 |

91.6 |

91.8 |

|

Transformer |

94.2 |

93.7 |

93.1 |

93.4 |

|

Hybrid (CNN + LSTM) |

95.6 |

95.1 |

94.8 |

94.9 |

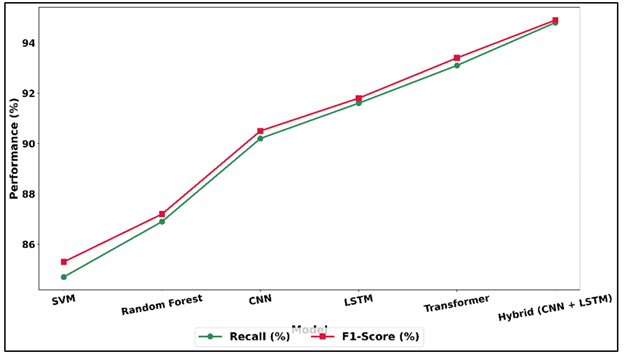

Table 2 provides a comparative analysis of different emotion detection models based on biometric data, which shows that the results are significantly more improved with the more sophisticated deep learning methods. The SVM and the Random Forest models used in the traditional machine learning models have moderate accuracy rates of 86.4% and 88.7, respectively, proving their success in working with structured features, but limited the ability to detect complex emotional patterns. Figure 3 indicates that deep models are more precise in accuracy compared to the traditional models.

Figure 3

Figure 3 Comparative Analysis of Accuracy and Precision Across Machine Learning and Deep Learning Models

The performance is enhanced to 91.5 percent with the introduction of deep learning models, which are specifically CNN, which allows the extraction of features automatically based on biometric signals. Models like LSTM that are designed so that they can learn temporal dependencies improve accuracy to 92.8, because they are able to learn sequential dependencies in physiological data. The advanced models have improved recall and F1-score as indicated by Figure 4.

Figure 4

Figure 4 Recall and F1-Score Performance Trends Across Classification Models

Transformer models have an accuracy of 94.2 which is better than LSTM since they can model longer-range dependencies by using attention mechanisms. The hybrid CNN + LSTM model has the best performance with the highest accuracy at 95.6 and F1-score at 94.9 since it has the best spatial and temporal feature combining learning capacity. In general, the findings indicate the relevance of hybrid and deep solutions to the sound and robust emotion recognition in interactive art systems.

7. Conclusion

The paper outlines a comprehensive scheme in the design of emotion sensitive interactive art systems which involves integration of biometric sensing systems and adaptive computing models. The paper leads to the importance of multimodal data collection using the EEG, ECG, GSR, and eye-tracking sensors to be able to measure a range of physiological indicators of human emotion. The effective preprocessing and feature extraction techniques provide the system with the reliable and meaningful data representation. The classification of emotional states with high accuracy and the elimination of the problems of temporal variability and signal complexity are possible due to the use of the supervised and deep learning models, including CNN, LSTM, and the Transformer-based models. Moreover, the proposed adaptive computing paradigm also leads to more responsive systems with the help of the reinforcement learning and feedback control process. These are the elements that allow the system to dynamically respond to the emotions of its users assisting them in developing personal and immersive experiences through generating artistic outputs. Modular architecture is scalable and flexible and hence can be applied in a wide range of applications such as in digital art installations, therapeutic environments, human computer interaction systems etc. These results mean that the biometric sensing and intelligent computational models are synergistic in the sense of accuracy in recognizing emotions and the involvement of the users. The project will contribute to the further evolution of the field of affective computing and interactive art providing a systematic approach to the designing of the emotionally responsive systems.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Bhatia, Y., Bari, A. H., Hsu, G.-S. J., and Gavrilova, M. (2022). Motion Capture Sensor-Based Emotion Recognition Using a Bi-Modular Sequential Neural Network. Sensors, 22(1), 403. https://doi.org/10.3390/s22010403

Chen, X., Ibrahim, Z., and Aziz, A. A. (2025). Predicting Emotional Responses in Interactive Art Using Random Forests: A Model Grounded in Enactive Aesthetics. Frontiers in Psychology, 16, 1609103. https://doi.org/10.3389/fpsyg.2025.1609103

Cimtay, Y., and Ekmekcioglu, E. (2020). Investigating the use of Pretrained Convolutional Neural Network on Cross-Subject and Cross-Dataset EEG Emotion Recognition. Sensors, 20, 2034. https://doi.org/10.3390/s20072034

DaCosta, B., and Kinsell, C. (2023). Serious Games in Cultural Heritage: A Review of Practices and Considerations in the Design of Location-Based Games. Education Sciences, 13, 47. https://doi.org/10.3390/educsci13010047

Du, G., Zhou, W., Li, C., Li, D., and Liu, P. X. (2020). An Emotion Recognition Method for Game Evaluation Based on Electroencephalogram. IEEE Transactions on Affective Computing, 10, 598.

Fu, E., Li, X., Yao, Z., Ren, Y. X., Wu, Y. H., and Fan, Q. Q. (2021). Personnel Emotion Recognition Model for Internet of Vehicles Security Monitoring in Community Public Space. EURASIP Journal on Advances in Signal Processing, 2021, 81. https://doi.org/10.1186/s13634-021-00789-5

Hu, H., Wan, Y., Tang, K. Y., Li, Q., and Wang, X. (2025). Affective-Computing-Driven Personalized Display of Cultural Information for Commercial Heritage Architecture. Applied Sciences, 15, 2076–3417. https://doi.org/10.3390/app15073459

Kashyap, S. V., Purohit, S., Kumar, D. A., Jawaid, F. I. M., Kumar, J. R. R., and Ajani, S. N. (2025, December). Visual Storytelling and Explainable Intelligence in Organizational Change Communication. ShodhKosh Journal of Visual and Performing Arts, 6(5s), 696–707. https://doi.org/10.29121/shodhkosh.v6.i5s.2025.6965

Oh, G., Ryu, J., Jeong, E., Yang, J. H., Hwang, S., Lee, S., and Lim, S. (2021). DRER: Deep Learning-Based Driver’s Real Emotion Recognizer. Sensors, 21, 2166. https://doi.org/10.3390/s21062166

Patil, S. S., and Rosemaro, E. (2025, March). Cognitive Computing: Integrating Reasoning and Learning in Intelligent Systems. International Journal of Advanced Computer Engineering and Communication Technology (IJACECT), 13(1), 9–13. https://doi.org/10.65521/ijacect.v13i1.59

Wei, C., Chen, L.-L., Song, Z.-Z., Lou, X.-G., and Li, D.-D. (2020). EEG-Based Emotion Recognition Using Simple Recurrent Units Network and Ensemble Learning. Biomedical Signal Processing and Control, 58, 101756. https://doi.org/10.1016/j.bspc.2019.101756

Xu, G., Ren, T., Chen, Y., and Che, W. (2020). A One-Dimensional CNN-LSTM Model for Epileptic Seizure Recognition Using EEG Signal Analysis. Frontiers in Neuroscience, 14, 1253. https://doi.org/10.3389/fnins.2020.578126

Yan, D. N., Zhao, J., Ma, Y. M., and Ma, H. (2025). The Influence of Neuroticism Personality Trait on User Interaction with Game-Based Hand Rehabilitation Training. Displays, 87, 102944. https://doi.org/10.1016/j.displa.2024.102944

Yan, Z., Lim, C. K., Halim, S. A., Ahmed, M. F., Tan, K. L., and Li, L. (2025). Digital Sustainability of Heritage: Exploring Indicators Affecting the Effectiveness of Digital Dissemination of Intangible Cultural Heritage Through Qualitative Interviews. Sustainability, 17, 1593. https://doi.org/10.3390/su17041593

Yin, Y., Zheng, X., Hu, B., Zhang, Y., and Cui, X. (2020). EEG Emotion Recognition Using Fusion Model of Graph Convolutional Neural Networks and LSTM. Applied Soft Computing, 100, 106954. https://doi.org/10.1016/j.asoc.2020.106954

|

|

This work is licensed under a: Creative Commons Attribution 4.0 International License

This work is licensed under a: Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.