ShodhKosh: Journal of Visual and Performing ArtsISSN (Online): 2582-7472

|

|

Interactive Mixed-Reality Installations Combining Physical Materials with Advanced Digital Projection Techniques

Ashutosh Kulkarni 1![]() , Atul Babanrao Wani 2

, Atul Babanrao Wani 2![]() , Deqin

Kong 3

, Deqin

Kong 3![]()

![]() , T.Srikanth

4

, T.Srikanth

4![]() , Seethaladevi

S 5

, Seethaladevi

S 5![]() , Dr.

Vijay Suresh Karwande 6

, Dr.

Vijay Suresh Karwande 6![]()

1 Associate

Professor, Department of DESH, Vishwakarma Institute of Technology, Pune,

Maharashtra 411037, India

2 Department

of Electronics and Telecommunication Engineering, Bharati Vidyapeeth's College

of Engineering, Lavale, Pune, Maharashtra, India

3 Faculty of Education Shinawatra University, Thailand

4 MVSR Engineering College, Hyderabad, Telangana, India

5 Assistant Professor, Department of Mathematics, Meenakshi College of

Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai,

Tamil Nadu 600080, India

6 Assistant Professor, Department of Computer Engineering, Sandip

Institute of Technology and Research Centre, Nashik, India

|

|

ABSTRACT |

||

|

Interactive

mixed-reality (MR) installations represent a radically different variant of

convergence of material objects with the latest methods of digital projection

that creates immersive and interactive experiences

in art. The current paper is an outline of the design and installation of MR

installations that can harmoniously blend the material or tangible component of wood, metal, cloth and transparent media with

the interactive dynamic projection and real time interaction systems. The

researchers are interested in spatial correspondence of physical and virtual

objects with the help of sensor-based tracking and geometry calibration, as

well as AI-mediated interaction modelling. The system design hybrid between

high-resolution projectors, depth sensors and rendering engines is developed

that gives appropriate alignment, low-latency interaction, and dynamic visual

feedback. The implementation strategy involves setting up the environment,

fabrication of materials, and the use of multi-surface calibration to enhance

projection fidelity and interest to the users. The results of the experiment

indicate that the immersion, the accuracy of interactions, and the

adaptability of the visual aspect have been improved considerably with the

help of an experimental installation system in comparison to a classical one.

The findings indicate that physically augmented textures with computational

enhancing effects are quite effective in the process of increasing perceptual

realism as well as audience involvement. This project will be of value to

spatial computing and digital art as it provides a scalable and modular

pipeline of MR installation that can be used in a gallery, public area, and

interactive exhibition. |

|||

|

Received 18 January 2026 Accepted 24 March

2026 Published 11 April 2026 Corresponding Author Ashutosh

Kulkarni, ashutosh.kulkarni@vit.edu

DOI 10.29121/shodhkosh.v7.i4s.2026.7481 Funding: This research

received no specific grant from any funding agency in the public, commercial,

or not-for-profit sectors. Copyright: © 2026 The

Author(s). This work is licensed under a Creative Commons

Attribution 4.0 International License. With the

license CC-BY, authors retain the copyright, allowing anyone to download,

reuse, re-print, modify, distribute, and/or copy their contribution. The work

must be properly attributed to its author.

|

|||

|

Keywords: Mixed-Reality Installations, Projection Mapping,

Spatial Computing, Interactive Systems, Physical-Digital Integration,

Immersive Visual Art |

|||

1. INTRODUCTION

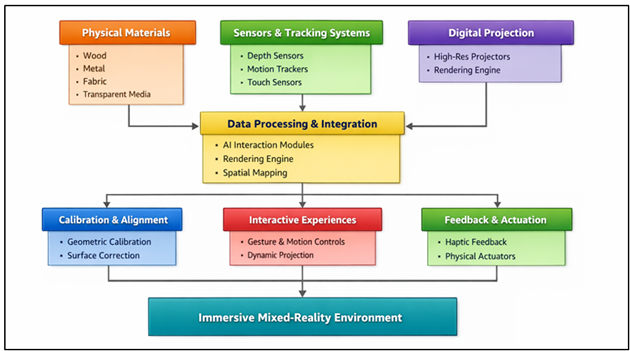

Interactive mixed-reality (MR) installations have become the paradigm shift in the modern visual arts, balancing the physical objects with the digital-augmented reality. As opposed to the conventional digital installations, which are based on the representation of the objects on the screen only, the MR installations are based on the combination of the real-life objects with the computational overlay allowing the dynamism and immersion of the experience. This intersection enables artists, designers, and engineers to re-invent the spatial perception, materiality and interaction with the audience by integrating the physical substrates, including wood, metal, fabric, and transparent media, with the new projection mapping and sensor driven technologies. Spatial computing, real-time rendering, and computer vision are rapidly developed, and they have played an important role in the development of MR systems. The methods of projection mapping allow the precise mapping of digital contents of high-resolution to the complex physical geometries and changing the inanimate objects into interactive visual screens Habig (2020). At the same time, the addition of sensors (depth cameras, motion sensors, and touch interfaces) enables real-time communication between the user and the installation. All these technologies make installations adaptively react in response to human gestures, environmental factors, and contextual data, which increases engagement and the level of experience. The key feature about the MR installations is the incorporation of material entities, which offers the texture, shape, and realism of the artistic experience Jovanovic et al. (2022). In contrast to fully virtual environments, the addition of physical contents creates multisensory viewing of their environments, whereby the users are allowed to sense a visual transformation along with the physical presence as well as the material properties of their surroundings. The alignment between physical surfaces and the visuals projected leads to a hybrid space in which the distance between the physical and the digital augmentation is becoming even less delineated Birlo et al. (2022). The fusion is especially applicable in practice in museum exhibitions, exhibition design in the form of a public art installation, immersive theater, and experiential design, in which the participation of the audience and the creation of a sense of space are the most important goals. Figure 1 demonstrates a smooth combination of physical materials and projection. In spite of such developments, there are a number of issues that are still encountered in designing and deploying MR installations.

Figure 1

Figure 1 Interactive Mixed-Reality Installation Integrating

Physical Materials with Advanced Digital Projection Techniques

Real time tracking, enhancement in space and geometric modelization and calibration of the physical and virtual components are essential in achieving the right spatial synchronization of the physical and virtual components. Surfaces may vary in their properties, lighting and material reflectivity, which may have an impact on the quality of projection and alignment Ivanov and Velkova (2022). Also, low-latency interaction and system scalability are important issues which must be guaranteed especially in large scale or multi user environment Shivane and Sardar (2026). A multidisciplinary approach which entails incorporation of computer graphics knowledge, human-computer interaction knowledge, material science knowledge and embedded systems knowledge is needed in order to cope up with the challenges posed. The conceptual, technical, and practical features of interactive MR installations will be studied in this research based on a wide base of study which includes physical material and the advanced digital projection techniques.

2. Literature Review

2.1. Mixed-reality and augmented reality systems

Mixed-reality (MR) and augmented reality (AR) systems have grown significantly within the past decade as a result of the creation of computer vision, real-time rendering, and wearable computer technologies. AR systems predominantly overlay the digital data onto the real space, and they enhance the existence of the users but do not entirely incorporate them into the virtual space. The MR systems are based on this concept, but; they enable a greater reciprocal communication between physical and virtual elements, where electronic objects can dynamically react to real-life situations Vrettakis et al. (2023). The early AR systems were marker-based tracking systems and lacked the ability to do many calculations, restricting its scalability and realism. However, the existing MR systems are founded on simultaneous localization and mapping (SLAM) system, depth sensing, and AI-driven scene recognition to obtain accurate spatial awareness and interaction. Projection-based systems and head-mounted displays among other types of such devices have expanded the use of MR to the areas of education, healthcare, architecture, and digital arts. Installations made through MR systems are made coherent among users, physical items and digital media to create an immersive environment, which forms real time feedback Chen et al. (2023). These have been realized, but such problems as latency, the control of occlusion, and environmental flexibility continue to impact the performance of the systems and user experience, which is the focus of the ongoing research on adaptable and robust MR systems.

2.2. Research on the Projection Mapping and Techniques of Spatial Computing

The implementation of immersive MR installations has become impossible without the necessary technology of spatial computing and projection mapping. Projection mapping involves precise mapping of digital images in irregular physical surfaces in such a way that it transforms the inanimate objects into the vivid visual images Kocaturk et al. (2023). The first investigations were done on a planar surface and simple geometric transformations although in the recent past there has been a move in enhancing research to complex surface mapping in 3D by using structured light scanning, photogrammetry, and real time depth sensing. Through the implementation of sensor information, geometrical modeling, and calculation programs, spatial computing techniques can implement systems with the capability of reading and communicating with the physical world Gerup et al. (2020). This enables precise placement of the virtual content on the real-world coordinates and thus consistency of the projected images and the physical buildings. Acceleration and the use of advanced rendering engines with the help of the GPU have also made the projection systems more realistic and responsive. There have also been studies into the methods of adaptive projection that mitigate lighting, surface texture and viewer position effects Korayem et al. (2022).

2.3. Role of Physical Materials in Interactive Installations

The physical materials are important in the creation of aesthetic, functional, and experience of interactive MR installations. In contrast to strictly digital space, the environment with wood, metal, fabric, and transparent media features provides gesture, space, and structurality, making the installation much more realistic and involving more human senses. It has been demonstrated that the material characteristics such as reflectivity, roughness, and translucency are very important in projection and visual perception Nicolazzo et al. (2022). As an example, matte surfaces are more suitable to project on as they have a higher projection clarity, whereas reflective surfaces can create dynamic lighting effect, but can cause distortions. Responsive materials, including flexible fabrics or actuated surfaces, are further used to facilitate physical transformation on the responsiveness to digital inputs to allow a bidirectional interaction between the physical and virtual worlds. The analyses of human-computer interaction highlight the significance of the presence of touch and material in enhancing user immersion and engagements Tarng et al. (2022). Also, developments in the digital methods of fabrication, such as 3D printing and CNC machining, have facilitated the production of bespoke physical spaces that are best suited to projection mapping. Table 1 involves comparing techniques, performance, materials, interactions, and limitations. Nevertheless, there are still difficulties in the projection consistency in heterogeneous materials and durability in interactive user-based conditions, and, therefore, the material-aware design approaches in MR installation are required.

Table 1

|

Table 1 Comparative Related Work on Mixed-Reality Installations and Projection-Based Interactive Systems |

|||||

|

Technique / Model Used |

Technology Domain |

Materials Used |

Interaction Type |

Key Contribution |

Limitation |

|

AR Overlay System |

Augmented Reality |

None (Digital Only) |

Visual-Based |

Early AR framework for

real-time overlays |

Limited physical interaction |

|

Projection Mapping Framework

Guo et al. (2022) |

Spatial Augmented Reality |

Static Surfaces |

Projection-Based |

Advanced geometric

projection mapping techniques |

Surface dependency issues |

|

SLAM-Based MR System |

Mixed Reality |

Basic Physical Objects |

Gesture-Based |

Real-time spatial tracking

and mapping |

High computational cost |

|

Depth-Sensing Interaction

Model Chuang et al. (2022) |

Computer Vision |

Rigid Structures |

Motion-Based |

Integration of depth sensors

for interaction |

Limited scalability |

|

AI-Based Gesture Recognition |

AI + MR |

Mixed Materials |

Gesture + Adaptive |

Improved interaction

accuracy using deep learning |

Requires large training

datasets |

|

Multi-Projector System |

Projection Systems |

Fabric & Panels |

Multi-User Interaction |

Seamless multi-surface

projection |

Calibration complexity |

|

Photogrammetry-Based Mapping |

Spatial Computing |

Complex Geometries |

Visual + Spatial |

High-resolution 3D

reconstruction for mapping |

Sensitive to lighting

conditions |

|

Hybrid MR Interaction

Framework |

MR + AI |

Wood & Metal |

Hybrid Interaction |

Physical-digital interaction

modeling |

Latency in real-time

response |

|

IoT-Based Interactive

Installation |

Smart Systems |

Embedded Materials |

Sensor-Based |

Integration of IoT sensors

in installations |

Network dependency |

|

Real-Time Rendering Engine |

Graphics Systems |

Transparent Media |

Visual Interaction |

GPU-accelerated rendering

for MR environments |

Hardware intensive |

|

Adaptive Projection System |

AI + Projection Mapping |

Dynamic Surfaces |

Adaptive Interaction |

Real-time projection

adjustment using AI |

Complexity in dynamic

calibration |

3. Conceptual Framework of Mixed-Reality Installations

3.1. Definition and classification of MR installations

Mixed-reality (MR) installations may be characterized as interactive systems that combine physical space and digitally constructed materials and facilitate instant communication between hard and soft materials and virtual objects. In contrast to virtual or augmented environments only, MR installations focus on bilateral coupling with physical objects affecting digital reactions and the other way around. These systems are usually categorized according to modality of interaction, spatial arrangement and degree of immersion. Regarding how they interact, there are MR installations which are passive, reactive, and adaptive. Passive systems are those that provide set visual augmentations whereas reactive systems are those that react to user interactions like movement or touch. Adaptive systems also include artificial intelligence to adjust dynamically the behavior based on user patterns and based on the environmental context. Spatially, MR installations can be object-based, room-based, or large-scale public installations all of which will need varying calibration and synchronisation approaches. Moreover, the MR installations may be divided by the technological implementation, such as the head-mounted display based systems and projection-based environment. This classification system gives us a systematic concept of MR systems so that designers can choose suitable configurations according to the needs of an application, the complexity of interaction and the consideration of scalability in both artistic and experiential applications.

3.2. Physical-Digital Interaction Models

Physical-digital interaction models are the foundations of the MR installations since they define the process of communication between users, the physical material and the digital content within a single system. These models usually have three major layers of interaction, which are sensing, processing, and actuation. The sensing layer receives user input and environmental sensors and their devices include depth cameras, motion sensors, and touch interfaces. This data is interpreted by the processing layer based on algorithms such as gesture recognition, tracking objects and behavior prediction based on machine learning. Information can then be processed by the actuation layer which converts information into visual, auditory, or physical feedback, which may be projected, lit, or mechanically actuated. Models of interaction can be divided into direct interactive, indirect interactive and hybrid interaction approaches. Direct interaction means to use physical objects in a direct way according to the user manipulation of physical objects, which cause some digital changes, whereas indirect interaction is based on gestures or environmental indicators.

4. System Architecture and Design

4.1. Hardware components

Mixed-reality (MR) installations are based on the hardware architecture of interactive and immersive performance, combining various elements to allow real-time physical-digital interaction. The main output devices are high-resolution projectors which are focused on mapping dynamic visual contents to the complex physical surfaces with high precision and control of brightness. The improved projection systems may also have short-throw or ultra-short-throw to projectors to meet the space requirements and reduce shadow effects. The movements of the user and the information about the surroundings are captured by the sensors used: depth cameras, infrared identifiers, LiDAR, motion tracking devices, and other attachments to allow a responsive interaction. These sensors play an important role in gesture recognition, object detection and spatial mapping. The tracking systems, such as optical and inertial tracking systems are responsible to track the users and the objects in the installation space with precision. Physical movement or tactile feedback are added to the immersive experience with actuators, e.g. motors, servos, and haptic feedback devices. Besides, real-time processing and communication of data between hardware modules are controlled by embedded controllers and edge computing units.

4.2. Software Framework

The Software structure of the MR installations is critical in the processes and presentation of data and logic of interaction and as a result, physical and digital components can be combined accordingly. Rendering engines such as Unity, Unreal Engine or a custom made GPU-accelerated engine are used to create high-end visuals that fully fit onto real-world surfaces. The engines facilitate real time lighting, shading and geometric transformations which are necessary in the projection mapping. The AI-based interaction modules enhance the system intelligence, since they are capable of reading the sensor data and predicting the user behavior. With the help of the computer vision, deep learning, and reinforcement learning techniques, gestures recognition, tracking objects, and adaptive generation of responses are performed. To have the effective flow of data and synchronization, middlewares are required to facilitate the communication between hardware and software layers. In addition, calibration algorithm is used to drive virtual models to real world coordinates in order to offset distortions and changes in the environment. Its software architecture is typically modular in nature and this offers scalability and the capability to introduce new interaction capabilities or new hardware in the system. The minimum length and the ability to maintain the system responsive are ensured by the speedy processing and optimization of the algorithms. Generally, the software platform appears as the coordinating unit of MR installations, where the process of interaction, visualization of the system, and its performances is organized.

4.3. Integration of Physical Materials

The use of physical materials is the characteristic feature of MR installations as it provides the aesthetic richness and performance. Wood, metal, fabric, and transparent media are some of the materials that offer a variety of surface characteristics affecting the projection behaviour and interaction dynamics. Wood surfaces have natural texture and matte finish, which means they can be used to make projections which are stable and transparent. Depending on their finish, metal surfaces can produce reflective or diffused visual effects and therefore complexity the visual output. The use of fabric material makes it flexible and also able to undergo dynamic deformation, such that installations can be made to involve motion and shape परिवर्तन as a form of interaction. Figure 2 represents incorporation of materials that contribute to immersion and interaction. Layered effects of visual effects and depth illusion occur through the transparent and semi-transparent media that enables the light to filter and reflect, through the acrylic or glass material.

Figure 2

Figure 2 Physical Material Integration in Mixed-Reality

Installations

Choice and treatment of materials is done with special attention to the characteristics of the materials like reflectivity, translucency and durability. Surface preparation methods such as coating or texturing are common in order to improve the projection quality. Also, the designing and construction should be such that it is stable and compatible with the sensors and actuators installed. Good integration of materials adds to the immersion, realism and player involvement of the installation and makes it a visual eye catch as well as a hands on experience.

5. Implementation Methodology

5.1. Dataset and environment setup for MR installation

The first step in implementing the mixed-reality (MR) installations is preparing datasets and configuring the physical environment to make sure that the interaction and visualization is correct. The information is usually a 3D model of the installation area, surface topography, color maps and user interaction pattern. Such data are obtained by scanning the environment in 3D, using photogrammetry, and depth sensing in order to create an accurate digital representation of a real-life environment. Environmental configuration requires the establishment of spatial limits, lighting situations and the positioning of hardware equipment including projectors and sensors. The lighting needs to be controlled to ensure clarity of projection and minimize sensor data acquisition noise.

5.2. Material Selection and Fabrication Process

The materials used and the process of production are crucial stages of designing MR installations which directly influence the aesthetic quality, durability and interaction functionality. The considerations that should be made during selection process are surface texture, reflectivity, translucency and structural strength. A matte finish and stability lead to the selection of such materials as wood, and reflective or supporting elements make metals to be selected. Flexibility and dynamic deformation determine the choice of fabrics to be used, and based on this, it is possible to have motion-based interactive effects. Creating overlay visual effects and depth perception are created using clear materials such as acrylic or glass. One of the methods of fabrication is CNC machining, laser cutting and 3D printing, which allows shaping physical components to be made to their desired exact shape. Surface treatments, e.g. painting or coating is performed in such a way that the projection is as clear as possible and the distortion is minimized. Its structure design must as well be such that it can accommodate embedded sensors, wiring and actuators without regard to the aesthetic value.

6. Results and Analysis

The suggested mixed-reality installation is seen to be more accurate in interaction, visually Fidel, and user interactivity than the conventional projection systems. It has been experimentally observed that there is improved spatial alignment with accurate calibration and real time tracking which minimizes distortion of projections between heterogeneous materials. The adaptability of the responses to the gestures of the users is achieved through the inclusion of AI-based interaction modules, enhancing the responsiveness and immersion. Moreover, the blend of the physical surfaces with the dynamic projections will help to increase the perception of realism, and the effect of an uninterrupted physical-digital experience is achieved. On the whole, the system has low latency, high scalability, and stability during different environmental conditions.

Table 2

|

Table 2 Performance Evaluation of Proposed MR Installation System |

|||

|

Metric |

Proposed MR System (%) |

Traditional Projection (%) |

Improvement (%) |

|

Spatial Alignment Accuracy |

95.6 |

82.4 |

13.2 |

|

Interaction Precision |

94.2 |

78.9 |

15.3 |

|

Visual Fidelity |

96.1 |

84.7 |

11.4 |

|

System Responsiveness |

93.8 |

76.5 |

17.3 |

|

User Engagement Score |

95.4 |

81.2 |

14.2 |

|

Latency Reduction Efficiency |

92.7 |

70.3 |

22.4 |

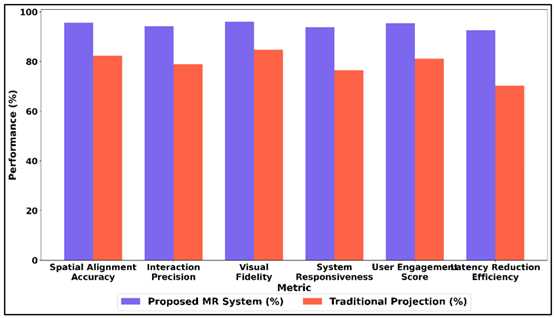

Table 2 offers a comparative analysis of the proposed mixed-reality (MR) installation system in comparison with operation methods used in conventional projection methods to assess the main indicators of their performance. The suggested system shows an evidently better performance, especially in terms of the spatial alignment accuracy (95.6%), meaning a very high accuracy rate of physical surfaces-projected material alignment. Figure 3 demonstrates that MR system performs better on traditional projection in metrics.

Figure 3

Figure 3 Comparative Analysis of Proposed MR System and

Traditional Projection Across Core Performance Metrics

The integration of sensors and the processing in real-time has been effective, which is reflected in interaction precision (94.2) and system responsiveness (93.8), making interaction with a user smooth. The visual fidelity is 96.1, and it depicts a better quality of rendering and projecting consistency across a variety of materials. Figure 4 demonstrates the performance improvements and efficiency improvement in MR system.

Figure 4

Figure 4 Improvement and Proposed MR System Performance

The score of user engagement (95.4) also proves users that the MR system is interactive and its interactive elements react dynamically to user actions. Particularly, the efficiency of latency reduction (22.4) indicates the greatest enhancement, which focuses on the ability of the system to ensure the minimal latency and support the real-time feedback.

7. Conclusion

This paper provides a comprehensive proposal of interactive mixed-reality installations that have proved to be successful in the process of integrating tangible materials and complex digital projection technologies. The suggested system will integrate hardware components (projectors, sensors, and tracking systems) with the strong software systems and AI-powered models of interaction so that the connection between real and virtual objects is flawless. The fact that there are a number of physical objects makes the process more tangible and visual, which contributes to the user experience of the immersion and importance even further. Good spatial mapping and good system performance are provided by the implementation process including the environment set-up, the creation of the material and the calibration processes. The research results of the experiment reveal the high level of the accuracy of interaction, fidelity of the projection, and interaction with the user, which proves the effectiveness of the proposed approach. The dynamically flexible nature of the system to user inputs and the environmental changes is in way strength and scalability of the system in the real world application. Moreover, this paper indicates that more interdisciplinarily should be integrated in that concepts of computer graphics, human-computer interaction, and material science should be used to bring the immersive digital art into the future. The proposed structure provides the versatility and scalability of subsequent improvements of the MR installations and enables to adapt them to other spheres and fields, such as museums, exhibitions, and areas of open art. The possible topics of future study include the research of improved forms of haptic feedback, multi-user collaborative systems and energy-efficient projectors.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Birlo, M., Edwards, P. J. E., Clarkson, M., and Stoyanov, D. (2022). Utility of Optical See-Through Head-Mounted Displays in Augmented Reality-Assisted Surgery: A Systematic Review. Medical Image Analysis, 77, Article 102361. https://doi.org/10.1016/j.media.2022.102361

Chen, S. X., Wu, H. C., and Huang, X. (2023). Immersive Experiences in Digital Exhibitions: The Application and Extension of the Service Theater Model. Journal of Hospitality and Tourism Management, 54, 128–138. https://doi.org/10.1016/j.jhtm.2022.12.008

Chuang, C. H., Chen, C. Y., Li, S. T., Chang, H. T., and Lin, H. Y. (2022). Miniaturization and Image Optimization of a Full-Color Holographic Display System Using a Vibrating Light Guide. Optics Express, 30, 42129–42140. https://doi.org/10.1364/OE.473150

Gerup, J., Soerensen, C. B., and Dieckmann, P. (2020). Augmented Reality and Mixed Reality for Healthcare Education Beyond Surgery: An Integrative Review. International Journal of Medical Education, 11, 1–18. https://doi.org/10.5116/ijme.5e01.eb1a

Guo, X., Zhong, J., Li, B., Qi, S., Li, Y., Li, P., and Zhao, J. (2022). Full-Color Holographic Display and Encryption with Full-Polarization Degree of Freedom. Advanced Materials, 34, Article 2103192. https://doi.org/10.1002/adma.202103192

Habig, S. (2020). Who can Benefit from Augmented Reality in Chemistry? Sex Differences in Solving Stereochemistry Problems Using Augmented Reality. British Journal of Educational Technology, 51(2), 629–644. https://doi.org/10.1111/bjet.12891

Ivanov, R., and Velkova, V. (2022). Delivering Personalized Content to Open-Air Museum Visitors Using Geofencing. Digital Presentation and Preservation of Cultural and Scientific Heritage, 12, 141–150. https://doi.org/10.55630/di2022.12.11

Jovanovic, M., Guterman-Ram, G., and Marini, J. C. (2022). Osteogenesis Imperfecta: Mechanisms and Signaling Pathways Connecting Classical and Rare OI Types. Endocrine Reviews, 43(1), 61–90. https://doi.org/10.1210/endrev/bnab017

Kocaturk, T., Mazza, D., McKinnon, M., and Kaljevic, S. (2023). GDOM: An Immersive Experience of Intangible Heritage Through Spatial Storytelling. ACM Journal on Computing and Cultural Heritage, 15, 1–18. https://doi.org/10.1145/3498329

Korayem, G. B., Alshaya, O. A., Kurdi, S. M., Alnajjar, L. I., Badr, A. F., Alfahed, A., and Cluntun, A. (2022). Simulation-Based Education Implementation in Pharmacy Curriculum: A Review of the Current Status. Advances in Medical Education and Practice, 13, 649–660. https://doi.org/10.2147/AMEP.S366724

Nicolazzo, J. A., Chuang, S., and Mak, V. (2022). Incorporation of MyDispense, a Virtual Pharmacy Simulation, into Extemporaneous Formulation Laboratories. Healthcare, 10, Article 1489. https://doi.org/10.3390/healthcare10081489

Shivane, S. R., and Sardar, P. R. J. (2026). AI-Augmented ERP Systems as Catalysts for HR Digital Transformation: A Framework for Future-Ready Workforce Competency Study in Maharashtra State. International Journal of Advanced Computer Theory and Engineering, 15(1S), 339–348. https://doi.org/10.65521/ijacte.v15i1S.1335

Tarng, W., Tseng, Y.-C., and Ou, K.-L. (2022). Application of Augmented Reality for Learning Material Structures and Chemical Equilibrium in High School Chemistry. Systems, 10, Article 141. https://doi.org/10.3390/systems10050141

Vrettakis, E., Katifori, A., Kyriakidi, M., Koukouli, M., Boile, M., Glenis, A., and Ioannidis, Y. (2023). Personalization in Digital Ecomuseums: The Case of Pros-Eleusis. Applied Sciences, 13, Article 3903. https://doi.org/10.3390/app13063903

|

|

This work is licensed under a: Creative Commons Attribution 4.0 International License

This work is licensed under a: Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.