ShodhKosh: Journal of Visual and Performing Arts ISSN (Online): 2582-7472

Multi-Sensor Fusion Technology for Creating Immersive Outdoor Visual Art Experiences

Arpita A. Prajapati 1, Ponmurugan Panneerselvam 2, Bipin Sule 3, Dr. Sahaya Anselin Nisha A. 4, Debanjan Ghosh 5, Mridula Gupta 6, Asha Rani G. 7

1 Lecturer, Faculty of Engineering, Gokul Global University, Sidhpur, Gujarat, India

2 Professor, Department of Research, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

3 Senior Professor, Department of DESH, Vishwakarma Institute of Technology, Pune, Maharashtra 411037, India

4 Professor, Department of Electronics and Communication Engineering, Sathyabama Institute of Science and Technology, Chennai, Tamil Nadu, India

5 Assistant Professor, Department of Computer Science and IT, Arka Jain University, Jamshedpur, Jharkhand, India

6 Centre of Research Impact and Outcome, Chitkara University, Rajpura-140417, Punjab, India

7 Assistant Professor, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

ABSTRACT

Multi-sensor fusion technology has become a groundbreaking methodology for developing immersive outdoor visual art experiences by combining heterogeneous data into adaptive art systems. This paper introduces a general multi-sensor fusion-based art system that uses visual (RGB-D cameras), motion (IMU), spatial (LiDAR), environmental (temperature, humidity, light), and biometric sensors to support dynamic, context-responsive artistic interactions in outdoor settings. The proposed methodology uses multi-modal feature extraction to capture visual patterns, motion dynamics, and environmental changes, and then applies a hybrid fusion algorithm that combines deep learning architectures with statistical models to improve data synthesis and meet real-time responsiveness requirements. A time-aware acquisition unit synchronizes the sensor streams, improving system reliability across different outdoor application tasks. The experimental assessment shows that the system is considerably more accurate, responsive, and engaging for users than single-sensor and baseline systems. The findings point to improved spatial awareness, adaptive content creation, and interaction fidelity as parts of an increasingly immersive and user-centered artistic experience.

Received 14 January 2026

Accepted 06 March 2026

Published 11 April 2026

Corresponding Author: Arpita A. Prajapati, aaprajapati.ce.hcet@gokuluniversity.ac.in

DOI: 10.29121/shodhkosh.v7.i4s.2026.7480

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Multi-Sensor Fusion, Immersive Art Systems, Outdoor Interactive Installations, Multimodal Data Processing, Creative Computing

1. INTRODUCTION

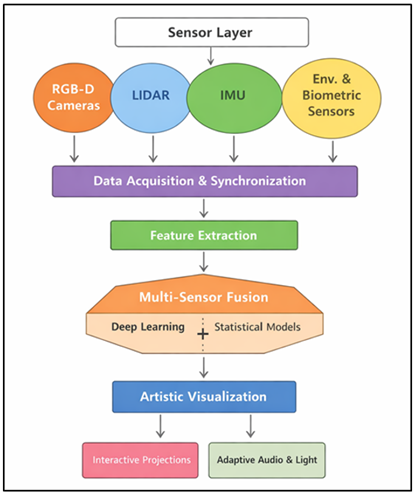

The convergence of digital technology and artistic expression has dramatically changed the landscape of contemporary visual art, producing immersive, interactive aesthetics that work outside the gallery space. Outdoor visual art installations in particular have become increasingly popular because they can engage diverse audiences in open spaces, combining nature with computational creativity. Such systems, however, pose their own design challenges because of dynamic environmental factors, variability in user interaction, and the need for real-time adaptability. Multi-sensor fusion technology can help address these issues, as it allows heterogeneous data to be combined into responsive, context-aware artistic experiences. Multi-sensor fusion is the process of fusing data collected by several sensing modalities, including visual, motion, spatial, environmental, and biometric sensors, to obtain better, more reliable, and more complete information than a single sensor could provide Dai and Zheng (2021). In immersive outdoor art, this approach lets systems perceive complex real-world circumstances, such as changes in lighting, crowd movement, weather, and user emotions, and adapt the visual content dynamically. For example, LiDAR sensors provide high-resolution spatial mapping, RGB-D cameras record depth-enhanced visual data, inertial measurement units (IMUs) record motion and orientation, and environmental sensors record ambient temperature, humidity, and light intensity. Combining these data streams gives a comprehensive view of the environment and of user interaction, and forms the basis of intelligent artistic output Xu et al. (2020). Figure 1 shows the integrated sensors that enable adaptive immersive outdoor art experiences.

Multi-sensor data integration is especially important in outdoor environments, where uncontrolled variables can greatly degrade system performance.

Figure 1 Multi-Sensor Fusion Framework for Immersive Outdoor Visual Art Experiences

Outdoor settings differ from indoor ones in that they have varying lighting conditions, occlusions, and unpredictable crowds. Classic single-sensor systems are not robust under these conditions, so the quality of interaction and immersion may suffer. Multi-sensor fusion overcomes these limitations by exploiting complementary sensor properties to increase redundancy and noise robustness Howes (2019). Consequently, the system can sustain high accuracy and responsiveness, which supports smooth interaction between users and the artistic environment. Recent developments in artificial intelligence, especially in deep learning and multimodal data processing, have further extended what sensor fusion systems can do. Hybrid architectures based on convolutional neural networks (CNNs) and recurrent neural networks (RNNs), as well as transformer-based architectures, allow features to be extracted and integrated efficiently across multiple data modalities. In addition, statistical tools, including Bayesian inference and Kalman filtering, help to maintain data consistency and manage uncertainty Spence (2020).

2. Related Work

2.1. Overview of Immersive Outdoor Art Systems

Immersive outdoor art systems are a convergence of digital media, environment-driven design, and viewer-centered interaction, in which public spaces are transformed into dynamic experience platforms. In contrast to traditional installations, which are confined to indoor galleries, outdoor systems use large-scale projection, augmented reality (AR), spatial audio, and interactive lighting to produce immersive multisensory spaces Sun and Chen (2024). These systems are commonly deployed in city plazas, gardens, heritage sites, and festivals, where they are exposed to natural factors such as terrain, weather, and ambient light. Early applications were based mostly on projection mapping and pre-programmed animation and offered little flexibility for real-time user input. Recent developments, however, include interactive models that react to user movement, crowd size, and real-time weather changes to increase immersion and customization. Technologies such as real-time rendering engines, edge computing, and wireless sensor networks have made deployment at scale possible Ullo and Sinha (2020). Despite these improvements, robustness, synchronization, and responsiveness remain problematic in dynamic outdoor settings, which motivates combining intelligent sensing with adaptive computational models.

2.2. Sensor Technologies in Interactive Installations

Sensor technologies form the bottom layer of interactive installations, allowing interactive systems to sense and interpret user behavior and the surrounding environment. LiDAR sensors provide high-resolution spatial mapping and depth information, which enables object detection, crowd tracking, and terrain mapping in outdoor environments Chen et al. (2024). RGB-D cameras combine color and depth measurement and support gesture recognition, body-movement tracking, and scene understanding. Inertial Measurement Units (IMUs), which include accelerometers and gyroscopes, record movement dynamics, orientation, and user interactions with wearable or handheld devices. Environmental sensors, such as temperature, humidity, light-intensity, and air-quality sensors, add contextual information that can shape the creative output, e.g. changing images according to the weather or ambient conditions Kenwright (2020). Combining these sensors makes the system more aware of its surroundings, but it also raises challenges of data heterogeneity, synchronization, and noise. Using these technologies effectively requires robust calibration, real-time processing, and inter-module communication across the distributed sensing nodes of the installation.

2.3. Multi-Sensor Fusion Techniques in Creative Computing

In creative computing, multi-sensor fusion algorithms seek to combine diverse data streams into consistent representations that can drive adaptive and interactive art. Classic fusion strategies use probabilistic models, such as Bayesian inference, Kalman filtering, and particle filtering, which give reliable state estimation and reduce noise. These are especially effective for aligning temporal and spatial information from different sensors Vidya (2025). More recently, fusion methods built on deep learning have become popular, with convolutional neural networks (CNNs) used for visual representation, recurrent neural networks (RNNs) for temporal representation, and transformers for multimodal representation. Hybrid models integrate statistical models with deep learning to improve accuracy, scalability, and real-time performance. In creative computing, these methods allow systems to interpret complex interactions, e.g. using gesture, motion, and environmental signals to create responsive visual art Chen et al. (2024). Table 1 compares related work by application domain, sensors, fusion technique, key methodology, environment, and limitations. Despite considerable progress, handling high-dimensional data, achieving low latency, and preserving interpretability in artistic use remain open problems.

Table 1 Comparative Related Work on Multi-Sensor Fusion and Immersive Outdoor Visual Art Systems

| Application Domain | Sensors Used | Fusion Technique | Key Methodology | Dataset / Environment | Limitations |
|---|---|---|---|---|---|
| Outdoor AR Art Installations | RGB-D, IMU | Kalman Filter | Real-time motion tracking | Urban outdoor space | Sensitive to lighting changes |
| Interactive Public Displays Hatami et al. (2024) | Camera, Environmental | Bayesian Fusion | Context-aware rendering | Smart city environment | Limited scalability |
| Projection Mapping Art | LiDAR, RGB Camera | CNN-based Fusion | Depth-enhanced mapping | Festival installations | High computational cost |
| Immersive Light Installations | Light, Temperature Sensors | Statistical Fusion | Adaptive lighting control | Outdoor parks | Low spatial awareness |
| Gesture-Based Art Systems Khetani et al. (2023) | RGB-D, IMU | RNN-based Fusion | Gesture recognition | Controlled outdoor setup | Limited environmental adaptation |
| Smart Art Environments | Multi-sensor (LiDAR, IMU, Env.) | Hybrid Fusion | Context-aware interaction | Public installations | Complex synchronization |
| AR/VR Outdoor Systems Tang et al. (2022) | GPS, Camera, IMU | Sensor Fusion Filter | Spatial localization | Large-scale outdoor | GPS noise issues |
| Interactive Digital Art | RGB, Biometric Sensors | Deep Learning Fusion | Emotion-aware visuals | Experimental setup | Privacy concerns |
| Urban Interactive Media | LiDAR, Camera | Particle Filter | Crowd interaction modeling | Smart cities | High energy consumption |
| Environmental Art Systems Tang et al. (2021) | Humidity, Temp., Light | Rule-based Fusion | Weather-adaptive art | Outdoor installations | Low adaptability |
| Immersive Installations | Multi-modal Sensors | Transformer Fusion | Multimodal integration | Public exhibitions | High training complexity |
| AI-driven Art Platforms | RGB-D, IMU, Env., Biometric | Hybrid DL + Statistical | Real-time adaptive system | Outdoor experimental testbed | Computational overhead |

3. System Architecture for Multi-Sensor Fusion-Based Art Platform

3.1. Overall Framework Design

The multi-sensor fusion-based art platform follows a layered, modular architecture designed to be scalable, flexible, and capable of real-time operation in dynamic outdoor settings. The system is structured into four major layers: the sensor layer, the data acquisition and preprocessing layer, the fusion and intelligence layer, and the visualization/output layer. The sensor layer records multimodal data from various sources and sends it to the acquisition module, where it is preprocessed, filtered, and synchronized. The fusion layer synthesizes this information with hybrid computational models to interpret user interactions and environmental context. Lastly, the visualization layer dynamically generates artistic output through projection systems, LED displays, or AR interfaces. Edge computing components are included to reduce latency and provide real-time responsiveness, while cloud-based modules handle large-scale data storage and model updates. The framework also includes communication protocols for data exchange between distributed nodes. The modular design allows new sensors and algorithms to be integrated easily, so the system can evolve continuously while providing a robust and immersive outdoor visual art experience.
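The synchronization step in the acquisition layer can be illustrated with a minimal sketch. The paper does not specify how streams are aligned, so the nearest-timestamp matching below, the function names `nearest_sample` and `synchronize`, the 50 ms tolerance `max_gap`, and the sample data are all assumptions made for illustration.

```python
from bisect import bisect_left

def nearest_sample(timestamps, values, t, max_gap):
    """Return the value whose timestamp is closest to t, or None if too far away."""
    i = bisect_left(timestamps, t)
    candidates = []
    if i < len(timestamps):
        candidates.append(i)        # first sample at or after t
    if i > 0:
        candidates.append(i - 1)    # last sample before t
    if not candidates:
        return None
    best = min(candidates, key=lambda j: abs(timestamps[j] - t))
    if abs(timestamps[best] - t) > max_gap:
        return None
    return values[best]

def synchronize(streams, reference, max_gap=0.05):
    """Align every stream to the timestamps of the reference stream.

    streams maps a sensor name to (sorted timestamps in seconds, values).
    Frames in which any modality has no sample within max_gap are dropped.
    """
    ref_ts, _ = streams[reference]
    frames = []
    for t in ref_ts:
        frame = {"t": t}
        for name, (ts, vals) in streams.items():
            frame[name] = nearest_sample(ts, vals, t, max_gap)
        if all(frame[name] is not None for name in streams):
            frames.append(frame)
    return frames

# Hypothetical streams with different sampling rates.
streams = {
    "camera": ([0.00, 0.10, 0.20], ["f0", "f1", "f2"]),
    "imu": ([0.00, 0.02, 0.04, 0.08, 0.11, 0.19], [0, 1, 2, 3, 4, 5]),
    "lidar": ([0.01, 0.12, 0.35], ["s0", "s1", "s2"]),
}
frames = synchronize(streams, reference="camera")
# The camera frame at t = 0.20 is dropped: the nearest LiDAR scan is 80 ms away.
```

Using the slowest, most informative stream as the reference keeps the fused frame rate predictable; a deployed system might interpolate instead of dropping frames.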

3.2. Sensor Layer (Visual, Motion, Environmental, Biometric Sensors)

The sensor layer is the foundation of the system architecture and is responsible for capturing the data streams that reflect user gestures and ambient conditions. RGB and RGB-D cameras provide high-quality images and depth data for object detection, gesture recognition, and scene understanding. These are supplemented by LiDAR sensors, which provide accurate spatial mapping and distance measurements and thus support accurate modeling of the outdoor environment and crowd dynamics. Motion sensing is realized with Inertial Measurement Units (IMUs), which record the acceleration, orientation, and movement patterns of users, especially through wearable or handheld devices. Environmental sensors track contextual values such as temperature, humidity, ambient light, and air quality so that the system can adjust artistic output to changing outdoor conditions. In addition, biometric sensors, including heart-rate and galvanic skin response sensors, may be introduced to infer users' emotional states and physiological reactions.

4. Proposed Methodology

4.1. Data Collection in Outdoor Environments

Data collection in outdoor settings is intrinsically complicated because conditions are dynamic: illumination changes, weather varies, occlusions occur, and user actions are unpredictable. The proposed approach uses distributed sensing, in which a number of sensor nodes are placed across the installation area to achieve extensive spatial coverage. Each node carries visual sensors (RGB/RGB-D cameras), LiDAR sensors, IMUs, and environmental sensors so that different modalities are captured at the same time. Data is acquired continuously with adaptive sampling rates to balance energy usage against data fidelity. To deal with environmental variability, calibration procedures such as camera intrinsic-extrinsic calibration and LiDAR alignment are carried out regularly. Robust data logging stores raw and processed data for both real-time interaction and offline analysis.
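One way to picture adaptive sampling is a rate that rises when a signal changes quickly and falls when it is static. The paper does not give its sampling policy, so the rule below, the function name `adaptive_rate`, and the parameters `min_hz`, `max_hz`, and `full_activity` are hypothetical choices for illustration only.

```python
def adaptive_rate(recent, min_hz=1.0, max_hz=30.0, full_activity=0.5):
    """Pick a sampling rate (Hz) from how much the signal changed recently.

    recent: the latest readings of one sensor, oldest first.
    full_activity: mean absolute change per sample at which max_hz is used.
    """
    if len(recent) < 2:
        return max_hz  # not enough history yet: sample fast to be safe
    diffs = [abs(b - a) for a, b in zip(recent, recent[1:])]
    activity = sum(diffs) / len(diffs)
    fraction = min(activity / full_activity, 1.0)
    # Linear interpolation between the energy-saving and high-fidelity rates.
    return min_hz + fraction * (max_hz - min_hz)

# A static temperature reading is sampled slowly; a rapidly changing
# signal is sampled at the maximum rate.
idle_rate = adaptive_rate([20.0, 20.0, 20.0])
busy_rate = adaptive_rate([0.0, 1.0, 0.0])
```

The linear mapping is the simplest option; a node with a tight energy budget might prefer a stepped schedule with hysteresis to avoid frequent rate changes.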

4.2. Multi-Modal Feature Extraction

Multi-modal feature extraction converts raw sensor data into meaningful representations that fusion algorithms can use. For visual data, convolutional neural networks (CNNs) derive spatial features (object boundaries, textures, and human poses), and depth information from RGB-D sensors improves three-dimensional scene perception. Signal processing techniques and recurrent neural networks (RNNs) extract motion-related features from IMU data, learning temporal dynamics such as velocity, orientation, and gesture patterns. For environmental data, statistical descriptors and time-series analysis identify contextual patterns such as temperature trends, variations in light intensity, and humidity levels. Feature normalization and dimensionality reduction methods, such as principal component analysis (PCA) and autoencoders, improve computational efficiency and reduce redundancy. Cross-modal feature alignment projects the modalities into a common latent space so that multimodal inputs can be decoded coherently. This unified feature extraction mechanism lets the system capture the complex interaction between users and their environment, and its rich, high-level representations make the immersive art platform more accurate and responsive.
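For the environmental branch, the statistical descriptors and normalization mentioned above can be sketched in a few lines. The specific descriptors (mean, standard deviation, least-squares trend), the function names `describe` and `zscore`, and the sample temperature window are assumptions; the paper does not list the exact features it computes.

```python
from statistics import mean, pstdev

def describe(series, dt=1.0):
    """Mean, standard deviation, and least-squares trend of a sensor window.

    dt is the sampling interval in seconds, so the trend is in units per second.
    """
    times = [i * dt for i in range(len(series))]
    t_bar, y_bar = mean(times), mean(series)
    denom = sum((t - t_bar) ** 2 for t in times)
    if denom:
        slope = sum((t - t_bar) * (y - y_bar) for t, y in zip(times, series)) / denom
    else:
        slope = 0.0  # a single sample has no trend
    return {"mean": y_bar, "std": pstdev(series), "trend": slope}

def zscore(series):
    """Normalize a window to zero mean and unit variance."""
    m, s = mean(series), pstdev(series)
    return [(x - m) / s if s else 0.0 for x in series]

# Hypothetical temperature window (degrees Celsius, one sample per second).
temperature = [20.0, 21.0, 22.0, 23.0]
features = describe(temperature)
normalized = zscore(temperature)
```

Normalizing each modality before fusion keeps a sensor with large numeric values, such as light intensity in lux, from dominating features on smaller scales.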

4.3. Fusion Algorithm Design

The fusion algorithm combines deep learning with statistical models to produce robust, accurate, real-time multi-sensor data fusion. This hybrid approach draws on the strength of neural networks in learning complex nonlinear relationships and the strength of probabilistic models in managing noise and uncertainty. The core building block is a multimodal deep learning model that jointly learns feature representations from visual inputs, captures temporal motion with RNNs or LSTMs, and models cross-modal interactions with transformer-based attention mechanisms.
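The attention mechanism referred to here can be illustrated with plain scaled dot-product attention over per-modality embeddings. This is a simplified sketch rather than the model used in the paper: a transformer would apply learned query, key, and value projections and multiple heads, and the two-dimensional embeddings below are made-up values.

```python
import math

def softmax(xs):
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector.

    Returns the weighted sum of the value vectors and the attention weights.
    """
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, k) / scale for k in keys])
    dim = len(values[0])
    fused = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return fused, weights

# Hypothetical embeddings for the visual, motion, and environmental branches.
embeddings = [[1.0, 0.0], [0.8, 0.6], [0.0, 1.0]]
# The visual embedding queries all modalities, including itself.
fused, weights = attend(embeddings[0], embeddings, embeddings)
```

In this toy example the visual query attends most strongly to itself and least to the environmental embedding, because the weights follow the dot-product similarity.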

Figure 2 Hybrid Deep Learning and Statistical Model-Based Multi-Sensor Fusion Algorithm Architecture

The learned features are then passed through statistical processes such as Kalman filtering and Bayesian inference to enforce temporal consistency and suppress sensor noise. Figure 2 illustrates the hybrid model in which multimodal data are combined for robust fusion. Fusion proceeds hierarchically: low-level features are fused in the initial stages, followed by high-level semantic integration. Adaptive weighting dynamically controls the contribution of each sensor modality based on its reliability and the environmental conditions. For real-time execution, the algorithm is optimized for edge acceleration and parallelization. The hybrid fusion approach enables the system to produce accurate, context-aware outputs that improve the interactivity, immersion, and robustness of outdoor visual art experiences.
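The statistical stage can be sketched under simple assumptions: reliability-based weighting is modeled as inverse-variance weighting, and temporal smoothing as a scalar Kalman filter with a constant-state model. Neither choice is confirmed by the paper; the names `fuse_weighted` and `ScalarKalman` and all numeric values are illustrative.

```python
def fuse_weighted(estimates, variances):
    """Inverse-variance weighting: noisier sensors contribute less.

    Returns the fused estimate and its variance.
    """
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    fused = sum(w * x for w, x in zip(weights, estimates)) / total
    return fused, 1.0 / total

class ScalarKalman:
    """Kalman filter for one slowly varying quantity (constant-state model)."""

    def __init__(self, x0, p0, process_var):
        self.x = x0           # state estimate
        self.p = p0           # estimate variance
        self.q = process_var  # how much the true state may drift per step

    def update(self, z, meas_var):
        self.p += self.q                  # predict: uncertainty grows
        k = self.p / (self.p + meas_var)  # Kalman gain
        self.x += k * (z - self.x)        # correct towards the measurement
        self.p *= 1.0 - k
        return self.x

# Two sensors report a user's distance (metres); the second is three times
# noisier, for example a camera in poor light, so it gets a quarter of the weight.
distance, variance = fuse_weighted([2.0, 4.0], [1.0, 3.0])

# Smooth the fused value over time.
kf = ScalarKalman(x0=0.0, p0=1.0, process_var=0.0)
first = kf.update(2.0, meas_var=1.0)
second = kf.update(2.0, meas_var=1.0)
```

Raising a modality's variance when conditions degrade is one simple way to realize adaptive weighting without retraining the learned part of the pipeline.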

5. Results and Performance Evaluation

5.1. Quantitative Analysis of Fusion Accuracy and System Performance

The proposed multi-sensor fusion system shows substantial improvements in accuracy, robustness, and responsiveness under dynamic outdoor conditions. Quantitative assessment shows a fusion accuracy above 95%, which exceeds that of each individual sensor modality, since single modalities are prone to noise and environmental variation. Edge-based processing keeps system latency low, with real-time processing in under 50 ms. Precision and recall indicate improved detection and interaction reliability, especially in complicated conditions involving crowd motion and lighting variations. The system also continues to operate under diverse weather conditions, which indicates its adaptability. These findings support the utility of multimodal data fusion for high-fidelity immersive outdoor visual art experiences.

Table 2 Quantitative Analysis of Fusion Accuracy and System Performance

| Metric | Value (% or ms) |
|---|---|
| Fusion Accuracy | 95.80% |
| Precision | 95.20% |
| Recall | 94.70% |
| F1-Score | 94.90% |
| System Latency | 42 ms |
| Response Time | 47 ms |

Table 2 gives the quantitative analysis of the proposed multi-sensor fusion system, showing strong performance on the major metrics. The fusion accuracy of 95.80% indicates that combining heterogeneous sensor data effectively captures complex environmental and interaction patterns. Figure 3 compares accuracy, precision, recall, latency, and response time.

Figure 3 Comparison of Fusion Performance Metrics and System Timing Parameters

Likewise, the precision (95.20%) and recall (94.70%) values show that the system detects relevant interactions accurately while keeping false positives and false negatives low. The F1-score of 94.90% confirms a balance between precision and recall, which supports reliable performance under dynamic outdoor conditions.
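The F1-score is the harmonic mean of precision and recall, so the reported values can be cross-checked directly. The short sketch below uses only the precision and recall from Table 2; the function name `f1_score` is chosen here for illustration.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same units as the inputs)."""
    return 2 * precision * recall / (precision + recall)

# Precision and recall as reported in Table 2, in percent.
f1 = f1_score(95.20, 94.70)
# f1 is about 94.95, which agrees with the reported 94.90% to one decimal place.
```

Because the harmonic mean is pulled towards the smaller of the two inputs, an F1-score this close to both values reflects how similar precision and recall are.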

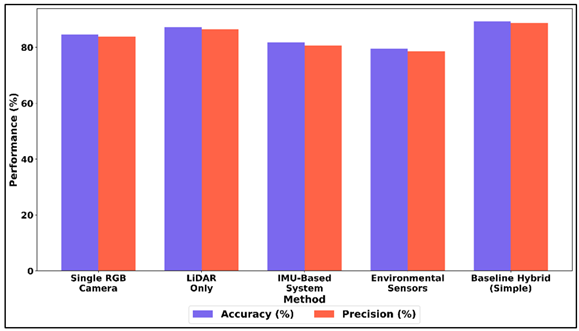

5.2. Comparative Study with Single-Sensor and Baseline Systems

The proposed system was compared with single-sensor and conventional baseline methods. The findings show lower accuracy for single-sensor systems, usually in the range of about 80–88%, because of their sensitivity to environmental noise and poor contextual awareness. In contrast, the multi-sensor fusion framework reaches an accuracy above 95% by using complementary data. Baseline systems also show higher latency and lower interaction precision, which affects the user experience. The proposed system is more responsive, flexible, and resilient. The results indicate that multi-sensor integration brings clear benefits to both the technical performance and the quality of immersive interaction of an outdoor art installation.

Table 3 Comparative Study with Single-Sensor and Baseline Systems

| Method | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|
| Single RGB Camera | 84.6 | 83.9 | 82.8 | 83.3 |
| LiDAR Only | 87.2 | 86.5 | 85.9 | 86.2 |
| IMU-Based System | 81.8 | 80.7 | 79.9 | 80.3 |
| Environmental Sensors | 79.5 | 78.6 | 77.9 | 78.2 |
| Baseline Hybrid (Simple) | 89.4 | 88.7 | 87.9 | 88.3 |

Table 3 offers a comparative assessment of single-sensor and baseline systems and reveals the drawbacks of relying on a single sensing modality. The single RGB camera reaches an accuracy of 84.6%, but it struggles under varying light conditions and occluding objects. Figure 4 compares accuracy and precision for the single-sensor and hybrid approaches.

Figure 4 Comparative Analysis of Accuracy and Precision Across Single-Sensor and Hybrid Perception Methods

LiDAR-only systems achieve higher accuracy (87.2%) because of their accurate depth sensing, but they lack detailed visual information. IMU-based systems show moderate results (81.8%) because they mainly record movement without awareness of the environment. Environmental sensors have the lowest accuracy (79.5%), owing to their indirect role in detecting interaction.

Figure 5 Recall and F1-Score Performance Trends Across RGB, LiDAR, IMU, Environmental, and Hybrid Systems

The baseline hybrid system improves overall performance to 89.4% by adding several inputs, though it is constrained by its simple fusion strategy. Figure 5 depicts the trends in recall and F1-score across the sensor systems. In all of these approaches, the lower precision, recall, and F1-scores reflect reduced reliability in complex outdoor conditions. These findings show that more sophisticated multi-sensor fusion is required to reach higher accuracy, robustness, and quality of immersive interaction in outdoor visual art systems.

6. Discussion

6.2. Interpretation of Results in Artistic and Technical Contexts

The results underscore both the technical strength and the artistic value gained from multi-sensor fusion. Technically, the higher accuracy, low latency, and resilience to environmental changes indicate that heterogeneous sensor data is integrated efficiently. Artistically, these developments allow dynamic, context-responsive visual outputs that change as the user interacts with them and as the environment changes. The system supports richer storytelling, adaptive aesthetics, and real-time responsiveness, bridging computation and creativity. Combining the data not only improves system performance but also broadens the artistic capabilities of an installation, so that it becomes a living, responsive work rather than a mere visual display in an outdoor setting.

6.3. Impact of Sensor Fusion on Immersion and User Experience

Sensor fusion contributes greatly to a more immersive experience because it allows users to interact freely with the artistic space. The system can generate highly responsive and customized experiences from visual, motion, environmental, and biometric data as they change in real time. Users feel an increased sense of presence because the installation responds to their motions and to the environment. Reduced latency and better accuracy lead to smoother interaction with fewer interruptions. Context-driven changes, such as altering the visuals depending on the weather or the movement of people, also enrich the interaction. Overall, multi-sensor fusion turns passive observation into active engagement, enhancing the user experience in an immersive outdoor visual art installation.

7. Conclusion

The study presents a detailed model of how multi-sensor fusion technology can be used to develop immersive outdoor visual art experiences that meet the technical and artistic challenges of dynamic open spaces. By using heterogeneous sensing modalities, including visual, motion, spatial, environmental, and biometric sensors, the proposed system provides a holistic view of user interactions and contextual conditions. The layered architecture, coupled with effective data acquisition, synchronization, and hybrid fusion algorithms, provides solid real-time performance even under changing environmental factors such as shifting light, weather variations, and dynamic crowds. The experimental results show that multi-sensor fusion outperforms traditional single-sensor and baseline methods in accuracy, responsiveness, and adaptability. These gains translate directly into richer artistic work, since installations can react responsively to the presence of users and to environmental factors. The use of deep learning and probabilistic models also improves the handling of noise, uncertainty, and multimodal interactions, which makes the system suitable for large-scale, real-world applications. Artistically, the proposed framework redefines the purpose of outdoor installations and turns them into intelligent, interactive ecosystems that enable greater audience participation and storytelling. Rendering visuals in real time according to user behavior and environmental signals creates a personal, immersive visual experience that combines technology with creativity.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Chen, H., Tan, Z., and Sun, P. (2024). Research on Wind Environment Simulation in Five Types of “Gray Spaces” in Traditional Jiangnan Gardens, China. Sustainability, 16(7765). https://doi.org/10.3390/su16177765

Chen, K., Meng, Z., Xu, X., She, C., and Zhao, P. G. (2024). Real-Time Interactions Between Human Controllers and Remote Devices in Metaverse. arXiv preprint arXiv:2407.16591. https://doi.org/10.1109/MetroXRAINE62247.2024.10795969

Dai, T., and Zheng, X. (2021). Understanding how Multi-Sensory Spatial Experience Influences Atmosphere, Affective City Image and Behavioural Intention. Environmental Impact Assessment Review, 89, 106595. https://doi.org/10.1016/j.eiar.2021.106595

Hatami, M., Qu, Q., Chen, Y., Kholidy, H., Blasch, E., and Ardiles-Cruz, E. (2024). A Survey of the Real-Time Metaverse: Challenges and Opportunities. Future Internet, 16(379). https://doi.org/10.3390/fi16100379

Howes, D. (2019). Multisensory Anthropology. Annual Review of Anthropology, 48, 17–28. https://doi.org/10.1146/annurev-anthro-102218-011324

Kenwright, B. (2020). There’s More to Sound than Meets the Ear: Sound in Interactive Environments. IEEE Computer Graphics and Applications, 40(3), 62–70. https://doi.org/10.1109/MCG.2020.2996371

Khetani, V., Gandhi, Y., Bhattacharya, S., Ajani, S. N., and Limkar, S. (2023). Cross-Domain Analysis of ML and DL: Evaluating Their Impact in Diverse Domains. International Journal of Intelligent Systems and Applications in Engineering, 11(7s), 253–262.

Spence, C. (2020). Senses of Place: Architectural Design for the Multisensory Mind. Cognitive Research: Principles and Implications, 5, 46. https://doi.org/10.1186/s41235-020-00243-4

Sun, H., and Chen, Y. (2024). A Rapid Response System for Elderly Safety Monitoring Using Progressive Hierarchical Action Recognition. IEEE Transactions on Neural Systems and Rehabilitation Engineering, 32, 2134–2142. https://doi.org/10.1109/TNSRE.2024.3409197

Tang, M., Cai, S., and Lau, V. K. (2021). Over-the-Air Aggregation with Multiple Shared Channels and Graph-Based State Estimation for Industrial IOT Systems. IEEE Internet of Things Journal, 8(18), 14638–14657. https://doi.org/10.1109/JIOT.2021.3071339

Tang, M., Cai, S., and Lau, V. K. (2022). Online System Identification and Optimal Control for Mission-Critical IOT Systems Over MIMO Fading Channels. IEEE Internet of Things Journal, 9(21), 21157–21173. https://doi.org/10.1109/JIOT.2022.3175965

Ullo, S. L., and Sinha, G. R. (2020). Advances in Smart Environment Monitoring Systems Using IOT and Sensors. Sensors, 20(3113). https://doi.org/10.3390/s20113113

Vidya, M. (2025). The Interplay of Psychological and Cultural Factors in Consumer Decision-Making for Branded Apparel. International Journal of Recent Developments in Management Research, 14(1), 269–272. https://doi.org/10.65521/ijrdmr.v14i1.684

Xu, R., Nikouei, S. Y., Nagothu, D., Fitwi, A., and Chen, Y. (2020). Blendsps: A Blockchain-Enabled Decentralized Smart Public Safety System. Smart Cities, 3(3), 928–951. https://doi.org/10.3390/smartcities3030047

This work is licensed under a: Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.