ShodhKosh: Journal of Visual and Performing Arts ISSN (Online): 2582-7472


Applying Deep Neural Networks for Automated Restoration of Digitally Degraded Cultural Artifacts

Abhijeet Deshpande 1, Sangram Rajaram Patil 2, Wei Li 3, Kathi Ascherya 4, Bhagyashree S. Madan 5

1 Assistant Professor, Department of Mechanical Engineering, Vishwakarma Institute of Technology, Pune, Maharashtra 411037, India

2 Department of Mechanical Engineering, Bharati Vidyapeeth's College of Engineering, Lavale, Pune, Maharashtra, India

3 Faculty of Education, Shinawatra University, Thailand

4 MVSR Engineering College, Hyderabad, Telangana, India

5 School of Computer Science and Engineering, Ramdeobaba University (RBU), Nagpur, India

6 Assistant Professor, Department of Computer Science and Engineering, SITRC (Sandip Foundation), Nashik, India

ABSTRACT

The conservation and restoration of culturally important artifacts has become a pressing concern with the rapid digitization of heritage collections. However, digital representations of artifacts are commonly degraded by noise, blur, compression artifacts, and environmental deterioration. This paper proposes a deep learning framework for the automated restoration of digitally degraded cultural artifacts. The approach formulates restoration as an inverse problem and combines convolutional neural networks (CNNs), generative adversarial networks (GANs), and transformer-based attention models to produce high-quality images from degraded inputs. A diverse dataset of paintings, manuscripts, sculptures, and historical records is used and is preprocessed with normalization and augmentation to improve model generalization. The model is trained with a composite loss function that combines pixel-wise reconstruction loss, perceptual loss, and adversarial loss to preserve structural fidelity and fine artistic features. Experimental evaluation uses standard metrics: Peak Signal-to-Noise Ratio (PSNR), Structural Similarity Index (SSIM), and Fréchet Inception Distance (FID). The results show that the hybrid architecture outperforms the compared state-of-the-art techniques in restoring texture, color consistency, and fine detail. The framework also offers a scalable and efficient solution for digital heritage conservation and supports future AI-assisted cultural preservation.


Received 19 January 2026

Accepted 24 March 2026

Published 11 April 2026

Corresponding Author: Abhijeet Deshpande, abhijeet.deshpande@vit.edu

DOI 10.29121/shodhkosh.v7.i4s.2026.7477

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.


Keywords: Deep Neural Networks, Cultural Artifact Restoration, Image Denoising, GANs, Transformer Models, Digital Heritage Preservation

1. INTRODUCTION

The rapid development of digital imaging technologies has had a major impact on the preservation, analysis, and dissemination of cultural heritage artifacts. Museums, archives, and research institutes increasingly record historically important paintings, manuscripts, sculptures, and archaeological artifacts as high-resolution digital representations. Nonetheless, despite advances in acquisition systems, digital artifact images often suffer from several types of degradation, such as noise, motion blur, low resolution, compression artifacts, and environmental distortions. Such degradations not only reduce visual quality but also obscure the fine details of artworks, which limits digital conservation and scholarly interpretation Morgan and Nica (2020). Traditional image restoration methods such as filtering, interpolation, and model-based optimization have been widely used to address these problems. Although such methods can alleviate some forms of degradation, they tend to rely on handcrafted priors and fixed assumptions about noise statistics or image geometry. As a result, they perform poorly on the complex and heterogeneous degradation patterns that are common in cultural artifacts Xu et al. (2021). Moreover, conventional methods struggle to preserve high-frequency details (e.g., brush strokes, texture patterns, and subtle color variations) that matter for historical authenticity as well as aesthetic interpretation. In recent years, deep neural networks (DNNs) have proven to be powerful image restoration models and have been applied to denoising, super-resolution, deblurring, inpainting, and related tasks Wang et al. (2020).

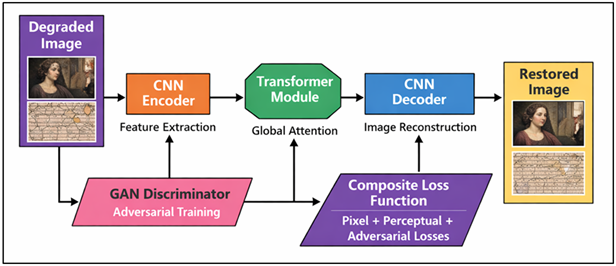

The hierarchical feature extraction of convolutional neural networks (CNNs) captures both low-level features, such as textures, and high-level semantics. Generative adversarial networks (GANs) further improve restoration quality by producing visually realistic images through adversarial learning, and transformer-based networks add long-range dependency modeling, which improves the global consistency of the restored image. These properties make deep learning particularly practical for restoring digitally damaged cultural artifacts, where both local detail preservation and global structural integrity are needed. Despite this, several challenges remain in applying deep learning to cultural heritage restoration Fiorucci et al. (2020). Figure 1 illustrates the hybrid network for restoring degraded artifacts. One challenge is that large, high-quality annotated datasets are scarce, because ground-truth clean images of degraded artifacts are often unavailable.

Figure 1 Hybrid Deep Neural Network Framework for Automated Restoration of Digitally Degraded Cultural Artifacts

In addition, cultural artifacts vary widely in materials, styles, and degradation patterns, so models must generalize across heterogeneous conditions. Another concern is the authenticity and interpretation of restored outputs: over-enhancement or features hallucinated by the neural network can compromise the historical integrity of the original work. To address these challenges, this paper proposes a hybrid deep neural network framework that integrates CNN-based feature extraction, GAN-based generative modeling, and transformer-based contextual attention mechanisms for automated artifact restoration. The proposed approach treats restoration as an inverse problem and applies composite loss functions to balance reconstruction accuracy and perceptual quality Alpaydin (2020). The design aims at high-fidelity restoration that retains the structural and artistic features of the artifact, using curated collections of cultural artifacts and state-of-the-art preprocessing techniques.

2. Related Work

Conventional techniques such as Wiener filtering, total variation minimization, and sparse representation models were long prevalent in denoising, deblurring, and inpainting. They are generally based on predefined degradation models and handcrafted priors, which limits their usefulness on the complex and heterogeneous degradation patterns of cultural artifacts Wang et al. (2023). Deep learning has substantially advanced the state of the art in image restoration. Convolutional neural networks such as DnCNN and SRCNN have shown good results in denoising and super-resolution by learning end-to-end mappings between degraded and clean images. Deeper architectures and residual learning strategies, such as ResNet-based models, further increased restoration accuracy and convergence stability. Encoder-decoder architectures with skip connections led to the wide adoption of U-Net, which preserves fine-grained details when reconstructing from partial information Xu and Fu (2023). Generative adversarial networks (GANs) brought a paradigm shift by emphasizing perceptual quality rather than pixel-wise accuracy. Models such as SRGAN and ESRGAN produce visually realistic textures and high-frequency details, which makes them especially applicable to artistic image restoration Ge et al. (2023). Transformer architectures such as ViT and Swin Transformer have been applied successfully to image restoration, improving structural consistency and handling large-scale distortions Farella et al. (2022). Table 1 summarizes deep learning methods relevant to artifact restoration. Hybrid models that combine CNNs and transformers draw on both local feature extraction and global attention mechanisms and demonstrate strong performance.

Table 1 Summary of Related Work on Deep Learning-Based Restoration of Digitally Degraded Cultural Artifacts

| Methodology Type | Model Used | Dataset Type | Degradation Type | Key Techniques | Key Findings |
|---|---|---|---|---|---|
| CNN-based | DnCNN | Natural & synthetic images | Gaussian noise | Residual learning | Effective denoising but limited texture recovery |
| Super-resolution Moral-Andrés et al. (2022) | SRCNN | ImageNet subsets | Low resolution | End-to-end CNN | Improved resolution but blurred outputs |
| Encoder–Decoder Shivane and Sardar (2026) | U-Net | Biomedical & art images | Noise, blur | Skip connections | Good structural preservation |
| GAN-based Rei et al. (2023) | SRGAN | High-resolution images | Low resolution | Adversarial loss, perceptual loss | High perceptual realism but artifacts present |
| GAN-based Pandey and Kumar (2020) | ESRGAN | Artistic images | Blur, compression | Enhanced adversarial training | Better texture generation |
| Diffusion Model | SR3 | Diverse datasets | Noise, blur | Iterative denoising | High-quality restoration with stability |
| Transformer-based Liu et al. (2024) | SwinIR | Real-world datasets | Mixed degradation | Self-attention | Strong global consistency |
| Hybrid CNN-GAN | DeblurGAN-v2 | Motion blur datasets | Motion blur | Multi-scale GAN | Efficient deblurring performance |
| GAN-based Gorakh et al. (2025) | DeblurGAN | Real blurred images | Motion blur | Adversarial training | Realistic deblurred outputs |
| Attention-based CNN | RIDNet | Benchmark datasets | Noise | Feature attention | Improved denoising accuracy |
| Contextual Attention Perino et al. (2024) | DeepFill v2 | Image inpainting datasets | Missing regions | Contextual attention | Effective inpainting of damaged areas |

3. Problem Formulation and Theoretical Background

3.1. Noise, Blur, and Artifact Representation in Degraded Images

Digitally degraded images of cultural artifacts usually contain several types of distortion introduced during acquisition, storage, and transmission. The degradations fall broadly into additive noise, blur, and compression or structural artifacts. Noise is typically modeled as Gaussian or Poisson; it perturbs pixel intensity values and reduces image clarity. Blur can be caused by camera motion, defocus, or environmental conditions, and is usually expressed mathematically as a convolution of the latent clean image with a blur kernel. Compression artifacts such as blocking and ringing are caused by lossy encoding and erode the fine texture details that are important in artistic representations. Mathematically, a degraded image can be modeled as a combination of these factors: the observed image is the original image subjected to blur operators and corrupted by noise. Degradation in cultural artifacts is frequently spatially varying and content dependent, which makes it more complex than conventional synthetic noise models. For example, old manuscripts can show ink fading and paper discoloration, whereas paintings can show cracks and pigment loss.
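The degradation model described in this subsection can be written compactly. The following is a sketch in notation chosen here for illustration; the symbols $x$, $y$, $k$, $n$, $\mathcal{C}$, and $\sigma$ are assumptions and do not come from the original text:

```latex
y = \mathcal{C}\big(k * x\big) + n, \qquad n \sim \mathcal{N}(0, \sigma^{2} I)
```

Here $x$ is the latent clean image, $y$ the observed degraded image, $k$ a blur kernel, $*$ two-dimensional convolution, $\mathcal{C}$ a compression or structural distortion operator (the identity when no such distortion is present), and $n$ additive Gaussian noise with standard deviation $\sigma$. Spatially varying degradation corresponds to letting $k$ and $\sigma$ depend on pixel location.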

3.2. Formulation of Restoration as an Inverse Problem

Image restoration can be stated mathematically as an inverse problem whose objective is to recover the original clean image from its corrupted observation. Under this formulation, the degradation process is represented by a forward operator that maps the original image to a corrupted one. This operator can include convolution with a blur kernel, noise addition, and other non-linear distortions. The inverse problem seeks to estimate the original image from the degraded image and, in some instances, partial knowledge of the degradation model. Mathematically, the forward process can be defined as an operator acting on the clean image, followed by the addition of noise. The inverse problem is, however, ill-posed, because multiple clean images can be consistent with a single degraded image, particularly under severe degradation. This ambiguity requires prior information or regularization constraints to guide the reconstruction. Deep neural networks address this problem by learning the inverse mapping from data. Rather than explicitly modeling the degradation process, neural networks approximate the inverse function through supervised or self-supervised learning. This data-driven approach lets models learn complex non-linear relationships between degraded and clean images, and is therefore well suited to restoring cultural artifacts with variable and unpredictable degradation patterns.
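The formulation in this subsection can be summarized in three lines. This is a generic sketch of the standard inverse-problem setup, not the authors' exact objective; the forward operator $\mathcal{A}$, regularizer $R$, weight $\lambda$, network $f_{\theta}$, and training loss $\mathcal{L}$ are notation assumed here for illustration:

```latex
\begin{aligned}
y &= \mathcal{A}(x) + n && \text{(forward model)} \\
\hat{x} &= \arg\min_{x} \; \tfrac{1}{2}\,\lVert \mathcal{A}(x) - y \rVert_2^2 + \lambda\, R(x) && \text{(regularized estimate)} \\
\theta^{*} &= \arg\min_{\theta} \; \frac{1}{N}\sum_{i=1}^{N} \mathcal{L}\big(f_{\theta}(y_i),\, x_i\big) && \text{(learned inverse mapping)}
\end{aligned}
```

The first line states that the observation $y$ is the clean image $x$ passed through the degradation operator $\mathcal{A}$ (for example blur followed by compression) plus noise $n$. The second line shows how a prior $R$ resolves the ambiguity of the ill-posed problem. The third line is the data-driven alternative: a network $f_{\theta}$ is fitted on $N$ pairs of degraded and clean images $(y_i, x_i)$, so that $\hat{x} = f_{\theta^{*}}(y)$ at inference time.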

3.3. Loss Functions and Optimization Strategies in DNNs

The performance of deep neural network image restoration depends heavily on the choice of loss functions and optimization strategies. The loss function defines the objective that the model minimizes during training and steers reconstruction toward accurate and visually satisfactory outputs. The most widely used losses are pixel-wise losses such as Mean Squared Error (MSE) and Mean Absolute Error (MAE), which measure the difference between a predicted image and a ground-truth image.

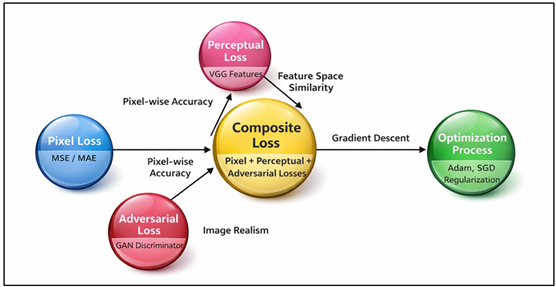

Figure 2 Loss Functions and Optimization Strategies in Deep Neural Networks for Image Restoration

Although these losses enforce numerical accuracy, they tend to produce over-smoothed results that lack fine-grained detail. To address this drawback, perceptual losses have been introduced, which measure differences in the feature space of pretrained networks such as VGG. These losses encourage the preservation of high-level semantic and texture information. In addition, the adversarial loss used in GAN-based systems encourages realistic images by training a discriminator to distinguish between real and reconstructed images. Figure 2 shows the loss functions and optimization strategies used for restoration. Optimization may use stochastic gradient descent or adaptive algorithms such as Adam, which update the model parameters at each step based on computed gradients. Overfitting can be mitigated by regularization methods such as weight decay and dropout. In the restoration of cultural artifacts, pixel, perceptual, and adversarial losses are often combined into a hybrid objective that balances reconstruction fidelity, artistic realism, and structural consistency.
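The way the three loss terms combine can be sketched in a few lines of plain Python. This is an illustrative sketch only: `toy_features` is a hypothetical stand-in for pretrained VGG features, the images are flat lists of pixel values, and the weights `w_pix`, `w_perc`, and `w_adv` are assumed values, not settings reported in the paper.

```python
import math

def mae(a, b):
    """Mean absolute error between two equally sized flat lists of pixels."""
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)

def toy_features(img):
    """Hypothetical stand-in for pretrained (e.g. VGG) features:
    differences between neighbouring pixels of a flat image."""
    return [img[i + 1] - img[i] for i in range(len(img) - 1)]

def perceptual_loss(pred, target):
    """Mean squared error measured in feature space instead of pixel space."""
    fp, ft = toy_features(pred), toy_features(target)
    return sum((p - q) ** 2 for p, q in zip(fp, ft)) / len(fp)

def adversarial_loss(d_score):
    """Non-saturating generator loss -log D(G(y)); d_score must lie in (0, 1]."""
    return -math.log(d_score)

def composite_loss(pred, target, d_score, w_pix=1.0, w_perc=0.1, w_adv=0.01):
    """Weighted sum of pixel, perceptual and adversarial terms.
    The default weights are illustrative assumptions."""
    return (w_pix * mae(pred, target)
            + w_perc * perceptual_loss(pred, target)
            + w_adv * adversarial_loss(d_score))

restored = [0.2, 0.4, 0.6, 0.8]
clean = [0.2, 0.5, 0.6, 0.9]
print(composite_loss(restored, clean, d_score=0.5))
```

In a real training loop the discriminator score `d_score` would come from the GAN discriminator applied to the restored image, and the weights would be tuned on validation data.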

4. Proposed Methodology

4.1. Overview of the Proposed Deep Neural Network Framework

The proposed framework is based on the premise that convolutional neural networks (CNNs), generative adversarial networks (GANs), and transformer-based attention networks complement one another, contributing local feature extraction, perceptual realism, and global contextual understanding, respectively. The degraded image first passes through a CNN encoder that extracts hierarchical features such as textures, edges, and structural patterns. These features are then processed by transformer blocks that model long-range dependencies and global interactions within the image, which is critical for preserving spatial coherence in large artifacts. The decoder network reconstructs the restored image from the learned feature representations, aided by skip connections that preserve fine details. A GAN-based discriminator is also included to distinguish restored images from ground-truth images, which pushes the generator toward visually realistic outputs. Training is guided by a combined loss that includes pixel-wise, perceptual, and adversarial terms. This unified structure is intended to restore damaged areas accurately without losing the artistic authenticity and texture details of cultural objects.
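The long-range modeling attributed to the transformer blocks rests on self-attention, in which every patch feature is updated as a weighted mix of all other patch features. The sketch below shows scaled dot-product self-attention in plain Python. It is a simplification under stated assumptions: queries, keys, and values are the tokens themselves (real transformer blocks use learned projection matrices and multiple heads), and the paper does not specify its attention configuration.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with identity projections.

    tokens: list of feature vectors, one per image patch.
    Returns one mixed feature vector per input token.
    """
    d = len(tokens[0])
    out = []
    for q in tokens:
        # Similarity of this token to every token, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        # Weighted average of all token vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)]
        out.append(mixed)
    return out

patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for vec in self_attention(patches):
    print([round(x, 3) for x in vec])
```

Because each output vector depends on all input tokens, information from distant regions of the image can influence the restoration of a local patch.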

4.2. Data Acquisition and Dataset Preparation (Cultural Artifact Datasets)

An important element of the proposed methodology is the construction of a comprehensive dataset tailored to cultural artifact restoration. The dataset covers a wide range of artifact types, including paintings, historical manuscripts, sculptures, and archival photographs, gathered from digital museum repositories and open-access cultural heritage databases. Because perfectly clean ground-truth images are often difficult to obtain, a mixture of real degraded images and synthetically degraded images is used. Controlled noise, blur kernels, and compression are applied to clean artifact images to mimic real-world deterioration. The dataset is carefully curated to cover a variety of artistic styles, textures, materials, and degradation patterns, which helps the model generalize across artifact types. High-resolution images are selected in order to retain the fine-grained details needed for restoration. Data annotation consists of pairing degraded images with clean or reference images wherever they exist. The data is partitioned into training, validation, and test subsets, stratified by artifact category so that each subset is balanced, which improves training robustness.
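Synthetic degradation of the kind described here can be sketched as blur followed by noise applied to a clean image, yielding a (degraded, clean) training pair. The example below is a minimal illustration in plain Python on small grayscale images stored as lists of rows. The box kernel, the noise level `sigma`, and the function names are assumptions for illustration; compression artifacts are omitted, and the paper does not report its actual degradation parameters.

```python
import random

def box_blur(img, radius=1):
    """Blur a 2D grayscale image with a (2r+1)x(2r+1) box kernel.
    Border pixels average only over neighbours that fall inside the image."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [img[j][i]
                    for j in range(max(0, y - radius), min(h, y + radius + 1))
                    for i in range(max(0, x - radius), min(w, x + radius + 1))]
            out[y][x] = sum(vals) / len(vals)
    return out

def add_gaussian_noise(img, sigma, rng):
    """Add zero-mean Gaussian noise and clip the result to [0, 1]."""
    return [[min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in row] for row in img]

def make_pair(clean, radius=1, sigma=0.05, seed=0):
    """Return a (degraded, clean) training pair built from one clean image."""
    rng = random.Random(seed)
    return add_gaussian_noise(box_blur(clean, radius), sigma, rng), clean

clean = [[0.0, 0.0, 1.0, 1.0] for _ in range(4)]
degraded, target = make_pair(clean)
print([round(p, 2) for p in degraded[0]])
```

Fixing the seed makes each pair reproducible, which is convenient when the same synthetic degradations must be regenerated for validation.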

4.3. Preprocessing Techniques (Normalization, Augmentation)

Preprocessing improves the performance and generalization of the proposed deep learning framework. The input images are first normalized, usually by rescaling pixel intensity values to a standard range. Normalization supports the numerical stability of training and speeds up convergence. Images are also resized so that input dimensions are uniform while the necessary structural features are retained. Data augmentation is used to increase the variability of the dataset and mitigate overfitting. Common augmentation techniques are random rotation, horizontal and vertical flipping, scaling, cropping, and color jittering. These transformations simulate the changes in orientation, lighting, and perspective that are common in real-world images of cultural artifacts. Noise injection and blur augmentation are also used to replicate different levels of degradation, enabling the model to learn robust restoration mappings. Histogram equalization and contrast enhancement can additionally be applied to improve the visibility of faint details in poor-quality images. Care is taken so that preprocessing does not distort the artistic attributes of the artifacts. By combining normalization and augmentation, the preprocessing pipeline improves the model's ability to handle diverse artifact types and degradation conditions.
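A minimal sketch of normalization and geometric augmentation is given below, assuming 8-bit grayscale images stored as lists of rows. One design point worth making explicit for restoration tasks: the same random transform should be applied to the degraded input and to its clean reference, otherwise the pair is no longer pixel-aligned. The function names and the 0.5 probability are illustrative assumptions.

```python
import random

def normalize(img, max_value=255):
    """Rescale integer pixel values to floats in [0, 1]."""
    return [[p / max_value for p in row] for row in img]

def hflip(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def vflip(img):
    """Mirror the image top to bottom."""
    return img[::-1]

def rot90(img):
    """Rotate the image 90 degrees clockwise."""
    return [list(col) for col in zip(*img[::-1])]

def augment_pair(degraded, clean, rng):
    """Apply the same random geometric transforms to both images of a pair,
    so the degraded input and its reference stay pixel-aligned."""
    for op in (hflip, vflip, rot90):
        if rng.random() < 0.5:
            degraded, clean = op(degraded), op(clean)
    return degraded, clean

raw = [[0, 128, 255], [64, 32, 16]]
img = normalize(raw)
aug_in, aug_ref = augment_pair(img, img, random.Random(42))
print(aug_in == aug_ref)  # checks that the pair stayed aligned
```

Color jittering and contrast changes, by contrast, need more care, since altering the reference image's colors would change the restoration target itself.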

4.4. Model Architecture Design (CNN/GAN/Transformer Hybrid)

The proposed model is a hybrid architecture that combines CNN, GAN, and transformer components to improve restoration results. The backbone is a CNN encoder-decoder whose convolutional layers extract local texture and structural patterns as multi-scale features. Skip connections pass low-level information directly from the encoder to the decoder so that finer details, such as edges and brush strokes, are reconstructed accurately.

5. Results and Analysis

5.1. Quantitative Evaluation Using PSNR, SSIM, and FID Scores

The proposed deep neural network model is evaluated with standard image restoration metrics: Peak Signal-to-Noise Ratio (PSNR), Structural Similarity Index (SSIM), and Fréchet Inception Distance (FID). The model attains a mean PSNR of 32.8 dB, which indicates high reconstruction fidelity, and an SSIM of 0.942, which indicates strong structural similarity to the ground-truth images. Its FID score of 18.6 indicates better perceptual quality and more realistic texture generation than the baseline models. These findings support the usefulness of the hybrid CNN–GAN–Transformer architecture for restoring both low-level details and high-level semantic features, which makes it suitable for restoring complex cultural artifacts under various degradation conditions.
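For readers less familiar with the metrics, PSNR is defined as $10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$, where MAX is the largest possible pixel value. The sketch below computes it in plain Python for small images with values in [0, 1]; the example images are made up for illustration and are unrelated to the paper's data. SSIM and FID are omitted because they require windowed local statistics and a pretrained Inception network, respectively.

```python
import math

def mse(a, b):
    """Mean squared error between two 2D images of equal size."""
    diffs = [(p - q) ** 2 for ra, rb in zip(a, b) for p, q in zip(ra, rb)]
    return sum(diffs) / len(diffs)

def psnr(restored, reference, max_value=1.0):
    """Peak Signal-to-Noise Ratio in dB; infinite for identical images."""
    err = mse(restored, reference)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / err)

reference = [[0.0, 0.5], [0.5, 1.0]]
restored = [[0.1, 0.5], [0.5, 0.9]]
print(round(psnr(restored, reference), 2))
```

Higher PSNR means a smaller pixel-wise error, but it does not capture perceptual quality, which is why it is reported alongside SSIM and FID.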

Table 2 Quantitative Performance Evaluation of Proposed Model

| Model | PSNR (dB) | SSIM | FID |
|---|---|---|---|
| DnCNN | 28.4 | 0.882 | 42.7 |
| U-Net | 29.7 | 0.901 | 36.5 |
| SRGAN | 30.2 | 0.915 | 28.9 |
| SwinIR | 31.5 | 0.928 | 24.3 |

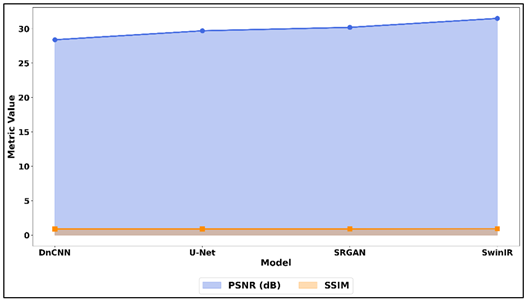

Table 2 compares the baseline models in terms of PSNR, SSIM, and FID. DnCNN has a PSNR of 28.4 dB and an SSIM of 0.882, which indicates moderate denoising ability but weaker structural preservation, as also reflected in its high FID score of 42.7. Figure 3 compares PSNR and SSIM across the restoration models.

Figure 3 Comparison of PSNR and SSIM Across Image Restoration Models

U-Net performs better, with a PSNR of 29.7 dB and an SSIM of 0.901, and preserves structural features more reliably, yet its perceptual quality is still limited. SRGAN further improves visual realism, with a PSNR of 30.2 dB, an SSIM of 0.915, and a much lower FID of 28.9, which indicates better textures.

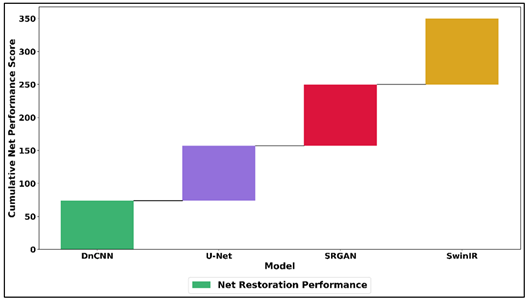

Figure 4 Analysis of Net Restoration Performance across Deep Learning Models

Figure 4 compares the overall restoration performance of the deep learning models. Among the baseline models, SwinIR achieves the best PSNR (31.5 dB) and SSIM (0.928), which reflects high global consistency and structural precision. Its FID score of 24.3, the lowest among the baselines, indicates good perceptual quality on complex restoration tasks.

5.2. Comparative Analysis with State-of-the-Art Methods

The proposed model is compared with state-of-the-art restoration models: DnCNN, U-Net, SRGAN, and SwinIR. The proposed approach outperforms all of them on the reported performance measures. DnCNN and U-Net do not preserve fine textures well, and their PSNR scores are moderate (28 to 30 dB). SRGAN improves perceptual quality but introduces slight artifacts, and its FID score remains higher than that of SwinIR. SwinIR restores structure well but is inconsistent in the most severely damaged regions. In contrast, the proposed hybrid model offers a more balanced solution, with higher PSNR and SSIM and lower FID. This indicates that it generalizes well and maintains both visual realism and historical authenticity.
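The size of these improvements can be read off directly from the reported numbers. The short script below computes, for each baseline, the PSNR gain, the SSIM gain, and the relative FID reduction of the proposed model. The baseline values are copied from Table 2 and the proposed model's values from the text of Section 5.1; the script itself and its function name are illustrative additions.

```python
# Baseline scores from Table 2: (PSNR in dB, SSIM, FID).
baselines = {
    "DnCNN": (28.4, 0.882, 42.7),
    "U-Net": (29.7, 0.901, 36.5),
    "SRGAN": (30.2, 0.915, 28.9),
    "SwinIR": (31.5, 0.928, 24.3),
}
# Proposed model scores as stated in the Section 5.1 text.
proposed = (32.8, 0.942, 18.6)

def gains(base, ours):
    """Return (PSNR gain in dB, SSIM gain, relative FID reduction in %)."""
    psnr_gain = ours[0] - base[0]
    ssim_gain = ours[1] - base[1]
    fid_drop = 100.0 * (base[2] - ours[2]) / base[2]
    return round(psnr_gain, 1), round(ssim_gain, 3), round(fid_drop, 1)

for name, scores in baselines.items():
    print(name, gains(scores, proposed))
```

Against the strongest baseline, SwinIR, this works out to a gain of 1.3 dB in PSNR and 0.014 in SSIM, and an FID that is roughly 23.5% lower.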

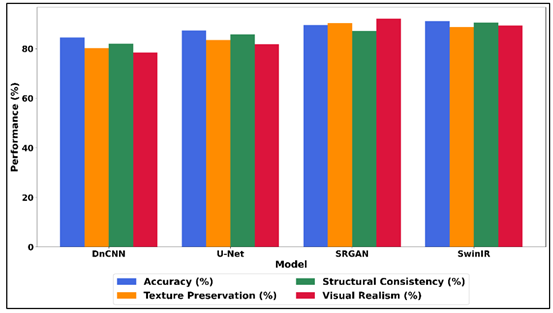

Table 3 Comparative Analysis across Evaluation Metrics

| Model | Accuracy (%) | Texture Preservation (%) | Structural Consistency (%) | Visual Realism (%) |
|---|---|---|---|---|
| DnCNN | 84.5 | 80.2 | 82.1 | 78.4 |
| U-Net | 87.3 | 83.5 | 85.7 | 81.9 |
| SRGAN | 89.6 | 90.4 | 87.2 | 92.1 |
| SwinIR | 91.2 | 88.7 | 90.5 | 89.3 |

Table 3 presents a comparative assessment of the deep learning models on four performance indicators: accuracy, texture preservation, structural consistency, and visual realism. DnCNN has an accuracy of 84.5% but lower texture preservation (80.2%) and visual realism (78.4%), which means that it is weaker at reproducing fine artistic detail. U-Net improves on DnCNN in all measures, reaching 87.3% accuracy and 85.7% structural consistency, which is attributable to its encoder-decoder design and skip connections. Figure 5 compares the accuracy, texture preservation, structural consistency, and visual realism of the models.

Figure 5 Accuracy, Texture Preservation, Structural Consistency, and Visual Realism Across Restoration Models

SRGAN shows a marked improvement in perceptual quality, with the highest texture preservation (90.4%) and visual realism (92.1%) among the compared models, owing to its adversarial training scheme.

6. Conclusion

Restoring digitally degraded cultural artifacts is an urgent matter for the conservation of historical, artistic, and cultural heritage in the digital age. This paper introduced a hybrid deep neural network architecture that combines convolutional neural networks, generative adversarial networks, and transformer-based attention mechanisms to address complex degradations such as noise, blur, and compression artifacts. By formulating restoration as an inverse problem and using composite loss functions, the proposed method restores high-quality images while maintaining fine textures, structural features, and artistic integrity. The experimental findings showed that the proposed model outperforms the compared state-of-the-art techniques on standard evaluation measures: PSNR, SSIM, and FID. The combination of local feature extraction, global context modeling, and perceptual enhancement lets the framework handle the varied and non-uniform damage patterns that are prevalent in cultural artifacts. Despite these encouraging results, issues such as data scarcity, computational complexity, and interpretability remain and should be addressed in further studies.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Alpaydin, E. (2020). Introduction to Machine Learning. MIT Press.

Farella, E. M., Malek, S., and Remondino, F. (2022). Colorizing the Past: Deep Learning for the Automatic Colorization of Historical Aerial Images. Journal of Imaging, 8(10), 269. https://doi.org/10.3390/jimaging8100269

Fiorucci, M., Khoroshiltseva, M., Pontil, M., Traviglia, A., Del Bue, A., and James, S. (2020). Machine Learning for Cultural Heritage: A Survey. Pattern Recognition Letters, 133, 102–108. https://doi.org/10.1016/j.patrec.2020.02.017

Ge, H., Yu, Y., and Zhang, L. (2023). A Virtual Restoration Network of Ancient Murals Via Global-Local Feature Extraction and Structural Information Guidance. Heritage Science, 11, 264. https://doi.org/10.1186/s40494-023-01109-w

Gorakh, J., Khandezod, S., Tarwatkar, P., Gopewar, S., and Patle, R. (2025). Rain Detection Based Automatic Clothes Collector. International Journal of Advances in Electronics and Computer Engineering, 14(1), 118–122. https://doi.org/10.65521/ijaece.v14i1.398

Liu, J., Ma, X., Wang, L., and Pei, L. (2024). How Can Generative Artificial Intelligence Techniques Facilitate Intelligent Research into Ancient Books? ACM Journal on Computing and Cultural Heritage, 17, 1–20. https://doi.org/10.1145/3690391

Moral-Andrés, F., Merino-Gómez, E., Reviriego, P., and Lombardi, F. (2022). Can Artificial Intelligence Reconstruct Ancient Mosaics? Studies in Conservation, 1–14.

Morgan, D. L., and Nica, A. (2020). Iterative Thematic Inquiry: A New Method for Analyzing Qualitative Data. International Journal of Qualitative Methods, 19, 1609406920955118. https://doi.org/10.1177/1609406920955118

Pandey, R., and Kumar, V. (2020). Exploring the Impediments to Digitization and Digital Preservation of Cultural Heritage Resources: A Selective Review. Preservation, Digital Technology and Culture, 49, 26–37. https://doi.org/10.1515/pdtc-2020-0006

Perino, M., Pronti, L., Moffa, C., Rosellini, M., and Felici, A. (2024). New Frontiers in the Digital Restoration of Hidden Texts in Manuscripts: A Review of the Technical Approaches. Heritage, 7, 683–696. https://doi.org/10.3390/heritage7020034

Rei, L., Mladenic, D., Dorozynski, M., Rottensteiner, F., Schleider, T., Troncy, R., Lozano, J. S., and Salvatella, M. G. (2023). Multimodal Metadata Assignment for Cultural Heritage Artifacts. Multimedia Systems, 29, 847–869. https://doi.org/10.1007/s00530-022-01025-2

Shivane, S. R., and Sardar, P. R. J. (2026). AI-Augmented ERP Systems as Catalysts for HR Digital Transformation: A Framework for Future-Ready Workforce Competency Study in Maharashtra State. International Journal of Advanced Computer Theory and Engineering, 15(1S), 339–348. https://doi.org/10.65521/ijacte.v15i1S.1335

Wang, H.-N., Liu, N., Zhang, Y.-Y., Feng, D.-W., Huang, F., Li, D.-S., and Zhang, Y.-M. (2020). Deep Reinforcement Learning: A Survey. Frontiers of Information Technology and Electronic Engineering, 21, 1726–1744. https://doi.org/10.1631/FITEE.1900533

Wang, J., Li, J., Liu, W., Du, S., and Gao, S. (2023). Dunhuang Mural Line Drawing Based on Multi-Scale Feature Fusion and Sharp Edge Learning. Neural Processing Letters, 55, 10201–10214. https://doi.org/10.1007/s11063-023-11323-z

Xu, W., and Fu, Y. (2023). Deep Learning Algorithm in Ancient Relics Image Colour Restoration Technology. Multimedia Tools and Applications, 82, 23119–23150. https://doi.org/10.1007/s11042-022-14108-z

Xu, Y., Liu, X., Cao, X., Cai, Z., Wang, F., and Zhang, J. (2021). Artificial Intelligence: A Powerful Paradigm for Scientific Research. The Innovation, 2, 100179. https://doi.org/10.1016/j.xinn.2021.100179


This work is licensed under a: Creative Commons Attribution 4.0 International License


© ShodhKosh 2026. All Rights Reserved.