ShodhKosh: Journal of Visual and Performing Arts

ISSN (Online): 2582-7472

The Evolution of Post-Photography Art in an Era Dominated by AI Image Synthesis

Swati Shivkumar Shriyal 1, Dr. E. Senthil Kumaran 2, Dr. Divya Mishra 3, Ayush Gandhi 4, Yagna B. Adhyaru 5, Saraswati B. 6, Srimathi N. 7

1 Department of Artificial Intelligence, Vishwakarma University, Maharashtra, Pune, 411048, India

2 Assistant Professor, Department of Visual Communication, Sathyabama Institute of Science and Technology, Chennai, Tamil Nadu, India

3 Associate Professor, Department of Computer Science and Engineering (AIML), Noida Institute of Engineering and Technology, Greater Noida, Uttar Pradesh, India

4 Centre of Research Impact and Outcome, Chitkara University, Rajpura-140417, Punjab, India

5 Assistant Professor, Faculty of Engineering, Gokul Global University, Sidhpur, Gujarat, India

6 Assistant Professor, Computer Science, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

7 Assistant Professor, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, Chennai, Tamil Nadu 600080, India

ABSTRACT

The advent of image generation via artificial intelligence has drastically changed the basis of visual culture and contemporary art practice. Historically, photography has served as a tool for recording reality, with optical capture and physical cameras used to record visual data from the outside world. However, recent developments in machine learning, especially generative models such as Generative Adversarial Networks (GANs), diffusion models, and neural rendering systems, have made it possible to produce exceptionally realistic images without any physical photographic capture. Within this technological change, post-photography is a conceptual and artistic paradigm in which images are produced, altered, or synthesized by computation instead of being captured through conventional photographic processes. Artists in this new paradigm are working more and more closely with AI systems, using algorithmic tools to discover new aesthetics, generative workflows, and creative human-machine hybridity. The availability of AI-based visual tools has also democratized image creation, so that people with minimal technical expertise can create advanced visual art.

Received 15 January 2026

Accepted 19 March 2026

Published 11 April 2026

Corresponding Author: Swati Shivkumar Shriyal, swati.shriyal@vupune.ac.in

DOI: 10.29121/shodhkosh.v7.i4s.2026.7476

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License. With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Post-Photography, Artificial Intelligence Art, Image Synthesis, Generative Models, Algorithmic Creativity, Computational Aesthetics

1. INTRODUCTION

The rapid development of digital technologies has greatly changed how visual images are produced, distributed, and interpreted in modern society. Photography, long a principal tool for capturing and documenting reality, is being radically transformed by artificial intelligence-based image-generation algorithms. Historically, photography relied on optical devices such as cameras and sensors to record the light reflected from real-world scenes, yielding images that were commonly taken to be true depictions of reality. Recent advances in artificial intelligence, machine learning, and computational graphics, however, have provided new ways of generating images that no longer rely on the physical capture of a photograph Mohamed and Sadek (2023). This has driven the growth of what scholars and artists increasingly refer to as post-photography, a conceptual framework that renegotiates the nature, intent, and authenticity of images in the digital era. Artificial intelligence is one of the most powerful forces behind this change. Generative machine learning models, especially Generative Adversarial Networks (GANs), diffusion-based image synthesis models, and neural rendering methods, have demonstrated the ability to produce highly realistic and visually appealing images from textual prompts, data patterns, or latent representations. In contrast to traditional photography, where the viewer sees existing scenes captured through a camera, these AI systems can form purely synthetic visual representations that resemble photographs yet are constructed by computation Lambright (2023). Consequently, the boundary between captured reality and algorithmically produced imagery is becoming increasingly indistinct, which calls into question long-standing assumptions about the truthfulness and documentary nature of photographic images.

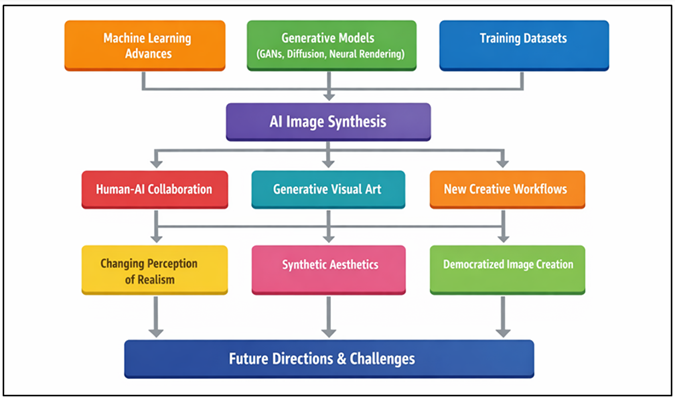

AI-generated imagery has also transformed art practices and creative workflows. Artists are no longer limited to physical cameras, lighting setups, and post-processing software. Instead, they can work with AI systems that operate as artistic collaborators, creating a wide variety of visual outputs from patterns learned on large datasets. Model training, algorithmic manipulation, and iterative prompting allow artists to experiment with new visual styles, create complex compositions, and explore forms of generative art that would be hard or impossible to create through traditional photographic techniques Başarir (2022). Figure 1 illustrates how AI technologies have turned photography into post-photographic art. This change has expanded the limits of artistic experimentation and has produced new forms of hybrid creativity that combine human imagination with computational power.

Figure 1 Conceptual Block Diagram of the Evolution of Post-Photography Art in the Era of AI Image Synthesis

Beyond the artistic field, the emergence of post-photographic images has significant cultural and aesthetic consequences. AI-generated images have begun to affect advertising, film, social networks, digital storytelling, and multimedia production, and to change how viewers perceive visual authenticity and realism. Image production has also been democratized by AI-enabled creative tools that allow people without professional photography skills to generate advanced imagery Short and Short (2023). At the same time, this technological change raises critical questions about authorship, intellectual property, ethical responsibility, and the credibility of images as sources of information.

2. Related Work

Post-photography has developed at the intersection of digital imaging technologies, computational creativity, and AI-driven visual synthesis. Early research on digital photography examined how image-manipulation software and digital editing tools affect photographic authenticity and visual representation. Scholars of media studies and visual culture emphasized that digital image processing tools such as Photoshop introduced new kinds of visual manipulation, which challenged the classical understanding of photography as an objective representation of reality Grover et al. (2020). These advances laid the conceptual foundation for what would later be known as post-photographic art, in which images are increasingly constructed, edited, or synthesized rather than directly captured. As machine learning developed, studies turned to how generative algorithms can be used to create visual content. GAN-based systems showed that realistic images can be created by training two neural networks, a generator and a discriminator, in a competitive learning setup. Later studies scaled up GAN architectures to generate high-resolution portraits, artificial landscapes, and artistic visual styles Liu et al. (2021). These experiments indicated that machine learning can generate images that look like photographs even though they are entirely synthetic. More recent studies have considered diffusion-based generative models and larger multimodal systems that generate images from textual inputs. Text-to-image systems such as DALL·E, Stable Diffusion, and Midjourney have considerably improved image realism, texture quality, and stylistic diversity. Researchers of AI-based visual creativity claim that these systems enable novel types of generative art, in which textual descriptions, conceptual prompts, or algorithmic constraints drive the creative flow Xu et al. (2024). This has led to new artistic processes in which artists work with AI systems to discover new aesthetic forms and modes of visual storytelling. Researchers have also explored the cultural and aesthetic implications of AI-generated imagery. According to studies on digital art and computational aesthetics, AI technologies are disrupting traditional concepts of authorship, originality, and creative agency Ramesh et al. (2022). Table 1 summarizes previous research on AI image synthesis and post-photography. Some scholars argue that AI systems become creative partners that widen the opportunities for artistic experimentation, whereas others are more concerned with ethical issues such as dataset bias, intellectual property, and the authenticity of visual media.

Table 1 Related Work on Post-Photography, AI Image Synthesis, and Generative Visual Art

| Research Focus | AI Technique / Model | Application Area | Key Contribution | Limitation |
| --- | --- | --- | --- | --- |
| Generative image synthesis Png et al. (2024) | Generative Adversarial Networks (GAN) | Synthetic image generation | Introduced GAN framework enabling realistic image creation | Training instability and mode collapse |
| Deep convolutional generative models Vartiainen and Tedre (2023) | DCGAN | Visual feature learning | Demonstrated stable GAN training for image synthesis | Limited image resolution |
| High-resolution generative art Wei et al. (2022) | StyleGAN | Portrait synthesis and generative art | Generated photorealistic human faces with style control | Requires large computational resources |
| Diffusion-based image generation Du et al. (2024) | Diffusion Models | AI image synthesis | Improved visual realism and diversity in generated images | Slow generation process |
| Text-to-image generation Sheela et al. (2025) | DALL·E model | AI-driven digital art | Enabled generation of images from textual prompts | Ethical concerns on training data |
| AI and computational creativity | Generative art algorithms | AI-assisted artistic production | Discussed AI as a creative collaborator | Conceptual rather than empirical study |
| AI-generated artistic styles Bird and Lotfi (2023) | Creative Adversarial Networks | Computational aesthetics | Generated artworks beyond learned artistic styles | Evaluation of creativity remains subjective |
| Cultural implications of AI imagery Zhu et al. (2023) | Computational media analysis | Visual culture studies | Analyzed transformation of photography in AI era | Limited focus on technical models |
| Post-photography in digital media Li et al. (2024) | AI image manipulation tools | Media and communication studies | Examined authenticity and visual truth in synthetic images | Lack of large-scale experimental evaluation |

3. Technological Evolution of AI Image Synthesis

3.1. Development of machine learning in visual media

Machine learning has radically changed the technology of visual media. The first generation of digital image processing systems depended mostly on rule-based algorithms aimed at specific tasks such as edge detection, image segmentation, and pattern recognition. These methods required manually designed features and predetermined instructions, which restricted their flexibility and their capacity to detect intricate visual patterns. Machine learning methods enabled computers to learn visual representations from data without hand-crafted rules, and the accuracy and flexibility of image analysis systems improved considerably. The major breakthrough was the emergence of deep learning, especially convolutional neural networks (CNNs), which found extensive application in image classification, object detection, and scene understanding. CNNs allowed machines to automatically learn hierarchical visual representations, from textures and shapes to semantic concepts, from large image datasets. This enabled visual computing systems to interpret images with a degree of sophistication that had not been possible with conventional computer vision systems.
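To make the contrast between rule-based and learned features concrete, the following minimal sketch applies a hand-designed Sobel edge filter to a toy grayscale image using only the Python standard library. The function names and the toy image are illustrative assumptions, not taken from any system discussed in this article. A CNN layer performs the same sliding-window operation, but its kernel values are learned from data instead of being written by hand.

```python
# Hand-designed (rule-based) edge detection with fixed Sobel kernels.
# Nothing here is learned from data; the kernel values are written by hand.

SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def filter3x3(image, kernel):
    """Slide a 3x3 kernel over a 2-D list of numbers (interior pixels only)."""
    height, width = len(image), len(image[0])
    out = []
    for r in range(1, height - 1):
        row = []
        for c in range(1, width - 1):
            acc = 0
            for dr in range(3):
                for dc in range(3):
                    acc += kernel[dr][dc] * image[r + dr - 1][c + dc - 1]
            row.append(acc)
        out.append(row)
    return out


def edge_magnitude(image):
    """Combine horizontal and vertical responses into a gradient magnitude."""
    gx = filter3x3(image, SOBEL_X)
    gy = filter3x3(image, SOBEL_Y)
    return [[(x * x + y * y) ** 0.5 for x, y in zip(rx, ry)]
            for rx, ry in zip(gx, gy)]


# Toy image (an assumption for illustration): dark left half, bright right half.
toy_image = [[0, 0, 0, 9, 9, 9] for _ in range(5)]
edges = edge_magnitude(toy_image)
```

The response is nonzero only in the columns next to the dark-to-bright boundary, which is what a hand-designed vertical edge detector is meant to find.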

3.2. Generative Models for Image Creation (GANs, Diffusion Models, Neural Rendering)

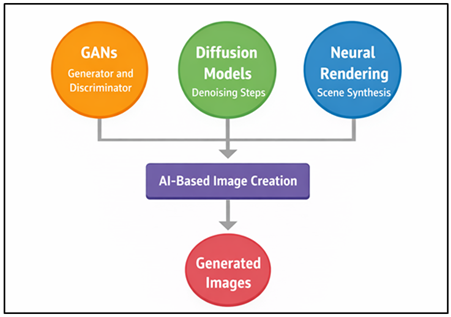

Generative models are among the most significant developments in AI-based visual media generation. These models are designed to learn the statistical distribution of image data and to produce new images that mimic the patterns present in the training datasets. The Generative Adversarial Network (GAN) is one of the earliest and most influential methods in this area. A GAN consists of two neural networks, a generator and a discriminator, that are trained together in a competitive manner. The generator synthesizes images, and the discriminator judges whether each image is real or synthetic. Through this adversarial training, GANs gradually acquire the ability to generate very realistic images. Figure 2 presents the three families of generative models used for AI image creation: GANs, diffusion models, and neural rendering. In the past few years, diffusion-based generative models have also become a strong competitor to GAN architectures.

Figure 2 Generative Models for AI-Based Image Creation Using GANs, Diffusion Models, and Neural Rendering
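For the GAN case, the competition between generator and discriminator is commonly written as a minimax objective. The formulation below is the standard one from the GAN literature and is not stated in this article; the symbols are assumptions introduced here: $G$ is the generator, $D$ the discriminator, $p_{\text{data}}$ the distribution of real images, and $p_z$ the noise distribution from which the generator's input $z$ is sampled.

$$
\min_{G}\,\max_{D}\; V(D, G) \;=\; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] \;+\; \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to increase $V$ by assigning high probability to real images and low probability to generated ones, while the generator tries to decrease $V$ by producing images that the discriminator accepts as real.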

Diffusion models create images by starting from random noise and progressively denoising it, over a sequence of steps performed by a neural network, into a coherent image. These models have shown remarkable ability to generate highly detailed and coherent images from textual prompts or latent representations.
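The noising and denoising relationship can be sketched on a toy example. The code below assumes the commonly used DDPM-style formulation, which this article does not specify: a clean signal $x_0$ is mixed with Gaussian noise $\epsilon$ as $x_t = \sqrt{\bar{\alpha}_t}\,x_0 + \sqrt{1-\bar{\alpha}_t}\,\epsilon$. The linear schedule values, the function names, and the four-pixel "image" are illustrative assumptions. No neural network is trained here; the true noise stands in for the network's prediction to show what a perfect denoiser would recover.

```python
import math
import random


def make_alpha_bar(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for a linear noise schedule."""
    alpha_bar, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar


def add_noise(x0, t, alpha_bar, rng):
    """Forward process: blend the clean signal with Gaussian noise at step t."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a = alpha_bar[t]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return xt, eps


def estimate_clean(xt, predicted_eps, t, alpha_bar):
    """Invert the forward equation, given a prediction of the added noise."""
    a = alpha_bar[t]
    return [(x - math.sqrt(1.0 - a) * e) / math.sqrt(a)
            for x, e in zip(xt, predicted_eps)]


rng = random.Random(0)
alpha_bar = make_alpha_bar(num_steps=1000)
pixels = [0.1, 0.5, 0.9, 0.3]  # toy "image" of four pixel values
noisy, true_eps = add_noise(pixels, t=500, alpha_bar=alpha_bar, rng=rng)

# A trained network would predict the noise. Passing the true noise shows
# that a perfect prediction recovers the clean pixels.
recovered = estimate_clean(noisy, true_eps, t=500, alpha_bar=alpha_bar)
```

In a real system the noise prediction is imperfect, which is one reason generation proceeds over many small denoising steps instead of a single inversion.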

3.3. Data-Driven Image Generation and Training Datasets

State-of-the-art AI image synthesis systems are based on data-driven learning. In contrast to classical computer graphics, which relies on explicit rules and mathematical modeling, AI-based image generation systems learn visual patterns directly from large sets of images. These datasets include millions of labeled or unlabeled images spanning different visual categories, such as objects, landscapes, human faces, artworks, and everyday scenes. From these datasets, machine learning models acquire complex associations among visual features, textures, shapes, and semantic meanings. Large datasets are important in determining the quality and variety of the generated images. The training data for AI image synthesis may consist of curated collections of images drawn from social repositories, digital collections, and web-based media. During training, neural networks analyze the statistical patterns in such datasets in order to construct internal representations of visual structures. These representations allow the model to create new images that resemble the visual features of the training images. Nevertheless, reliance on massive datasets also raises significant problems, including the dataset bias and intellectual property concerns noted in Section 2.

4. Transformation of Artistic Practices in the AI Era

4.1. Human–AI collaboration in creative processes

The introduction of AI into artistic production has ushered in a new paradigm of cooperation between human creators and computational systems. Unlike conventional artistic tools, which merely help to implement an artist's vision, AI systems can take an active part in the creative process: they generate visual concepts, propose stylistic alternatives, and even create entirely new works on the basis of learned patterns. In such a partnership, AI is not a passive tool but an interactive creative partner. Artists can use prompts, parameter control, and trial and error to direct AI models toward multiple visual interpretations of a concept. Human-AI interaction is frequently a cyclical process in which the artist provides initial instructions or conceptual input and the AI produces visual output that the artist then refines or edits. Through this process, artists can explore more aesthetic possibilities in less time. The variations produced by AI can open artistic directions the artist had not previously explored and encourage experiments in visual narration and visual design.

4.2. Algorithmic Creativity and Generative Visual Art

Algorithmic creativity is the application of computational algorithms to create artistic works, patterns, and visual compositions. It has attracted considerable interest in artificial intelligence and digital art, where generative models produce complex and aesthetically attractive works. Generative visual art relies on mathematical algorithms, probabilistic systems, and machine learning to generate images that change dynamically in response to a given set of parameters or to learned patterns in data. As AI generative models develop, artists are able to design algorithms that produce diverse visual output autonomously. Such output may include abstract patterns, stylized portraits, surreal landscapes, or experimental works that combine many artistic influences. The algorithmic process enables artists to search huge creative spaces, generating thousands of versions of a visual idea and choosing the most interesting for further refinement. Algorithmic creativity also challenges traditional principles of artistic originality and authorship. Rather than creating every detail of a work by hand, artists can specify the generative system that governs it and leave the algorithm to generate the visual output. This change emphasizes the artist as a designer of creative systems rather than a maker of single images.

4.3. New Workflows for Artists Using AI-Based Tools

The emergence of artificial intelligence in the creative sector has greatly transformed the workflows of artists and visual designers. Classical artistic processes were generally linear and included stages such as idea development, hand drawing or photographing, editing, and production. AI-driven creative processes, by contrast, may start with a conceptual or textual prompt that drives an image synthesis network. The generative system takes descriptive instructions from the artist and generates a series of visual interpretations of the requested concept. The outputs may then be revised through additional prompts, parameter adjustments, or post-processing. This process is iterative, which enables artists to try a variety of design options and polish their concepts through repeated experimentation. AI is also compatible with existing digital art programs, allowing artists to combine generative results with traditional editing techniques, including compositing, color grading, and visual effects.
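The iterative loop described above (prompt, generate, select, refine) can be sketched in a few lines. Everything in this example is hypothetical: `generate_candidates` is a stand-in for a call to an image synthesis system and returns placeholder records, not images, and the names `Candidate`, `refine_prompt`, and `iterative_session` are introduced only for illustration. It is a sketch of the control flow, not of any particular tool's API.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Placeholder for one generated image: the prompt and seed that made it."""
    prompt: str
    seed: int


def generate_candidates(prompt, num_variations):
    """Hypothetical stand-in for an image synthesis call: one record per seed."""
    return [Candidate(prompt=prompt, seed=s) for s in range(num_variations)]


def refine_prompt(prompt, note):
    """Append the artist's revision note to the prompt for the next round."""
    return f"{prompt}, {note}"


def iterative_session(initial_prompt, revision_notes, choose, num_variations=4):
    """Run prompt -> generate -> select, refining the prompt between rounds."""
    prompt = initial_prompt
    history = []
    for note in [None] + list(revision_notes):
        if note is not None:
            prompt = refine_prompt(prompt, note)
        candidates = generate_candidates(prompt, num_variations)
        history.append(choose(candidates))  # the artist's selection step
    return history


# Usage: the lambda stands in for the artist's judgment in each round.
picks = iterative_session(
    "foggy harbour at dawn",
    ["warmer light", "film grain"],
    choose=lambda candidates: candidates[-1],
)
```

The `choose` callback is kept separate from generation on purpose: it marks the point where human judgment enters the loop, and the selected outputs could then be passed on to conventional editing tools.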

5. Cultural and Aesthetic Implications of Post-Photography

5.1. Changing perception of realism and visual truth

The advent of AI-generated imagery has had a profound influence on the conventional understanding of realism and visual truth in photography and visual media. Traditionally, photography was widely viewed as a valid representation of reality because it captured images of actual physical surroundings by means of optical equipment. Photographs were regarded as objective visual records that documented events, people, and places with a high level of authenticity. The emergence of AI-based image synthesis tools challenges this established assumption, since it is now possible to produce extremely realistic images that are not based on real-world scenes. AI-created images can closely reproduce the features of photographs, including lighting, texture, perspective, and depth, so viewers find it increasingly difficult to distinguish real photographs from synthetic ones. Consequently, the idea of visual truth is becoming more complicated. Realistic-looking images may no longer correspond to real-world events or physical situations but instead to computational interpretations produced by machine learning models. This change has significant cultural implications for journalism, media communication, and visual storytelling.

5.2. Hyper-Realism and Synthetic Aesthetics

Artificial intelligence has opened aesthetic opportunities that go beyond the limits of traditional photography. Hyper-realism and synthetic aesthetics are among the most remarkable changes in post-photographic art. Many hyper-realistic AI-generated images show a level of detail, texture, and visual clarity that competes with or even surpasses traditional photographic images. Using advanced generative models, artists can produce scenes with perfect lighting conditions, complicated visual compositions, and extremely smooth textures that would not be available in the real world. Synthetic aesthetics are visual styles that have their roots in computational processes rather than in physical photographic space. Artificial intelligence can be used to combine various styles, create surreal imagery, and build fantastic scenes that break traditional visual conventions. This allows artists to make images that are both realistic and imaginatively abstract, resulting in visually compelling works that sit between photography, digital illustration, and generative art. Industries such as advertising, film production, game design, and digital media are also being affected by the emergence of hyper-realistic and synthetic aesthetics. Machine-generated images are being used to create concept art, film shots, and digital worlds.

5.3. Democratization of Image Creation through AI Tools

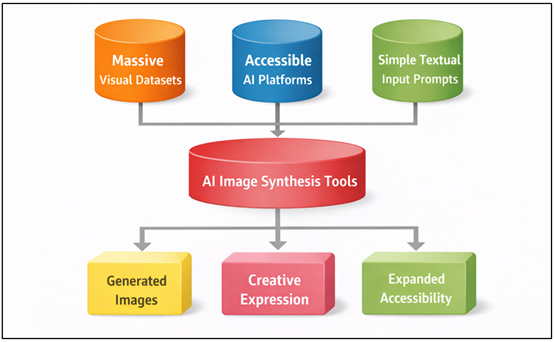

The democratization of image making in the digital age has been facilitated by the availability of AI-based image creation tools. Historically, photography and visual art production demanded technical expertise, costly equipment, and substantial training in areas such as lighting, composition, and digital editing. These obstacles restricted the production of quality images to trained photographers, designers, and visual artists. As Figure 3 illustrates, AI tools make digital image creation easily accessible and democratized. With the advent of AI-driven image generation algorithms, the barriers to producing advanced images have been dramatically reduced, because users can create such images from only a few textual inputs or through user-friendly interfaces.

Figure 3 Illustrating the Democratization of Image Creation through AI-Based Image Synthesis Tools

With AI, anyone with little technical skill can create a visually complex work of art, digital illustration, or photo-realistic image. These tools automatically create images with advanced visual properties, such as depth, lighting, and style variation, by using large generative models trained on large visual datasets. As a result, creative expression is no longer the prerogative of professionally trained people.

6. Future Directions of Post-Photography Art

6.1. Integration of AI with immersive technologies (AR, VR, XR)

The next wave of post-photography art is closely tied to immersive technologies, including Augmented Reality (AR), Virtual Reality (VR), and Extended Reality (XR). These technologies allow artists to go beyond static visuals and produce interactive visual experiences in which viewers participate in digitally generated environments in real time. Combined with AI-based image synthesis, immersive technologies offer a potent means of creating dynamic visual spaces that blend real and synthetic elements. AI can create believable textures, objects, and spaces that are incorporated into interactive platforms, enabling artists to build complex visual stories and environments. In AR, AI-generated imagery can be overlaid onto physical spaces to form hybrid spaces that combine the real and the virtual world. Likewise, VR enables artists to create entirely synthetic worlds, featuring AI-generated landscapes, characters, and visual forms, that viewers can explore interactively. The combination of AI with immersive technologies also increases the role of the viewer in artistic experiences. Rather than just observing pictures, users can engage with generative visual systems that react to user behavior, movement, or the environment. This interactive aspect opens up new storytelling and experiential art forms. As immersive technologies develop further, AI-based post-photographic art may evolve into multi-sensory visual environments that alter the way artistic imagery is produced and consumed.

6.2. Evolution of Hybrid Human–Machine Creative Ecosystems

Further advances in artificial intelligence are expected to bring about hybrid creative ecosystems in which human artists and intelligent systems work in closer collaboration. In these ecosystems, AI is not limited to the role of an image generation tool but acts as an adaptive creative collaborator that learns a user's artistic preferences, interaction patterns, and style. Such ecosystems may also develop into collaborations in which artists, designers, and technologists work with AI models on elaborate visual projects. Future creative ecosystems may be built on interactive systems that continually learn from artists' feedback and enable an AI model to adjust its output to changing artistic intent. This kind of adaptive learning might allow AI systems to acquire distinct stylistic attributes associated with a particular artist or artistic community. Artistic production may thus become a reciprocal interaction between human creativity and machine intelligence. Such ecosystems can also be collaboratively networked, with two or more artists engaging with shared generative systems. Cloud-based creative platforms might enable artists in different geographical regions to collaborate on visual art with the help of AI. These developments can support interdisciplinary cooperation across art, computer science, design, and media studies. Ultimately, creative production in human-machine ecosystems represents a new form of human-machine interaction that broadens creative capacities and redefines the role of technology in visual culture.

6.3. Emerging Research Areas in Computational Aesthetics

Computational aesthetics is an emerging interdisciplinary field that examines how computational techniques can be used to analyze, interpret, and produce aesthetically pleasing visual content. It gains significance in the context of post-photography, where artificial intelligence can be applied to reproduce artistic principles such as balance, symmetry, color harmony, and visual composition. Researchers expect that including aesthetic evaluation metrics in generative systems will enhance the artistic quality and visual consistency of AI-created images. Recent studies in computational aesthetics have aimed to create algorithms that assess artistic characteristics within images and predict human aesthetic preferences. Large datasets of artworks and photographs can be analyzed with machine learning models to identify the patterns that produce visual appeal. These insights can then be incorporated into generative models so that AI systems generate images closer to human perceptions of beauty and art. Another key research direction is the creation of explainable AI systems that can expose the aesthetic reasoning behind generated images. Understanding how AI models arrive at aesthetically pleasing results could give artists better control over the generation process. In addition, researchers are working on enabling AI systems to learn various cultural aesthetic traditions, so that generative art reflects a diversity of artistic perspectives.
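As a rough illustration of what a hand-written aesthetic evaluation metric might look like, the sketch below scores a grayscale image for left-right symmetry and horizontal balance. Both measures, their names, and their scaling are assumptions chosen for simplicity; they are not metrics proposed in this article, and research systems typically learn such scores from human ratings instead of defining them by hand.

```python
def mirror_symmetry(image):
    """Score left-right symmetry of a grayscale image with values in [0, 1].

    Returns 1.0 for a perfectly mirrored image and lower values as the
    left and right halves differ more.
    """
    total, count = 0.0, 0
    for row in image:
        width = len(row)
        for c in range(width // 2):
            total += abs(row[c] - row[width - 1 - c])
            count += 1
    return 1.0 - total / count if count else 1.0


def horizontal_balance(image):
    """Brightness-weighted centre of mass along x, rescaled to [-1, 1].

    0.0 means visual weight is centred; -1.0 or 1.0 means all brightness
    sits at the left or right edge.
    """
    width = len(image[0])
    mass = sum(sum(row) for row in image)
    if mass == 0 or width < 2:
        return 0.0
    moment = sum(value * c for row in image for c, value in enumerate(row))
    centre = moment / mass
    return 2.0 * centre / (width - 1) - 1.0


# Two toy images (assumptions for illustration only).
symmetric = [[0.0, 1.0, 1.0, 0.0],
             [1.0, 0.0, 0.0, 1.0]]
lopsided = [[0.0, 0.0, 0.0, 1.0],
            [0.0, 0.0, 0.0, 1.0]]

scores = {
    "symmetric": (mirror_symmetry(symmetric), horizontal_balance(symmetric)),
    "lopsided": (mirror_symmetry(lopsided), horizontal_balance(lopsided)),
}
```

Scores of this kind could, in principle, be used to rank candidate outputs of a generative model, although simple geometric measures capture only a small part of what viewers perceive as visual appeal.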

7. Conclusion

The emergence of AI-based technologies has greatly altered visual culture and artistic production and has given rise to the concept of post-photography. Whereas conventional photography creates an image of a real-world scene by optical capture, AI-driven image synthesis creates the image through computation. Generative technologies, including Generative Adversarial Networks, diffusion models, and neural rendering systems, have introduced new ways of creating highly realistic and imaginative images without physical cameras. These technological developments have widened the scope of visual media and reshaped how images are produced, perceived, and experienced. Post-photography has also influenced the creative arts by introducing novel modes of partnership between human artists and intelligent systems. Artists actively employ AI tools to experiment with algorithmic creativity, create variations of images, and establish experimental artistic processes that merge human intuition with machine-generated possibilities. This blend promotes interdisciplinary cooperation and enables artists to experiment with multi-layered visual forms and compositions that would previously have been hard to make. Moreover, the cultural and aesthetic implications of AI-generated images point to a changed perception of realism, authenticity, and visual truth in modern media. As synthetic imagery becomes ever more advanced, viewers need to re-examine how they interpret and rely on visual information.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Başarir, L. (2022). Modelling AI in Architectural Education. Gazi University Journal of Science, 35, 1260–1278. https://doi.org/10.35378/gujs.967981

Bird, J. J., and Lotfi, A. (2023). CIFAKE: Image Classification and Explainable Identification of AI-Generated Synthetic Images. arXiv preprint arXiv:2303.14126. https://doi.org/10.1109/ACCESS.2024.3356122

Du, Z., Zeng, A., Dong, Y., and Tang, J. (2024). Understanding Emergent Abilities of Language Models from the Loss Perspective. arXiv preprint arXiv:2403.15796.

Grover, R., Emmitt, S., and Copping, A. (2020). Critical Learning for Sustainable Architecture: Opportunities for Design Studio Pedagogy. Sustainable Cities and Society, 53, 101876. https://doi.org/10.1016/j.scs.2019.101876

Lambright, K. (2023). The Effect of a Teacher's Mindset on the Cascading Zones of Proximal Development: A Systematic Review. Technology, Knowledge and Learning, 1–17.

Li, Y., Liu, Z., Zhao, J., Ren, L., Li, F., Luo, J., and Luo, B. (2024). The Adversarial AI-Art: Understanding, Generation, Detection, and Benchmarking. arXiv preprint arXiv:2404.14581. https://doi.org/10.1007/978-3-031-70879-4_16

Liu, X., Kang, J., Ma, H., and Wang, C. (2021). Comparison Between Architects and Non-Architects on Perceptions of Architectural Acoustic Environments. Applied Acoustics, 184, 108313. https://doi.org/10.1016/j.apacoust.2021.108313

Mohamed, N. A. G., and Sadek, M. R. (2023). Artificial Intelligence as a Pedagogical Tool for Architectural Education: What Does the Empirical Evidence Tell Us? MSA Engineering Journal, 2, 133–148. https://doi.org/10.21608/msaeng.2023.291867

Png, W. H., Aun, Y., and Gan, M. (2024). FeaST: Feature-Guided Style Transfer for High-Fidelity Art Synthesis. Computers and Graphics, 122, 103975. https://doi.org/10.1016/j.cag.2024.103975

Ramesh, A., Dhariwal, P., Nichol, A., Chu, C., and Chen, M. (2022). Hierarchical Text-Conditional Image Generation with CLIP Latents. arXiv preprint arXiv:2204.06125.

Sheela, M. A. A., Amulya, K., Lokesh, D., Yesubabu, K., and Ajay, P. (2025). Federated Multimodal Language Recognition: A Deep Learning Approach for Real-Time Applications. International Journal of Recent Advances in Engineering and Technology, 14(1), 17–26.

Short, C. E., and Short, J. C. (2023). The Artificially Intelligent Entrepreneur: ChatGPT, Prompt Engineering, and Entrepreneurial Rhetoric Creation. Journal of Business Venturing Insights, 19, e00388. https://doi.org/10.1016/j.jbvi.2023.e00388

Vartiainen, H., and Tedre, M. (2023). Using Artificial Intelligence in Craft Education: Crafting with Text-to-Image Generative Models. Digital Creativity, 34, 1–21. https://doi.org/10.1080/14626268.2023.2174557

Wei, J., Tay, Y., Bommasani, R., Raffel, C., Zoph, B., Borgeaud, S., Yogatama, D., Bosma, M., Zhou, D., Metzler, D., et al. (2022). Emergent Abilities of Large Language Models. Transactions on Machine Learning Research, 2022.

Xu, Y., Xu, X., Gao, H., and Xiao, F. (2024). SGDM: An Adaptive Style-Guided Diffusion Model for Personalized Text to Image Generation. IEEE Transactions on Multimedia, 26, 9804–9813. https://doi.org/10.1109/TMM.2024.3399075

Zhu, M., Chen, H., Yan, Q., Huang, X., Lin, G., Li, W., Tuv, Z., Hu, H., Hu, J., and Wang, Y. (2023). GenImage: A Million-Scale Benchmark for Detecting AI-Generated Image. arXiv preprint arXiv:2306.0857. https://doi.org/10.52202/075280-3398

This work is licensed under a: Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.