ShodhKosh: Journal of Visual and Performing Arts ISSN (Online): 2582-7472

Deepfakes and Visual Journalism: Reconfiguring Trust, Realism, and Authenticity in Digital Visual Culture

Shopita Khurana 1, Dr. Kamaljeet Kaur 2, Sukant Tiwari 3, Dr. Vikash Kumar 4, Dr. Ashok Bairagi 5

1 Research Scholar, University Institute of Media Studies, Chandigarh University, Mohali, Punjab, India

2 Professor, University Institute of Media Studies, Chandigarh University, Mohali, Punjab, India

3 Junior Research Fellow, Department of Mass Communication and Journalism, School of Media and Communication, Babasaheb Bhimrao Ambedkar University, Lucknow, India

4 Assistant Professor, Department of Journalism and Mass Communication, Vivekananda Global University, Jaipur, Rajasthan, India

5 Assistant Professor, Head of Department, School of Cinema, AAFT University of Media and Arts, Raipur, India

6 Professor, University Institute of Media Studies, Chandigarh University, Mohali, Punjab, India

ABSTRACT

The advent and spread of deepfake technology have radically changed the landscape of visual journalism and raised pressing questions of credibility, realism, and authenticity in digital visual culture. This paper investigates the impact of AI-produced synthetic media on audience perception and on the credibility of visual journalism. Drawing on a synthesized theoretical framework of post-truth theory, visual culture theory, and trust theory, the study examines how exposure to deepfakes affects perceived realism, perceived authenticity, and media literacy, and how these three variables together influence trust in visual journalism. It uses a mixed-method design that combines a qualitative content analysis of visual media, a quantitative survey of audience perception, and case studies of real-life deepfake incidents. The findings suggest that deepfakes have a paradoxical influence: on the one hand, they enhance visual realism and immersion; on the other, they reduce authenticity and erode viewers' trust. The paper further identifies the moderating role of technological awareness, platform credibility, and regulatory systems in users' reactions to synthetic media. The results show that traditional verification systems are increasingly inadequate for the pace at which deepfake tools evolve, and that sophisticated detection systems, ethical policies, and codes of practice are therefore needed. The paper also highlights the importance of media literacy in enabling consumers of visual information to make informed judgments and resist misinformation. The study addresses a gap in the literature between the technological development of deepfake creation and the sociocultural implications of this technology for journalism. It offers a detailed account of how trust in visual media is being reorganized and provides practical recommendations for journalists, policymakers, and technology developers.

Received 23 December 2025

Accepted 17 January 2026

Published 28 March 2026

Corresponding Author: Shopita Khurana, spk16062014@gmail.com

DOI: 10.29121/shodhkosh.v7.i2s.2026.7355

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License.

With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Deepfakes, Visual Journalism, Media Trust, Authenticity, Realism, Digital Visual Culture, AI-Generated Content, Media Literacy, Misinformation, Deep Learning

1. Introduction

Deepfakes, generated with models such as Generative Adversarial Networks (GANs) and autoencoders, allow images and audio to be manipulated or generated realistically. These technologies can replace faces, alter expressions, and create entirely new scenes that closely resemble real recordings. Although deepfake technology has potential in the creative industries, entertainment, and education, its abuse is highly dangerous, especially in journalism. The capacity to produce hyper-realistic media content disrupts the conventional premise that seeing is believing, thereby destabilizing the epistemological principles of visual truth Cenamor et al. (2019).

The evolution of deepfakes has been supported by the broader trend of the post-truth era, in which misinformation and disinformation play an ever-growing role in social discourse. In this context, visual journalism is experiencing an unprecedented credibility crisis Chen et al. (2024), Falahat et al. (2020). News organizations that once relied on visual evidence to build authority now face audiences that are increasingly doubtful about digital images. This loss of trust affects not only audiences but also democratic processes, since manipulated media can be used to sway elections, provoke social unrest, and ruin reputations.

Furthermore, the combination of social media and deepfake technologies has increased the speed at which manipulated content spreads. Platforms such as Twitter, YouTube, and Instagram spread information quickly, and virality often takes priority over content validation. As a result, deepfake content can reach millions of users before fact-checking mechanisms are triggered, which amplifies its potential effects. This makes strong detection systems, ethical standards, and regulatory systems critical for reducing the risk posed by synthetic media.

In theoretical terms, the emergence of deepfakes requires a redefinition of major concepts of visual culture, including realism and authenticity. Realism, historically linked with truth, is becoming disconnected from it because of the possibilities of AI-based media. Likewise, authenticity, which previously relied on the indexical connection between an image and its physical counterpart, is now challenged in an online world where images can be produced artificially without any physical grounding. This change forces researchers and professionals to rethink how visual evidence is interpreted and validated in modern journalism Frishammar et al. (2018).

This paper focuses on how trust in visual journalism can be reconfigured in the era of deepfakes. As synthetic media become more advanced and more widely available, it becomes difficult to distinguish original from manipulated content. This raises important questions about the future of journalistic integrity, the contribution of technology to protecting the truth, and the obligation of media institutions to maintain public trust. Accordingly, the main research objectives are: (1) to examine how deepfake technology changes the credibility of visual journalism; (2) to investigate how realism and authenticity are being renegotiated in digital visual culture; (3) to assess how audience perception and trust change in the presence of synthetic media; and (4) to suggest ways of mitigating the risks of deepfakes, including technological, ethical, and policy-based interventions. A further aim of the study is to bridge computer science, communication studies, and visual culture, and so to close the gap between technological development and media theory.

2. Existing Literature

Deepfake technology and visual journalism have become an urgent topic of cross-disciplinary research spanning artificial intelligence, media studies, communication theory, and digital ethics. This section reviews the available scholarship to understand how deepfakes are transforming concepts of realism, authenticity, and trust in modern visual culture. Visual journalism has historically played a significant role in shaping how people perceive events by offering apparently straightforward depictions of reality. Photographic and video images have long been linked to objectivity and truth on the basis of what scholars call the indexicality of images, that is, their natural association with real-world events. Early research in media theory emphasized that visual media contribute to perceived credibility and emotional attachment, which legitimizes the power of news media. With the replacement of analog media by digital media, however, this indexical relationship has been undermined. Digital editing tools introduced the potential for manipulation, but such changes could usually be detected or required special skills. The current stage of AI-based image and video generation marks a clear departure, since deep learning algorithms can produce highly realistic pictures and videos that call into question the basic truth claim of the image Grant (2020).

Deepfakes are created with advanced machine learning techniques, chiefly Generative Adversarial Networks (GANs), which consist of two neural networks, a generator and a discriminator, trained to compete against each other so that the outputs become progressively more realistic. Other techniques, such as autoencoders and diffusion models, further improve the quality and availability of synthetic media generation. Recent reports note that deepfake tools are increasingly accessible and easy to use, lowering the barrier to entry for both legitimate and malicious applications. Researchers have identified uses in entertainment and film production, education, and accessibility. The same technologies are, however, increasingly used to misinform, manipulate politics, and commit identity fraud. The realism of modern deepfakes can make such fakes hard to detect, so advanced forensic methods and AI-based detection algorithms need to be developed Helfat and Raubitschek (2018). Trust is one of the cornerstones of journalism and determines how audiences receive and consume news material. Conventional theories of media credibility focus on source credibility, accuracy, transparency, and consistency. The digital era has, however, fragmented trust further because of the spread of online platforms and user-generated content.
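The competition between generator and discriminator described in this section is commonly written as a minimax objective. The expression below is the standard formulation from the GAN literature rather than anything stated in this paper; the symbols $G$ (generator), $D$ (discriminator), $p_{\text{data}}$ (distribution of real images) and $p_z$ (noise prior) are introduced here only for illustration.

$$
\min_{G}\,\max_{D}\; V(D, G) \;=\; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] \;+\; \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to raise $V$ by scoring real samples near 1 and generated samples near 0, while the generator tries to lower it by producing samples that the discriminator scores as real. This is the sense in which the two networks "compete", and it is also why improvements in detection can, in principle, feed back into more convincing generation.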

Researchers argue that deepfakes widen this trust gap by creating uncertainty about visual evidence. Even genuine media can be viewed as possibly manipulated, which produces the so-called liar's dividend, whereby people can dismiss genuine facts as fakes. Empirical research shows that audiences are becoming more skeptical, with some groups, especially younger audiences, more concerned about digital manipulation technologies. This loss of trust is a serious challenge for journalists, who are now responsible not only for fact-checking information but also for demonstrating authenticity to the audience. Deepfakes as an ethical issue have been widely discussed in the literature. Concerns include the misuse of synthetic media for defamation, harassment, and political propaganda. Non-consensual explicit content, impersonation of prominent personalities, and fabricated stories have been generated with deepfakes, raising profound questions about privacy, consent, and accountability. Journalists also face ethical dilemmas over the use of AI-generated images in news coverage. Although synthetic media can be applied responsibly in illustrative or reconstructive form, its potential to mislead audiences requires disclosure and ethical standards. Several studies support the introduction of industry standards such as watermarking, provenance tracking, and transparent labeling of AI-generated content Kiyabo and Isaga (2020), Liao et al. (2024).

Researchers have developed various detection methods in response to the increasing threat posed by deepfakes. These include analysis of visual artifacts, inconsistencies in lighting and shadows, and physiological cues such as eye blinking. Despite such improvements, deepfake detection remains a complex and dynamic problem. As generative models develop, their outputs become harder to detect, and the arms race between the creation and detection of deepfakes continues. Researchers point to the need for interdisciplinary solutions that integrate technological innovation with policy interventions and media literacy efforts. Moreover, policy and regulatory responses to deepfakes are still in their infancy and differ across regions and jurisdictions Mukherjee and Mukherjee (2022).

Table 1 Summary of Existing Methods

| Technique / Methodology | Application Area | Key Contribution | Limitations |
|---|---|---|---|
| Deep learning-based survey (CNN, RNN) Nayak et al. (2022) | Media forensics | Comprehensive taxonomy of detection techniques | Limited real-world dataset validation |
| CNN-based classification Patil et al. (2025) | Image and video authenticity | Identifies strengths/weaknesses of detection models | Poor generalization to unseen data |
| Transformer + multimodal fusion Sarwar et al. (2024) | Visual media verification | Captures spatial-frequency inconsistencies effectively | High computational cost |
| Signal processing + ML hybrid Singh and Kaur (2021) | Digital media security | Enhances detection via feature extraction techniques | Limited scalability in real-time systems |
| GAN, VAE, diffusion models Sousa et al. (2020) | AI-generated media | Benchmarking datasets and detection models | Rapid evolution makes benchmarks outdated |
| Audio-visual multimodal learning Tiberius et al. (2021) | Fake media detection | Shows superiority of multimodal detection methods | Integration complexity |
| Hybrid deep learning model [16] | Image/video forensics | Improves temporal consistency detection | Computational overhead |

As Table 1 shows, recent studies on deepfakes concentrate mostly on detection methods that rely on advanced AI models, including CNNs, transformers, and multimodal learning models. Most studies focus on improving the accuracy of detecting manipulated images and videos, with particular attention to spatial, temporal, and frequency-based features. One important lesson is the growing trend toward multimodal models (audio + video), which detect manipulation better than single-modality models. Hybrid models (e.g., CNN + BiLSTM) are also becoming popular for capturing both visual and temporal inconsistencies in deepfake content. Nevertheless, the research consistently shows several shortcomings: poor generalization to new or unseen deepfake methods; high computational complexity, which makes real-time detection challenging; and the rapid development of deepfake generation algorithms, which sustains a constant arms race.

3. Theoretical Framework

The development of deepfake technology calls for a solid theoretical base for understanding it in relation to visual journalism, especially with regard to trust, realism, and authenticity. In a post-truth environment, viewers have little option but to question the veracity of any visual media, which produces a more general crisis of epistemic credibility. Complementing this view is visual culture theory, which studies how meaning is constructed through images and how images shape society's perceptions. Traditionally, visual journalism has rested on the indexicality of the image, whereby photographs and videos are regarded as direct records of reality. Deepfake technology breaks this connection by creating lifelike images that lack a physical referent. Realism thus becomes a matter of perception rather than a claim to truth, which changes the way audiences view and respond to visual media.

Trust theory completes the conceptual framework. In digital communication, trust is treated as a result of credibility, reliability, and transparency. Conventional journalism has built credibility through editorial checks and balances and institutional accountability. The prevalence of deepfakes undermines such mechanisms by making verification uncertain. The problem is aggravated by what has been termed the liar's dividend, whereby individuals can dismiss credible evidence as fake and thereby weaken accountability. Trust therefore no longer rests solely on the source or on the established integrity of the medium itself. The study addresses these complexities by developing a theoretical framework that links deepfake technology to the key variables of visual journalism. Deepfake exposure is the central independent variable and affects the mediating factors of perceived realism, perceived authenticity, and media literacy. These in turn affect audience trust and credibility judgments, the dependent variables. The strength and direction of these relationships are expected to be moderated by variables such as technological awareness, platform credibility, and regulatory frameworks. The interdependence of these variables suggests a dynamic process in which deepfakes raise visual realism while simultaneously lowering the impression of authenticity, which leads to a loss of trust. The model also accounts for the potentially significant role of countermeasures (detection technologies and media literacy programs) in reducing these effects. By combining technological and sociocultural aspects, the framework provides a holistic view of how deepfakes distort the principles of visual journalism.

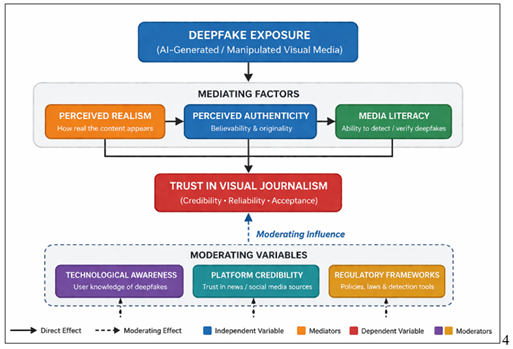

Figure 1 Proposed Framework

Figure 1 shows that the starting point affecting audience perception is deepfake exposure (AI-created or manipulated visual content). It acts on three mediating factors, perceived realism, perceived authenticity, and media literacy, which determine how users perceive and judge visual content. Together, these mediators influence the degree of trust in visual news, including its credibility, reliability, and acceptance. In addition, moderating variables, including technological awareness, platform credibility, and regulatory frameworks, affect the extent to which deepfakes influence trust. Overall, the model shows that deepfakes can make content appear more real but in most cases diminish its authenticity, which in turn reduces trust in online visual content.
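One way to make the relationships in Figure 1 concrete is to write them as a moderated mediation model. The specification below is an illustrative assumption, not a model stated by the authors: it assumes linear effects, and every symbol is introduced here only for exposition. $X$ denotes deepfake exposure, $M_1, M_2, M_3$ denote perceived realism, perceived authenticity, and media literacy, $W_1, W_2, W_3$ denote technological awareness, platform credibility, and regulatory frameworks, and $Y$ denotes trust in visual journalism.

$$
\begin{aligned}
M_j &= \alpha_j + a_j X + \varepsilon_j, \qquad j = 1, 2, 3,\\
Y &= \beta_0 + c' X + \sum_{j=1}^{3} b_j M_j + \sum_{k=1}^{3} d_k W_k + \sum_{k=1}^{3} g_k\,(X \cdot W_k) + \varepsilon_Y .
\end{aligned}
$$

Under this sketch, the indirect effect of exposure on trust through mediator $j$ is the product $a_j b_j$, and the interaction coefficients $g_k$ capture how each moderator strengthens or weakens the effect of exposure. The paradox described in the text would correspond to $a_1 > 0$ (exposure raises perceived realism) together with $a_2 < 0$ (exposure lowers perceived authenticity).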

4. Methodology

This study uses a mixed-method research design to examine the effects of deepfake technology in visual journalism on three dimensions: trust, realism, and authenticity. Combining qualitative and quantitative methods gives better insight not only into the quantifiable impact of deepfakes on audience perception but also into the context and meaning associated with digital visual culture. The methodology is designed to fit the conceptual framework proposed in Section 3, with emphasis on the interconnection among deepfake exposure, the mediating variables, and trust in visual journalism. The study begins with a qualitative content analysis of selected samples of visual media, covering both genuine and deepfake-created news content. The samples are diverse and relevant, as they are curated from publicly available datasets, social media platforms, and verified news archives. In parallel, audience perception and trust are measured with a quantitative survey. A structured questionnaire collects responses on perceived realism, perceived authenticity, media literacy, and trust in visual journalism. The survey includes Likert-scale questions, scenario-based assessments, and visual materials in which participants are asked to distinguish genuine from fake material. The target population is digital media users, including students, professionals, and general users who frequent online news sites. Sampling is stratified to represent all age groups, education levels, and levels of technological awareness.
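The stratified sampling step can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not the authors' procedure: it assumes proportional allocation (the same fraction drawn from every stratum), and the function name `stratified_sample`, the `age_group` field, the 10% fraction, and the sampling frame are all hypothetical.

```python
import random
from collections import defaultdict


def stratified_sample(respondents, stratum_of, fraction, seed=0):
    """Draw the same fraction of respondents from every stratum."""
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    strata = defaultdict(list)
    for person in respondents:
        strata[stratum_of(person)].append(person)

    sample = []
    for label in sorted(strata):
        members = strata[label]
        # Keep at least one respondent so that no stratum disappears.
        n = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, n))
    return sample


# Hypothetical sampling frame: 50 / 30 / 20 respondents in three age groups.
frame = (
    [{"id": i, "age_group": "18-24"} for i in range(50)]
    + [{"id": i, "age_group": "25-40"} for i in range(50, 80)]
    + [{"id": i, "age_group": "41+"} for i in range(80, 100)]
)
sample = stratified_sample(frame, lambda p: p["age_group"], fraction=0.1)
```

To stratify on several characteristics at once, as the text describes, `stratum_of` could return a tuple such as `(age_group, education, awareness_level)`, assuming those fields exist in the frame.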

The survey is administered online to make it accessible and to reach more respondents. The moderating variables are operationalized through survey items and analyzed to identify their effect on the relationship between exposure to deepfakes and trust. For example, people with greater media literacy or knowledge of deepfake technology may be more skeptical and less susceptible to manipulated information. Similarly, trust in particular platforms or awareness of regulatory action may influence how users perceive visual data. Reliability and validity are supported by several procedures: pilot testing of the questionnaire, computation of Cronbach's alpha to establish internal consistency, and the use of established scales from prior research. Content validity is ensured by aligning survey items with the conceptual constructs outlined in the theoretical framework. In addition, the use of multiple data sources and methods of analysis contributes to methodological rigor and robustness.
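Cronbach's alpha for a multi-item scale can be computed directly from its usual definition, $\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_i \sigma_i^2}{\sigma_T^2}\right)$, where $k$ is the number of items, $\sigma_i^2$ the variance of item $i$, and $\sigma_T^2$ the variance of respondents' total scores. The sketch below is a minimal standard-library illustration; the function name `cronbach_alpha` and the Likert responses are hypothetical and are not data from this study.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of rows, one row of item scores per respondent."""
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")

    # Transpose rows so that each tuple holds all answers to one item.
    items = list(zip(*responses))
    item_variance_sum = sum(variance(scores) for scores in items)
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")

    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


# Hypothetical answers of five respondents to a four-item trust scale (1-5 Likert).
trust_items = [
    [4, 5, 4, 4],
    [2, 2, 3, 2],
    [5, 4, 5, 5],
    [3, 3, 3, 4],
    [1, 2, 1, 2],
]
alpha = cronbach_alpha(trust_items)
```

The function uses sample variances throughout; because the same estimator appears in the numerator and the denominator, using population variances instead would give the same alpha.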

5. Impact of Deepfakes on Visual Journalism

Deepfake technology has substantially changed visual journalism, both introducing new opportunities and raising serious concerns. Because artificial intelligence can make synthetic media look increasingly real and lifelike, the classic principles of journalism (truth, credibility, and authenticity) are under growing pressure. This section discusses the complex implications of deepfakes for visual journalism, including news production, audience perception, and the news system in general. The effects of deepfakes on news production are among the most immediate. Journalists and media houses have traditionally used visual evidence, in the form of photographs and videos, as an accepted depiction of what actually happened in the field. The accessibility of deepfake technologies complicates this process by creating doubts about the authenticity of visual information. Newsrooms must now carry out additional verification checks, such as digital forensics or AI detection tools, before publishing visual material. This not only makes news production more time-consuming and resource-intensive but also pressures journalists to acquire technical skills beyond those of a conventional journalist. Deepfakes have also changed how news is propagated and consumed, especially via social media. Because digital content spreads virally, manipulated media can reach a huge number of people within a short time, often before it has been verified or debunked. This amplifies the effects of misinformation and false news, since deepfakes can be used to manipulate the public, distort the historical record, or ruin reputations. The pace and scale of the spread make it hard for journalists and fact-checkers to act effectively, thereby undermining the gatekeeping functions of media institutions.

The most significant impact of the spread of deepfakes is on audience perception and trust. Visual journalism has always relied on the supposition that images and videos are direct evidence of reality. Deepfakes break this assumption by generating content that appears real yet is completely fabricated. Consequently, visual media are falling out of favour and the credibility of news sources is dwindling. This distrust is not limited to manipulated content but also extends to real media, which leads to a wider crisis of trust. The problem is further complicated by the so-called liar's dividend, whereby people can reject real evidence by claiming that it is fake and so avoid accountability. Moreover, deepfakes lead to a re-conceptualization of realism and authenticity in visual culture. Realism is no longer tied to the faithful depiction of objects; it is now shaped by technological capability rather than by reality. Deepfake material can approach or even exceed the visual quality of genuine recordings, making it hard to tell genuine from synthetic media. Meanwhile, authenticity, traditionally associated with the source and integrity of content, is becoming progressively weaker. This change produces a paradox in which highly realistic images can be less authentic than they appear, which calls into question the epistemological basis of visual journalism. The influence of deepfakes can also be seen in a number of real-life cases in which manipulated media were used to affect public discourse. Deepfake videos of political figures, celebrities, and public officials have demonstrated the capacity of synthetic media to mislead viewers and stir controversy. In some instances they have been used as propaganda, in others as satire or entertainment. Whether deliberate or not, such instances expose the blurred line between legitimate and deceptive uses of AI-generated material.

Journalists have to weigh the ethics of using synthetic media and ensure that viewers are fully informed about the nature of the content. Failure to do so may further erode trust and compromise journalism. The use of deepfakes in media therefore needs to be governed by stronger ethical guidelines and industry standards. Lastly, the emergence of deepfakes has prompted technological and institutional countermeasures. Policymakers, media houses, and technology firms are investing more in detection services, verification systems, and regulatory policies to curb the proliferation of doctored content. Digital watermarking, blockchain-based content authentication, and AI-driven forensic analysis are among the initiatives intended to help restore trust in visual media. Nevertheless, these efforts face a constant struggle because deepfake technologies develop very quickly and the contest between creation and detection continues.
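The idea behind content authentication can be illustrated with a small tamper-evident provenance log. The sketch below is a deliberately simplified stand-in, a local hash chain rather than a distributed blockchain or any existing provenance standard, and it is not a method described by the authors. The function names, record fields, and example actions are all hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record):
    """SHA-256 over a canonical JSON encoding of the record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(chain, media_bytes, action):
    """Add a provenance entry that commits to the media and to the prior entry."""
    body = {
        "media_sha256": hashlib.sha256(media_bytes).hexdigest(),
        "action": action,
        "prev_hash": chain[-1]["entry_hash"] if chain else GENESIS,
    }
    entry = dict(body, entry_hash=_digest(body))
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True only if every entry is intact and correctly linked."""
    prev = GENESIS
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True


# Hypothetical history of one news photograph.
log = []
append_entry(log, b"raw camera bytes", "captured by staff photographer")
append_entry(log, b"cropped image bytes", "cropped for publication")
```

Because each entry stores the hash of the previous one, silently editing an earlier record changes its hash and breaks every later link, which `verify_chain` detects. A scheme of this kind can only show that a file matches a logged history; it cannot by itself show that the original capture was truthful.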

6. Comparative Analysis and Discussion

The rapid emergence of deepfake technology calls for a comparison between traditional visual journalism and AI-created visuals in order to understand its implications for credibility, authenticity, and realism. This section critically examines the differences, overlaps, and new contradictions between the two paradigms and then discusses the broader technological, ethical, and policy-based aspects. The nature of content creation and authenticity is one of the key areas of comparison. Traditional visual journalism is grounded in real-life events: a picture or video is captured with a camera and is assumed to be indexically related to reality. This association has historically given it a measure of realism and authority. Deepfake content, by contrast, is produced artificially by machine learning models and often has no reference to any real event. Although such content may achieve impressive visual realism, it lacks inherent authenticity. This divergence highlights a new paradigm of visual communication in which realism is no longer a guarantee of truth. The second dimension is the perception of realism versus credibility. Traditional media, although sometimes manipulated or biased, retain audience trust because of institutional responsibility and long-established editorial procedures. Deepfakes, on the other hand, introduce a hyper-reality that can deceive even a discerning audience. This produces a contradiction: the higher the visual quality of synthetic media, the less credible all visual material becomes. The comparative analysis shows that, whereas traditional journalism is concerned with truth that can be established with evidence, deepfake technology is concerned with visual plausibility, which comes at the expense of factual accuracy.

The role of technology also differs between the two fields. Traditional journalism treats technology as a means to capture, edit, and disseminate content, with human intervention as the most important element. The opposite holds for deepfake generation, where technology performs most of the production and the human element is reduced to a minimum. This shift raises serious questions of authorship, responsibility, and control. At the same time, both worlds increasingly rely on advanced technologies, journalists to verify and fact-check and deepfake creators to develop content, which sets the technologies against each other and complicates the media space. The arms race between deepfake creation and detection technologies is the most important factor in this debate. Detection systems must be continuously improved as generative models grow more sophisticated, in order to identify subtle anomalies in synthetic media. The potential of detection tools based on artificial intelligence, signal processing, and blockchain verification has not yet been fully demonstrated, and they are not yet on the same level as rapidly developing deepfake techniques. This constant competition shows that purely technological measures are insufficient and need to be supplemented with other strategies, including policy frameworks and social awareness campaigns. The comparison also raises many ethical difficulties. Traditional journalism follows ethical principles that demand accuracy, fairness, and accountability. Deepfake technology, however, operates in a relatively unregulated field, where ethical standards often take second place to technological development or malicious intent. The misuse of deepfakes for misinformation, defamation, and propaganda is also a serious concern because of the loss of trust and the potential damage to society and individuals. Even in legitimate uses, e.g. entertainment or education, the lack of clear disclosure may blur the distinction between ethical and unethical use.

Regarding regulatory options, governments and international organizations are beginning to recognize the threat of deepfakes and to consider regulatory strategies against it. These include laws on the improper use of synthetic media, labelling of AI-generated content, and efforts to improve digital literacy. However, regulatory initiatives face problems of jurisdiction and enforcement, as well as the rapid pace of technological change. Traditional journalism, by contrast, already operates under regulatory control and professional standards that provide a more structured standard of accountability.

The comparative analysis also underlines the value of media literacy and audience awareness. In a visual culture where images can no longer be taken at face value, consumers must acquire critical skills to evaluate the veracity of media. The ability to question sources, to notice inconsistencies in context, and to understand the capabilities of AI technologies will be paramount to navigating the digital information space. This transformation places even greater responsibility on educational and media institutions to promote digital literacy.

The juxtaposition of traditional visual journalism with deepfake-generated content shows that realism, authenticity, and trust are interconnected in complex ways. On the one hand, deepfakes open new possibilities for content creation; on the other, they cast doubt on the validity of visual media and strain existing ethical and regulatory frameworks. These issues can be resolved only through a comprehensive approach that combines technological innovation, ethical standards, policy interventions, and public education. Only in this way can confidence in visual journalism be maintained alongside the responsible use of new technologies in the digital age.

Table 2 Comparative Analysis Table: Traditional Visual Journalism Vs Deepfake-Generated Content

| Parameter | Traditional Visual Journalism | Deepfake-Generated Content |
|---|---|---|
| Source of Content | Real-world captured events | AI-generated / synthetic |
| Authenticity | High (indexical to reality) | Low (no real-world reference) |
| Realism | Moderate to High | Very High (hyper-realistic) |
| Credibility | Established through editorial processes | Questionable / variable |
| Production Process | Human-driven (camera, reporting) | AI-driven (GANs, deep learning) |
| Verification | Fact-checking, editorial review | Requires forensic AI tools |
| Speed of Dissemination | Moderate (verified publishing) | Very fast (viral spread via social media) |
| Ethical Control | Strong journalistic ethics | Weak / emerging regulations |
| Risk of Misuse | Low to Moderate | Very High (misinformation, propaganda) |
| Audience Trust | Traditionally high | Declining / skeptical perception |

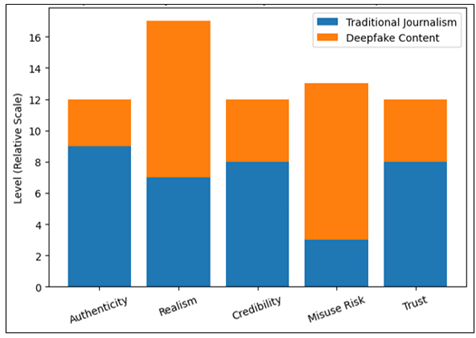

Figure 2 Comparative Analysis: Traditional Journalism Vs Deepfake Content

Table 2 identifies the core differences between traditional visual journalism and deepfake-generated content. It shows that traditional journalism is based on real-life events, which, together with established editorial procedures, supports a high degree of authenticity, credibility, and audience trust. Deepfake content, by contrast, is artificially generated: it is highly realistic visually but lacks authenticity and reliability. The table also shows that deepfakes carry much greater risks of misuse, particularly for misinformation and propaganda. The bar chart in Figure 2 supports these differences visually: traditional journalism scores higher on authenticity, credibility, and trust, whereas deepfake content scores higher on realism and misuse risk. Together, the table and the chart convey the central insight of the research: deepfakes make visual content more realistic but less authentic and credible, which poses a significant challenge to visual journalism.

7. Conclusion and Future Directions

The rapid development of deepfake technology has brought a paradigm shift to visual journalism, and long-held assumptions about trust, realism, and authenticity have been severely questioned. This paper has examined how AI-generated synthetic media disrupts the connection between visual evidence and truth, transforming viewer perception and media trustworthiness. Its results show that although deepfakes have greatly increased visual realism, they also undermine authenticity, which contributes to growing distrust of digital visual information. One of the major findings of this study is that exposure to deepfakes has both a direct and an indirect effect on trust in visual journalism. Exposure shapes how viewers interpret and rate visual information, and this effect is mediated by perceived realism, perceived authenticity, and media literacy. Although high realism may at first attract more engagement, the lack of authenticity leads to a decrease in trust. This paradox illustrates a severe problem for contemporary journalism: the technologies that improve visual quality may also destroy credibility. The paper also highlights the role of moderating factors such as technological awareness, platform credibility, and regulatory frameworks. More media-literate people who were aware of deepfake technologies were more likely to be skeptical and better able to detect manipulated content. Similarly, the credibility of particular platforms and the presence of control mechanisms are key factors that determine audience response. These findings suggest that the deepfake challenge cannot be resolved with technology alone; educational and institutional measures must also be taken into account.
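The direct and indirect effects described above can be written in a conventional linear parallel-mediation form. This is only an illustrative sketch under stated assumptions, not the model estimated in this study: the symbols are hypothetical, with $X$ denoting deepfake exposure, $M_1$, $M_2$, $M_3$ denoting perceived realism, perceived authenticity, and media literacy, and $Y$ denoting trust in visual journalism.

```latex
% Illustrative notation only (assumed, not taken from the study)
\begin{align}
  M_j &= i_j + a_j X + \varepsilon_j, \qquad j = 1, 2, 3 \\
  Y   &= i_Y + c' X + \sum_{j=1}^{3} b_j M_j + \varepsilon_Y \\
  \text{indirect effect} &= \sum_{j=1}^{3} a_j b_j, \qquad
  \text{total effect } c = c' + \sum_{j=1}^{3} a_j b_j
\end{align}
```

In this form, $c'$ is the direct effect of exposure on trust, and each product $a_j b_j$ is the part of the effect carried by one mediator. A realism path and an authenticity path with opposite signs would express the paradox noted above.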

From a practical standpoint, the study identifies the need for stronger verification measures and ethical values in journalism. To remain reputable, media houses must employ advanced detection mechanisms, adopt open disclosure policies, and reinforce editorial policies. At the same time, technology developers, policymakers, and journalists must collaborate to create a robust ecosystem that can curb the misuse of deepfake technology. The paper also describes a vulnerability of current detection methods: they are generally unable to keep pace with the rapid advancement of deepfake generation systems. This contest between creation and detection makes continuous innovation and interdisciplinary research essential. Another challenge is the lack of standardized regulatory systems across jurisdictions: because digital media is international in character, it is difficult to create and apply regulations and to assign responsibility. As for future directions, several lines of research and development can be identified. First, more empirical research is needed on audience behavior and, in particular, on how different demographic groups perceive and react to deepfake content. Future work could also investigate multimodal detection strategies that combine visual and audio analysis with contextual information, which would make detection more effective and robust. Media houses and educational institutions should cooperate to develop programs that raise awareness of deepfakes and discourage irresponsible media consumption. Another important direction is the implementation of blockchain-based content authentication systems and digital watermarking methods to ensure the traceability and integrity of visual media.
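To make the idea of traceable, tamper-evident content authentication more concrete, the following is a minimal sketch of a hash-chained provenance ledger. It is not a system proposed or tested in this study. It assumes a simplified in-memory list in place of a real blockchain, and all function names, field names, and the source label are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed placeholder hash for the first record


def fingerprint(media_bytes: bytes) -> str:
    """Return the SHA-256 hex digest of the raw media bytes."""
    return hashlib.sha256(media_bytes).hexdigest()


def _hash_body(body: dict) -> str:
    """Hash a record body using a stable JSON serialization."""
    serialized = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(serialized).hexdigest()


def append_record(ledger: list, media_bytes: bytes, source: str) -> dict:
    """Append a provenance record chained to the previous record's hash."""
    prev_hash = ledger[-1]["record_hash"] if ledger else GENESIS_HASH
    body = {
        "media_hash": fingerprint(media_bytes),
        "source": source,
        "prev_hash": prev_hash,
    }
    record = {**body, "record_hash": _hash_body(body)}
    ledger.append(record)
    return record


def ledger_is_intact(ledger: list) -> bool:
    """Recompute every hash and check that each record links to the one before it."""
    prev_hash = GENESIS_HASH
    for record in ledger:
        body = {key: record[key] for key in ("media_hash", "source", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["record_hash"] != _hash_body(body):
            return False
        prev_hash = record["record_hash"]
    return True


def media_is_registered(ledger: list, media_bytes: bytes) -> bool:
    """Check whether these exact bytes were registered in the ledger."""
    media_hash = fingerprint(media_bytes)
    return any(record["media_hash"] == media_hash for record in ledger)
```

In this sketch, a newsroom would call `append_record` when an image is published and `media_is_registered` when a circulating copy needs checking. Because the fingerprint covers the exact bytes, any edit to the file, even a benign re-encoding, produces a different hash. This is one reason such schemes are usually discussed alongside watermarking rather than as a replacement for it.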

REFERENCES

Cenamor, J., Parida, V., and Wincent, J. (2019). How Entrepreneurial SMEs Compete Through Digital Platforms: The Roles of Digital Platform Capability, Network Capability and Ambidexterity. Journal of Business Research, 100, 196–206. https://doi.org/10.1016/j.jbusres.2019.03.035

Chen, Y., Li, J., and Zhang, J. (2024). Digitalisation, Data-Driven Dynamic Capabilities and Responsible Innovation: An Empirical Study of SMEs in China. Asia Pacific Journal of Management, 41(3), 1211–1251. https://doi.org/10.1007/s10490-022-09845-6

Falahat, M., Ramayah, T., Soto-Acosta, P., and Lee, Y.-Y. (2020). SMEs Internationalization: The Role of Product Innovation, Market Intelligence, Pricing and Marketing Communication Capabilities as Drivers of SMEs’ International Performance. Technological Forecasting and Social Change, 152, 119908. https://doi.org/10.1016/j.techfore.2020.119908

Frishammar, J., Cenamor, J., Cavalli-Björkman, H., Hernell, E., and Carlsson, J. (2018). Digital Strategies for Two-Sided Markets: A Case Study of Shopping Malls. Decision Support Systems, 108, 34–44. https://doi.org/10.1016/j.dss.2018.02.003

Grant, C. (2020). Roles of Culture and Cultural Sustainability in Eliminating Poverty. In Springer (1–11). https://doi.org/10.1007/978-3-319-69625-6_127-1

Helfat, C. E., and Raubitschek, R. S. (2018). Dynamic and Integrative Capabilities for Profiting from Innovation in Digital Platform-Based Ecosystems. Research Policy, 47(8), 1391–1399. https://doi.org/10.1016/j.respol.2018.01.019

Kiyabo, K., and Isaga, N. (2020). Entrepreneurial Orientation, Competitive Advantage, and SMEs’ Performance: Application of Firm Growth and Personal Wealth Measures. Journal of Innovation and Entrepreneurship, 9(1), 12. https://doi.org/10.1186/s13731-020-00123-7

Liao, Z., Chen, J., Chen, X., and Song, M. (2024). Digital Platform Capability, Environmental Innovation Quality, and Firms’ Competitive Advantage: The mOderating Role of Environmental Uncertainty. International Journal of Production Economics, 268, 109124. https://doi.org/10.1016/j.ijpe.2023.109124

Mukherjee, S., and Mukherjee, A. (2022). Indian SMEs in Global Value Chains: Status, Issues and Way Forward. Foreign Trade Review, 57(4), 473–496. https://doi.org/10.1177/00157325221092609

Nayak, B., Bhattacharyya, S. S., and Krishnamoorthy, B. (2022). Exploring the Black Box of Competitive Advantage—An Integrated Bibliometric and Chronological Literature Review Approach. Journal of Business Research, 139, 964–982. https://doi.org/10.1016/j.jbusres.2021.10.047

Patil, R. V., Kudande, V., Jagtap, S., Jadhav, S., and Jawalgekar, A. (2025). Self Healing Infrastructure System. International Journal of Electrical Engineering and Computer Science, 14(1), 10–14. https://doi.org/10.65521/ijeecs.v14i1.190

Sarwar, Z., Gao, J., and Khan, A. (2024). Nexus of Digital Platforms, Innovation Capability, and Strategic Alignment to Enhance Innovation Performance in the Asia Pacific Region: A dynAmic Capability Perspective. Asia Pacific Journal of Management, 41(2), 867–901. https://doi.org/10.1007/s10490-023-09879-4

Singh, P., and Kaur, C. (2021). Factors Determining Financial Constraint of SMEs: A Study of Unorganized Manufacturing Enterprises in India. Journal of Small Business and Entrepreneurship, 33(3), 269–287. https://doi.org/10.1080/08276331.2019.1641662

Sousa-Zomer, T. T., Neely, A., and Martinez, V. (2020). Digital Transforming Capability and Performance: A Microfoundational Perspective. International Journal of Operations and Production Management, 40(7–8), 1095–1128. https://doi.org/10.1108/IJOPM-06-2019-0444

Tiberius, V., Stiller, L., and Dabić, M. (2021). Sustainability Beyond Economic Prosperity: Social Microfoundations of Dynamic Capabilities in Family Businesses. Technological Forecasting and Social Change, 173, 121093. https://doi.org/10.1016/j.techfore.2021.121093


This work is licensed under a: Creative Commons Attribution 4.0 International License

© ShodhKosh 2026. All Rights Reserved.