ShodhKosh: Journal of Visual and Performing Arts ISSN (Online): 2582-7472


Artificial Intelligence as a Creative Collaborator in Contemporary Visual and Performing Arts

Pushpalatha P 1, Pushpa Nagini Sripada 2, Vinitha M 3, Muninathan N 4, Subbulakshmi Packirisamy 5, Yu Yang 6

1 Department of Computer Science, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, India

2 Professor, Department of English, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, India

3 Department of Computer Science, Meenakshi College of Arts and Science, Meenakshi Academy of Higher Education and Research, India

4 Central Research Laboratory, Meenakshi Medical College Hospital & Research Institute, Meenakshi Academy of Higher Education and Research, India

5 Assistant Professor, Department of Pharmacology, Meenakshi Ammal Dental College and Hospital, Meenakshi Academy of Higher Education and Research, India

6 Faculty of Management, Shinawatra University, Thailand; Research Fellow, INTI International University, Malaysia

ABSTRACT

The use of artificial intelligence in contemporary art is growing rapidly: machines can now produce visual material and take part in creative processes. This paper examines how artificial intelligence is used as a creative partner in contemporary visual arts, specifically how generative models can support artists in concept design and in exploring the artistic process. To encourage human-AI co-creation, a collaborative framework is proposed that combines data preparation, generative AI models, interactive user interfaces, and rendering modules. The framework was evaluated experimentally on a dataset of 10,000 digital artworks representing a variety of artistic styles. A diffusion-based generative model was used to generate visual compositions in response to artist prompts. The findings show that AI-assisted creative processes substantially reduce artwork production time and broaden stylistic and conceptual range. The comparative analysis shows that visual quality ratings and artist satisfaction improve when AI tools are introduced into the creative process. The results also demonstrate the potential of artificial intelligence as a creative companion that enhances human creativity rather than displacing the artist. The proposed framework contributes to the evolving body of work on computational creativity by showing how intelligent systems can supplement artistic processes and support the interactive creation of visual art. The research offers insight into how generative AI can be integrated into artistic practice and outlines future research prospects for immersive and interactive AI-based creative systems.

Received 28 December 2025; Accepted 30 March 2026; Published 03 April 2026

Corresponding Author: Pushpalatha P, pushpalathap@maher.ac.in

DOI: 10.29121/shodhkosh.v7.i3s.2026.7310

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Copyright: © 2026 The Author(s). This work is licensed under a Creative Commons Attribution 4.0 International License. With the license CC-BY, authors retain the copyright, allowing anyone to download, reuse, re-print, modify, distribute, and/or copy their contribution. The work must be properly attributed to its author.

Keywords: Artificial Intelligence, Computational Creativity, Generative Art, Human–AI Collaboration, Diffusion Models, Digital Visual Arts, Creative AI Systems, Interactive Art, Generative Design

1. INTRODUCTION

Contemporary artistic practice is increasingly shaped by the development of artificial intelligence systems, which are changing how the visual and performing arts are conceived, created, and experienced. Computational models that produce images, music, choreography, and multimedia performances have moved digital technologies from passive tools to active participants in creative work Ali et al. (2021). Recent AI architectures, including deep neural networks, generative adversarial networks, and diffusion-based models, have shown the capability to analyze large collections of art and generate new aesthetic outputs that resemble the products of human creativity. This has opened new opportunities for artists, designers, and performers who want to experiment with hybrid forms of creativity in which human imagination and machine intelligence interact in dynamic, iterative processes Ali et al. (2021).

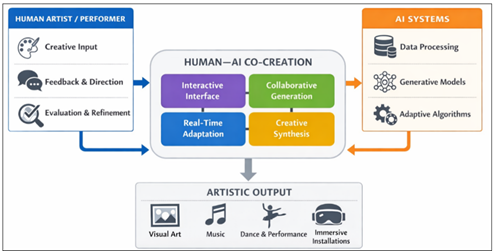

Figure 1 Interactive Human–AI Co-Creation Framework

In the visual arts, AI-based generative systems can create paintings, digital illustrations, and immersive visual installations by learning the stylistic features of historical and contemporary art collections. More artists are incorporating such systems into their creative processes to explore style synthesis, visual abstraction, and algorithmic aesthetics Ali et al. (2020). Parallel changes are taking place in the performing arts. Machine learning models can be trained on motion capture data, musical compositions, or theatrical performance data to generate choreography, compose music, or assist in the design and control of stage lighting. Such capabilities allow performers to engage with intelligent systems that adapt in real time, opening a new frontier of collaborative creativity between machines and humans. These developments also raise questions about authorship, artistic control, the interpretability of algorithmic outputs, and the ethics of machine-generated art Ambrosio (2019). Moreover, current AI creative systems often lack a structured design that would support natural communication between artists and intelligent systems throughout the creative process. Research on AI as a partner in creative activity therefore needs an interdisciplinary approach that combines ideas from computational creativity, human-computer interaction, and artistic theory. This research focuses on the emerging role of artificial intelligence as a creative partner in contemporary visual and performing arts Anadol (2022). Emphasis is placed on understanding the collaborative structures, technological designs, and implementation processes that can facilitate meaningful human-AI co-creation.
The knowledge gained through this exploration helps explain how intelligent systems are transforming artistic expression, creative processes, and the wider culture of digital art.

2. Background and Related Work

Technological innovation has long shaped artistic expression, from the advent of photography and computer graphics to modern algorithmic art systems. Artificial intelligence has extended these possibilities further, since machines can now study artistic patterns and produce new creative work Anadol and Kivrak (2023). Early attempts at computational creativity used rule-based systems that followed explicit aesthetic rules to create music, poetry, or visual designs. With the rapid growth of machine learning and deep learning, however, the ability of computational tools to reproduce complex artistic designs and generate high-quality creative output has increased considerably Avlonitou (2018).

Recent studies of AI-assisted art creation concentrate on generative models: Generative Adversarial Networks (GANs), diffusion models, and transformer-based models. GANs have proven highly effective at producing realistic paintings, portraits, and digital illustrations by learning the style distributions of large art collections Bellaiche (2023), Benjamin (2008). Diffusion models improve image quality by iteratively refining the generated image, which has led to their use in digital art, animation, and creative design. At the same time, transformer-based and recurrent neural networks have been used to create music, design choreography, and drive interactive stage performances Bhullar (2024). These models can produce structured sequences that resemble human musical compositions or dance movements.

Gap Analysis

| Approach | Strength | Limitation | Research Gap |
|---|---|---|---|
| GAN-based Art Generation Black (2018) | Produces highly realistic images and paintings | Limited interpretability and artistic control | Need for interactive generation frameworks |
| Diffusion-Based Image Models Blanco (2024), Colton and Wiggins (2012) | High-quality visual synthesis | Computationally intensive and less interactive | Real-time collaborative creativity required |
| AI Music and Dance Generation Boden (2004) | Capable of generating structured sequences | Often operates autonomously without human feedback | Integration of performer–AI interaction systems |
| Rule-Based Computational Creativity Bowen and Giannini (2019), Phurisikul and Phuakkong (2025) | Predictable and interpretable outputs | Limited creativity and stylistic diversity | Hybrid human–AI creativity frameworks needed |

3. Framework of AI–Human Creative Collaboration

Human-AI creative collaboration is a new paradigm in which computational systems take part in the artistic process alongside human artists. In contrast to traditional digital tools, which only help execute an artist's ideas, modern AI systems can produce novel artistic works, interpret stylistic patterns, and adapt to human input. These capabilities make it possible to create a co-creative space where artistic concepts develop through interaction between human intuition and machine generative intelligence. In this collaborative setting, artificial intelligence serves as a dynamic co-worker that enhances human creativity rather than replacing it. Jadhav (2027)

Figure 2 AI-Augmented Artistic Co-Creation Workflow

The theoretical basis of creative cooperation between humans and AI can be described through several co-creation models, each of which captures a different level of interaction between artists and intelligent systems.

The first is commonly known as AI-assisted creativity, in which AI acts as a supporting system for specific elements of the artistic process. In this setup, the human artist retains complete conceptual control, and AI systems serve as image generators, style-transfer tools, sound generators, or automated editors. The creative idea is supplied by the human creator, and AI mainly enhances the production process.

The second model is AI-enhanced creativity, in which human creators and AI systems jointly shape a creative product. Here artists offer conceptual guidance, aesthetic constraints, or training inputs, while AI models offer variations or unexpected design possibilities. Through iterative feedback loops, the artist reviews the machine's outputs and refines parameters, giving rise to an evolving co-creative process that combines human judgment and algorithmic experimentation.

The most advanced model is symbiotic human-AI co-creation, in which human and machine interact in real time during artistic creation. In such systems, the AI continuously adapts to the actions of the performers, the conditions of the environment, or the reactions of the audience. Examples are AI-based choreography systems that react to dancers' movements and computer-generated music systems that dynamically modify music during live performances. This partnership model makes AI an active participant in the creative ecosystem. Suri et al. (2025)

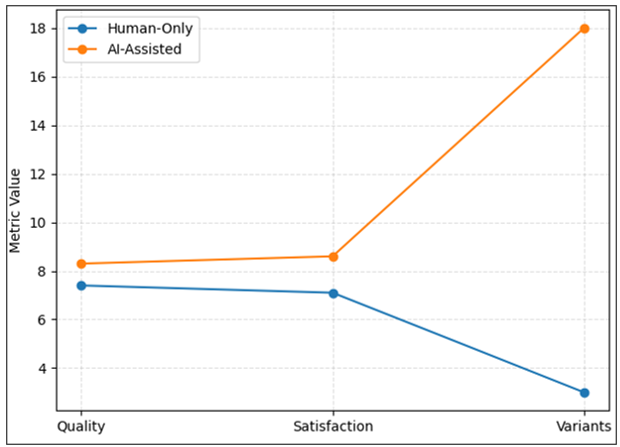

4. AI Techniques Used in Contemporary Art Creation

Artificial intelligence techniques have quickly changed artistic practice by enabling machines to learn aesthetic patterns and create new forms of visual and performing art. Contemporary creative AI applications are built on deep learning architectures that can process complex data sets including images, audio signals, motion sequences, and performance recordings. These techniques enable computational systems to create works with stylistic consistency, structural composition, and expressiveness comparable to those of works created by human artists. The Generative Adversarial Network (GAN) is one of the most influential approaches in creative AI systems. A GAN consists of two neural networks that compete with each other: a generator, which creates synthetic data, and a discriminator, which judges how closely the generated content resembles real artistic samples. Through this competition, the generator progressively learns to create high-quality visual output that looks like an authentic work of art. GAN-based models have been widely applied to produce paintings, portraits, digital images, and stylized visuals. In contemporary visual art, GANs allow artists to experiment with style synthesis, abstract visual transformation, and algorithmically produced aesthetics. Vasanthan et al. (2019)
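The competition between generator and discriminator can be made concrete with a small numerical sketch. The code below is an illustration only and is not taken from the paper: it assumes the standard binary cross-entropy formulation with a non-saturating generator loss, and the discriminator probabilities are hypothetical values chosen to show how the two losses move as the generator improves.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy loss for the discriminator.

    d_real: discriminator probabilities for real artworks (ideally near 1).
    d_fake: discriminator probabilities for generated images (ideally near 0).
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator is fooled."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs, for illustration only.
real = [0.90, 0.95, 0.92]
early_fake = [0.05, 0.10, 0.08]   # early training: fakes are easily spotted
late_fake = [0.45, 0.50, 0.55]    # late training: fakes are hard to tell apart

print("generator loss, early:", round(generator_loss(early_fake), 3))
print("generator loss, late: ", round(generator_loss(late_fake), 3))
print("discriminator loss, early:", round(discriminator_loss(real, early_fake), 3))
print("discriminator loss, late: ", round(discriminator_loss(real, late_fake), 3))
```

As the generated images become harder to distinguish from real ones, the generator loss falls while the discriminator loss rises, which is the adversarial dynamic the paragraph describes.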

Figure 3 AI Techniques Used in Contemporary Art Creation

Another notable trend is the rise of diffusion-based generative models, which have achieved impressive results in generating high-resolution pictures and intricate artistic designs. Diffusion models create images through progressive refinement, in which random noise is gradually transformed into a structured image. Compared with earlier generative methods, they render intricate textures, lighting conditions, and stylistic effects more stably. Diffusion-based systems are increasingly used for computer-generated illustration, cinematic concept design, animation production, and interactive visual installations.

Transformer-based architectures are used in sequence-oriented creative tasks such as music composition, choreography generation, and storytelling. Transformers use attention mechanisms that let a model capture long-range dependencies in sequential data. In music generation, transformer models learn rhythmic patterns, harmonic structures, and melodic transitions from large musical corpora. Similarly, motion-based transformers can produce dance movements or performance sequences by learning the temporal features of motion capture data.

Reinforcement learning also plays an important role in adaptive artistic environments where systems interact dynamically with human performers or audiences. Reinforcement learning agents learn creative strategies from the feedback they receive from their environment, and so adapt artistic outputs to user feedback or the performance context. Such adaptive systems are especially useful in interactive installations, generative music performances, and responsive stage environments.
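The noise-to-image idea behind diffusion models is easiest to see from the forward direction, in which a clean image is progressively corrupted; generation then runs this process in reverse using a trained denoising network. The sketch below shows only the forward noising step on a toy four-pixel "image". The linear schedule, the 1,000 steps, and the beta range are common defaults from the diffusion literature and are assumptions here, since the paper does not report its noise schedule.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, increasing linearly (assumed schedule)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for all steps s up to t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(pixels, alpha_bar, rng):
    """Forward step: x_t = sqrt(alpha_bar) * x_0 + sqrt(1 - alpha_bar) * eps."""
    signal = math.sqrt(alpha_bar)
    noise = math.sqrt(1.0 - alpha_bar)
    return [signal * x + noise * rng.gauss(0.0, 1.0) for x in pixels]

betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)

rng = random.Random(0)
image = [0.5, -0.2, 0.9, 0.1]          # toy image, pixel values in [-1, 1]
slightly_noisy = add_noise(image, alpha_bars[10], rng)
almost_noise = add_noise(image, alpha_bars[-1], rng)

print("signal kept at step 10:  ", round(math.sqrt(alpha_bars[10]), 4))
print("signal kept at last step:", round(math.sqrt(alpha_bars[-1]), 4))
```

By the final step almost none of the original signal remains, so sampling can start from pure Gaussian noise and let the denoising network recover structure step by step.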

5. System Architecture for AI-Assisted Creative Collaboration

AI-assisted creative systems need a well-organized structure that brings together human-AI interaction, data processing, machine learning models, and artistic rendering modules. A layered architecture ensures that artistic input, computational intelligence, and output generation operate in a coordinated environment in which artists and intelligent systems can collaborate on creative work. The data layer is the backbone of the architecture and is responsible for gathering and structuring multimodal artistic data. This layer combines inputs such as images, audio recordings, motion capture recordings, and textual prompts given by artists. Data preprocessing modules perform normalization, feature extraction, and metadata tagging to prepare the datasets for machine learning. Rawandale and Kolte (2021)

In interactive artistic settings, the data layer can also include live feeds from sensors, microphones, cameras, or motion capture devices. The AI model layer, where computational creativity is implemented with deep learning frameworks, sits above the data layer. It relies on generative models, including GANs, diffusion networks, and transformer-based architectures, that learn artistic patterns from training data. Model orchestration components handle training, inference, and optimization. In some systems, reinforcement learning modules add adaptability in responding to performers' actions or audience interactions. The interface layer connects human creators and the AI. Artists work with the system through graphical interfaces, digital design tools, or interactive performance platforms. This layer lets artists supply prompts, adjust parameters, judge generated outputs, and steer the creative process through repeated feedback. Real-time interaction is especially important in performing arts applications, where AI systems must react to human behavior as it happens. Lastly, the output layer produces the artistic artifacts created during the collaborative workflow. Visualization tools and rendering engines convert AI-generated data into interpretable artistic forms that can be exhibited, performed, or distributed online. Karwande et al. (2024)
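The four layers and the feedback loop between artist and model can be sketched as a small pipeline. Everything in the code below is hypothetical: the function names, the `Candidate` record, and the stand-in "model" that returns random seeds instead of images are illustrative assumptions, not the authors' implementation. The point is only to show how data preparation, generation, artist selection, and rendering hand off to one another.

```python
import random
from dataclasses import dataclass

@dataclass
class Candidate:
    prompt: str
    seed: int
    score: float = 0.0   # filled in by the artist's rating

def data_layer(raw_prompt):
    """Data layer: normalise the artist's textual input."""
    return " ".join(raw_prompt.lower().split())

def model_layer(prompt, num_candidates, rng):
    """AI model layer: stand-in for a generative model (returns seeds, not images)."""
    return [Candidate(prompt, rng.randrange(10**6)) for _ in range(num_candidates)]

def interface_layer(candidates, rate):
    """Interface layer: the artist rates each candidate and keeps the favourite."""
    for candidate in candidates:
        candidate.score = rate(candidate)
    return max(candidates, key=lambda c: c.score)

def output_layer(candidate):
    """Output layer: stand-in for rendering the selected artwork."""
    return f"render(prompt={candidate.prompt!r}, seed={candidate.seed})"

def co_create(raw_prompt, refinements, rate, num_candidates=4, seed=0):
    """Generate, let the artist choose, then repeat once per prompt refinement."""
    rng = random.Random(seed)
    prompt = data_layer(raw_prompt)
    best = interface_layer(model_layer(prompt, num_candidates, rng), rate)
    for extra in refinements:
        prompt = data_layer(f"{prompt}, {extra}")
        best = interface_layer(model_layer(prompt, num_candidates, rng), rate)
    return output_layer(best)

# The rating function stands in for a human judgement.
result = co_create("  Surreal   Coastline ", ["warmer palette"],
                   rate=lambda c: c.seed % 10)
print(result)
```

Keeping the rating step as a plain callable mirrors the architecture's intent that the human stays in the loop: the model proposes, and the selection criterion is supplied from outside.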

6. Experimental Demonstration / Case Study

AI-assisted creative collaboration was validated experimentally through a case study showing how generative artificial intelligence can be incorporated into a visual art production process. The objective of the experiment was to investigate how AI systems can help artists create new visual compositions while artistic decision-making remains under human control. The experiment consisted of dataset preparation, AI model training, interactive generation, and qualitative analysis of the generated artworks. The data was a curated set of about 10,000 digital works drawn from online art repositories and open creative sources. The dataset covered paintings, digital illustrations, abstract art, and concept art representing a range of visual styles, including impressionism, surrealism, and contemporary digital art. Before training, the images were standardized to a uniform input size and consistent perceptual quality. Preprocessing included image resizing, normalization, and feature extraction. Table 1 summarizes the composition of the dataset and the preprocessing applied to prepare it for training.

Table 1 Experimental Dataset Characteristics

| Dataset Category | Number of Images | Resolution | Source Type | Preprocessing Applied |
|---|---|---|---|---|
| Classical Paintings | 3,500 | 512×512 | Open Art Datasets | Normalization, Color Balancing |
| Digital Illustrations | 4,200 | 512×512 | Online Art Repositories | Resizing, Feature Extraction |
| Abstract Art | 1,800 | 512×512 | Creative Commons Collections | Noise Reduction |
| Concept Art | 500 | 512×512 | Digital Artist Archives | Style Tagging |
| Total Dataset | 10,000 | 512×512 | Mixed Sources | Standardized Pipeline |
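Two of the preprocessing steps in Table 1, resizing to a fixed resolution and normalization, can be illustrated with a minimal sketch. The paper does not say which resampling method or value range was used, so nearest-neighbour resizing and scaling to [-1, 1] (a range often used for diffusion model inputs) are assumptions here, and the function names are hypothetical. A tiny grayscale grid stands in for a real image.

```python
def resize_nearest(image, out_h, out_w):
    """Nearest-neighbour resize of a 2D grid of pixel values (assumed method)."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]

def normalize(image):
    """Map 8-bit intensities in [0, 255] to floats in [-1.0, 1.0]."""
    return [[p / 127.5 - 1.0 for p in row] for row in image]

def preprocess(image, size=512):
    """Resize to size x size, then normalise; 512 matches Table 1."""
    return normalize(resize_nearest(image, size, size))

tiny = [[0, 255],
        [128, 64]]
out = preprocess(tiny, size=4)   # small target size keeps the demo readable
for row in out:
    print([round(v, 2) for v in row])
```

In practice an image library would handle resampling with better interpolation; the sketch only shows the order of operations and the effect on value ranges.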

Following dataset preparation, a diffusion-based generative model was trained to produce high-resolution artistic images. Diffusion models were chosen because they are stable to train and can generate rich visual images with intricate textures and color gradients. The model was implemented in a Python-based deep learning framework and trained on a cloud-based computing platform. The main training parameters are shown in Table 2, together with details of the architecture setup and the computing environment.

Table 2 AI Model Training Configuration

| Parameter | Value | Description | Implementation Tool |
|---|---|---|---|
| Model Type | Diffusion Model | Image generation architecture | PyTorch |
| Training Epochs | 120 | Total training iterations | Python |
| Batch Size | 32 | Images per training cycle | CUDA GPU |
| Learning Rate | 0.0002 | Optimization step size | Adam Optimizer |
| Training Time | 14 Hours | Total model training duration | Cloud GPU (NVIDIA A100) |
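The settings in Table 2 can be gathered into a single configuration object, which also makes it easy to derive quantities the table leaves implicit. The sketch below is illustrative: the class and field names are hypothetical, and the derived numbers assume that each epoch is one full pass over all 10,000 images from Table 1 (no held-out split) and that the final, smaller batch is kept rather than dropped.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class TrainingConfig:
    model_type: str = "diffusion"
    epochs: int = 120
    batch_size: int = 32
    learning_rate: float = 2e-4      # 0.0002 in Table 2
    optimizer: str = "adam"
    dataset_size: int = 10_000       # from Table 1; assumes no held-out split
    training_hours: float = 14.0

    @property
    def steps_per_epoch(self):
        # Assumes the last, partially filled batch is kept.
        return math.ceil(self.dataset_size / self.batch_size)

    @property
    def total_steps(self):
        return self.steps_per_epoch * self.epochs

    @property
    def seconds_per_step(self):
        return self.training_hours * 3600 / self.total_steps

config = TrainingConfig()
print(config.steps_per_epoch)              # 313
print(config.total_steps)                  # 37560
print(round(config.seconds_per_step, 2))   # 1.34
```

Under these assumptions the reported 14 hours corresponds to roughly 1.3 seconds per optimization step, a rough consistency check rather than a measured figure.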

Artists defined visual themes, style choices, or composition constraints, and the AI model produced several candidate artworks. The artist then evaluated these outputs and selected preferred variations for further refinement. Through successive adjustments to the prompts, this process enabled artists to experiment with a wide variety of visual ideas while retaining control over the final artistic product. To assess the efficiency of AI-assisted creativity, the traditional human-only artwork creation process was compared with the AI-assisted artistic workflow. The evaluation measures included artwork creation time, style variation, and perceived visual quality. A panel of digital artists and visual designers took part in the assessment and scored the generated artworks against a set of standardized criteria. The findings of this comparative assessment are presented in Table 3.

Table 3 AI-Assisted vs Human-Only Artwork Generation Performance

| Evaluation Metric | Human-Only Creation | AI-Assisted Creation | Improvement (%) |
|---|---|---|---|
| Average Artwork Creation Time | 6.5 hours | 1.8 hours | 72% Faster |
| Style Variation Generated | 3 variants | 18 variants | 500% Increase |
| Artist Satisfaction Score (1–10) | 7.1 | 8.6 | 21% |
| Visual Quality Rating (1–10) | 7.4 | 8.3 | 12% |
| Concept Exploration Speed | Medium | Very High | N/A |
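The Improvement column in Table 3 follows from the two measured columns as a relative change, (AI-assisted − human-only) / human-only. The short check below reproduces the reported percentages from the table's own values; the function and dictionary key names are illustrative.

```python
def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0

# (human-only, AI-assisted) pairs taken from Table 3.
metrics = {
    "creation_time_hours": (6.5, 1.8),
    "style_variants": (3, 18),
    "artist_satisfaction": (7.1, 8.6),
    "visual_quality": (7.4, 8.3),
}

for name, (human_only, ai_assisted) in metrics.items():
    print(f"{name}: {percent_change(human_only, ai_assisted):+.1f}%")

# creation_time_hours: -72.3%
# style_variants: +500.0%
# artist_satisfaction: +21.1%
# visual_quality: +12.2%
```

The negative sign for creation time reflects a reduction, which the table reports as "72% Faster"; the other three values round to the 500%, 21%, and 12% shown.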

The findings indicate that AI-enhanced creative systems make the exploration stage of visual art creation much faster and increase stylistic variety and experimentation. The collaborative workflow enabled artists to quickly produce a number of visual options and develop their concepts more effectively than with manual processes. These findings show how artificial intelligence can become a creative partner that improves creative output without displacing the human author or the author's creative intent.

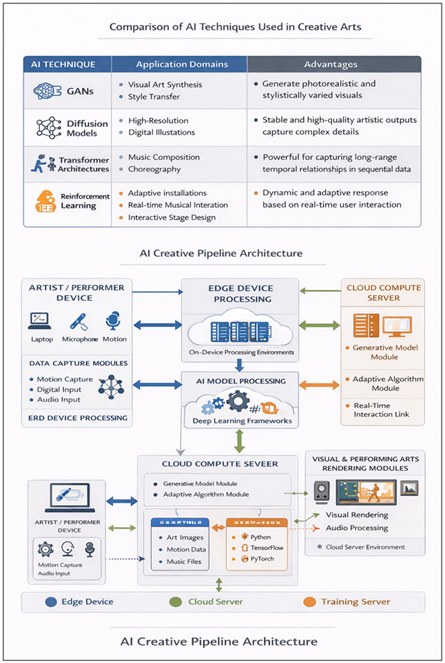

7. Results and Performance Analysis

The AI-assisted creative workflow was assessed using the numerical data in Table 3, which compares conventional, human-only artistic production with AI-assisted production. The analysis examines three main areas of creative performance: production efficiency, diversity of artistic exploration, and perceived artistic quality. Together these metrics indicate how effectively artificial intelligence can serve as a partner in creative work. The AI-assisted workflow substantially reduced the time spent creating an artwork: the average creation time fell from 6.5 hours to 1.8 hours, a reduction of about 72 percent. This improvement indicates that generative AI systems are much faster in the initial phases of artistic creation, especially in concept exploration and composition prototyping. In conventional creative processes, artists spend a lot of time trying out sketches, color combinations, and structural layouts before settling on an idea. AI systems can quickly create many candidate works, allowing artists to review and edit ideas far more efficiently. The other significant change is in the style variation metric. In the human-only mode, an average of three conceptual variations was generated, whereas the AI-assisted system generated about eighteen visual variants from a single prompt. This increase of about 500 percent in conceptual diversity points to the ability of generative models to enlarge the creative search space. Rather than manually producing a number of drafts, artists can use algorithmic generation of a wide range of stylistic options to find a promising artistic direction more rapidly.
The outcomes also show increases in artist satisfaction and perceived visual quality. The average satisfaction score for AI-assisted output was 8.6 out of 10, compared with 7.1 for traditional methods. Likewise, the visual quality rating rose from 7.4 to 8.3, which suggests that the artists found AI-generated works aesthetically worthwhile and creatively helpful. These improvements imply that AI tools make the process not only more efficient but also more creative, since they open up new stylistic opportunities.

Table 4 Performance Metrics Comparison (Human vs AI-Assisted Workflow)

| Performance Metric | Human-Only Workflow | AI-Assisted Workflow | Improvement |
|---|---|---|---|
| Average Creation Time | 6.5 hours | 1.8 hours | 72% Faster |
| Style Variants Generated | 3 | 18 | 500% |
| Artist Satisfaction Score | 7.1 | 8.6 | 21% |
| Visual Quality Rating | 7.4 | 8.3 | 12% |

The comparative analysis in Table 4 confirms that AI-assisted creative pipelines contribute substantially to both efficiency and creative diversity. Because creation time is reduced, artists can devote more time to conceptual development and less to manual experimentation. At the same time, the number of stylistic variants increases, which enlarges the range of compositional possibilities that can be explored during design. Patil (2025)

Table 5 Artist Evaluation Results (User Study)

| Evaluation Criterion | Mean Score (1–10) | Standard Deviation | Interpretation |
|---|---|---|---|
| Visual Aesthetic Quality | 8.3 | 0.7 | High quality outputs |
| Creativity and Novelty | 8.5 | 0.6 | Strong originality |
| Ease of Collaboration with AI | 8.1 | 0.8 | Effective interaction |
| Overall Satisfaction | 8.6 | 0.5 | Positive user experience |

The user assessment results in Table 5 also suggest that the artists saw the AI system as a useful partner rather than a substitute for human creativity. The high scores for creativity and novelty indicate that generative models bring new visual concepts to artistic experimentation. The high score for ease of collaboration also shows that the interactive interface effectively supports iterative creative workflows.

8. Analysis and Interpretation

The experimental findings support the view that AI-based creative systems can significantly increase the efficiency and diversity of artistic production without diminishing the fundamental role of human creativity. The marked reduction in artwork creation time indicates that the conceptual development stage of visual art can be greatly accelerated with generative AI models. The system allows the artist to experiment quickly with a variety of stylistic options, so that the initial stages of sketching and preliminary design require less effort than with manual methods.

Figure 4 Comparison of Artwork Creation Time Between Human-Only and AI-Assisted Workflows

The next key finding is the growth in stylistic variety obtained through AI-based workflows. Conventional artistic methods tend to yield few conceptual options because the artist has to draw each design option by hand. Generative models such as diffusion networks, in contrast, can generate many stylistic variations from a single prompt. This capability enlarges the creative exploration space and lets artists consider more visual possibilities before choosing the most promising artistic direction.

Figure 5 Performance Comparison Across Key Creative Metrics

The evaluation results also show improvements in perceived artwork quality and artist satisfaction. The higher quality scores for AI-assisted output suggest that generative models can capture complex visual attributes such as texture, color balance, and spatial balance. Such abilities offer artists a source of inspiration and creative enhancement rather than a threat to human authorship. Through prompt adjustment and feedback, artists stay in control of the creative process while using computational intelligence to produce alternative visual ideas. The interaction model presented here shows that AI technologies can act as collaborative partners that extend the creative potential of artists and enable new modes of digital artistic experimentation.

9. Conclusion

The introduction of artificial intelligence into contemporary visual art marks a paradigm shift in how visual art is conceived and created. This study explored the role of AI as a creative partner that can assist artists through generative modeling, interactive creative processes, and adaptive creative exploration. The proposed framework integrates data preparation, deep-learning-based generative models, human-AI interaction, and rendering into a collaborative creative pipeline. Empirical testing with a curated collection of digital artworks showed that AI-assisted creative processes lead to a significant increase in productivity and idea exploration. The findings show that generative AI systems can shorten artwork creation time, increase stylistic diversity, and improve artist satisfaction without reducing human artistic control. Through prompt-based interaction and iterative refinement, artists remain at the center of the creative process while taking advantage of the computational power of modern AI systems. The results point to the potential of AI technologies to broaden the scope of digital creativity and enable new forms of interaction in art. As generative models develop further, human-AI collaboration is likely to play a more significant role in the future of the visual and performing arts. Future work could incorporate multimodal generative models, immersive virtual environments, and real-time collaborative interfaces that further enhance the synergy between human creativity and artificial intelligence.

CONFLICT OF INTERESTS

None.

ACKNOWLEDGMENTS

None.

REFERENCES

Ali, S., DiPaola, D., Lee, I., Hong, J., and Breazeal, C. (2021). Exploring Generative Models with Middle School Students. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems (pp. 1–13). https://doi.org/10.1145/3411764.3445226

Ali, S., DiPaola, D., Lee, I., Sindato, V., Kim, G., Blumofe, R., and Breazeal, C. (2021). Children as Creators, Thinkers and Citizens in an AI-Driven Future. Computers and Education: Artificial Intelligence, 2, Article 100040. https://doi.org/10.1016/j.caeai.2021.100040

Ali, S., Park, H. W., and Breazeal, C. (2020). Can cHildren Emulate a Robotic Non-Player Character’s Figural Creativity? In Proceedings of the Annual Symposium on Computer-Human Interaction in Play (CHI PLAY ’20) ( 499–509). https://doi.org/10.1145/3410404.3414251

Ambrosio, C. (2019). Unsettling Robots and the Future of Art. Science, 365(6448), 38–39. https://doi.org/10.1126/science.aay1956

Anadol, R. (2022). Space in the Mind of a Machine: Immersive Narratives. Architectural Design, 92(3), 28–37. https://doi.org/10.1002/ad.2810

Anadol, R., and Kivrak, P. (2023). Machines That Dream: How AI-Human Collaborations in Art Deepen Audience Engagement. Management and Business Review, 3, 101–107. https://doi.org/10.1177/2694105820230301018

Avlonitou, Z. (2018). Collector and Collection, an Indivisible Unity: The Case of the Collector and Collection of Giorgos Kostakis (Doctoral Dissertation, University of Ioannina).

Bellaiche, L., et al. (2023). Humans Versus AI: Whether and Why we Prefer Human-Created Compared to AI-Created Artwork. Cognitive Research: Principles and Implications, 8, Article 42. https://doi.org/10.1186/s41235-023-00499-6

Benjamin, W. (2008). The Work of Art in the Age of Its Technological Reproducibility, and Other Writings on Media (M. W. Jennings, B. Doherty, and T. Y. Levin, Eds.). Belknap Press. https://doi.org/10.2307/j.ctv1nzfgns

Bhullar, R. (2024). Creative Artificial Intelligence: Exploring the Qualities of Popular AI Art Tools to Determine Effectiveness. NHSJS.

Black, G. (2018). Meeting the Audience Challenge in the “Age of Participation.” Museum Management and Curatorship, 33(4), 302–319. https://doi.org/10.1080/09647775.2018.1469097

Blanco, A. D., et al. (2024, November 11). Exploring the Neural Impact of AI-Generated Art at MoMA: An EEG Study on Refik Anadol’s Unsupervised. OSF Preprints.

Boden, M. A. (2004). The Creative Mind: Myths and Mechanisms (2nd ed.). Routledge. https://doi.org/10.4324/9780203508527

Bowen, J. P., and Giannini, T. (2019). The Digital Future for Museums. In T. Giannini and J. P. Bowen (Eds.), Museums and Digital Culture. Springer. https://doi.org/10.1007/978-3-319-97457-6_28

Cao, Y., et al. (2023). A Comprehensive Survey of AI-Generated Content (AIGC): A History of Generative AI from GAN to ChatGPT. arXiv.

Colton, S., and Wiggins, G. A. (2012). Computational Creativity: The Final Frontier? In Proceedings of the 20th European Conference on Artificial Intelligence (ECAI 2012) ( 21–26).

Jadhav, K. D. (2027). Integrating IoT, Sensors, and Machine Learning for Enhancing Crop Yield and Irrigation Efficiency Systems. In R. R. Janghel, R. Doriya, J. Lachure, and Y. K. Rathore (Eds.), Sustainable Agriculture Production Using Blockchain Technology. https://doi.org/10.1002/9781394248711.ch11

Karwande, V. S., Pawar, U. B., and Pattnaik, O. (2024). Leveraging Speech-Driven Patterns Multimodal Machine Learning Framework for Accurate Early-Stage Parkinson’s Disease Prediction: A Survey. In Proceedings of the 2nd International Conference on Advanced Computing and Communication Technologies (ICACCTech 2024) ( 525–532). https://doi.org/10.1109/ICACCTech65084.2024.00091

Patil, R. V., et al. (2025). Decentralized Autonomous Organizations as Emerging Economic Entities in Accounting and Governance Frameworks. International Journal of Accounting and Economics Studies, 12(4), 166–177. https://doi.org/10.14419/1sy2j677

Phurisikul, P., and Phuakkong, C. (2025). The Elderly Welfare Management of Local Administrative Government Organizations in Thailand. International Journal of Research and Development in Management Research, 14(1), 21–28. https://doi.org/10.65521/ijrdmr.v14i1.102

Rawandale, U. S., and Kolte, M. T. (2021). Design, Development and Analysis of Variable Bandwidth Filter Bank for Enhancing the Performance of Hearing Aid System. International Journal of Intelligent Engineering Systems, 14(6), 391–401. https://doi.org/10.22266/ijies2021.1231.35

Suri, S., Lakshman, K., Goyal, E., Goyal, G., Sood, G., Mirajkar, G. S., and Anerao, P. (2025). Emotion Modeling in Sculpture Design Using Neural Networks. ShodhKosh: Journal of Visual and Performing Arts, 3(3s), 31–40. https://doi.org/10.29121/shodhkosh.v6.i3s.2025.6756

Vasanthan, R., Balaji, P., and Dzuvichu, K. (2019). A Study on Kinaesthetic Style Preferences and Second Language Learning Requirements at the Primary Level. Journal of Advanced Research in Dynamical and Control Systems, 11(11 Special Issue), 861–869. https://doi.org/10.5373/JARDCS/V11SP11/20193108

This work is licensed under a Creative Commons Attribution 4.0 International License.

© ShodhKosh 2026. All Rights Reserved.